POLS 1140

Ideology and Issues

Updated Apr 13, 2026

Thursday

Plan for the Week

Tuesday

- Stats and POLS 1140

- Finish up discussion of polling and forecasting

- Discuss Converse (1964)

Thursday

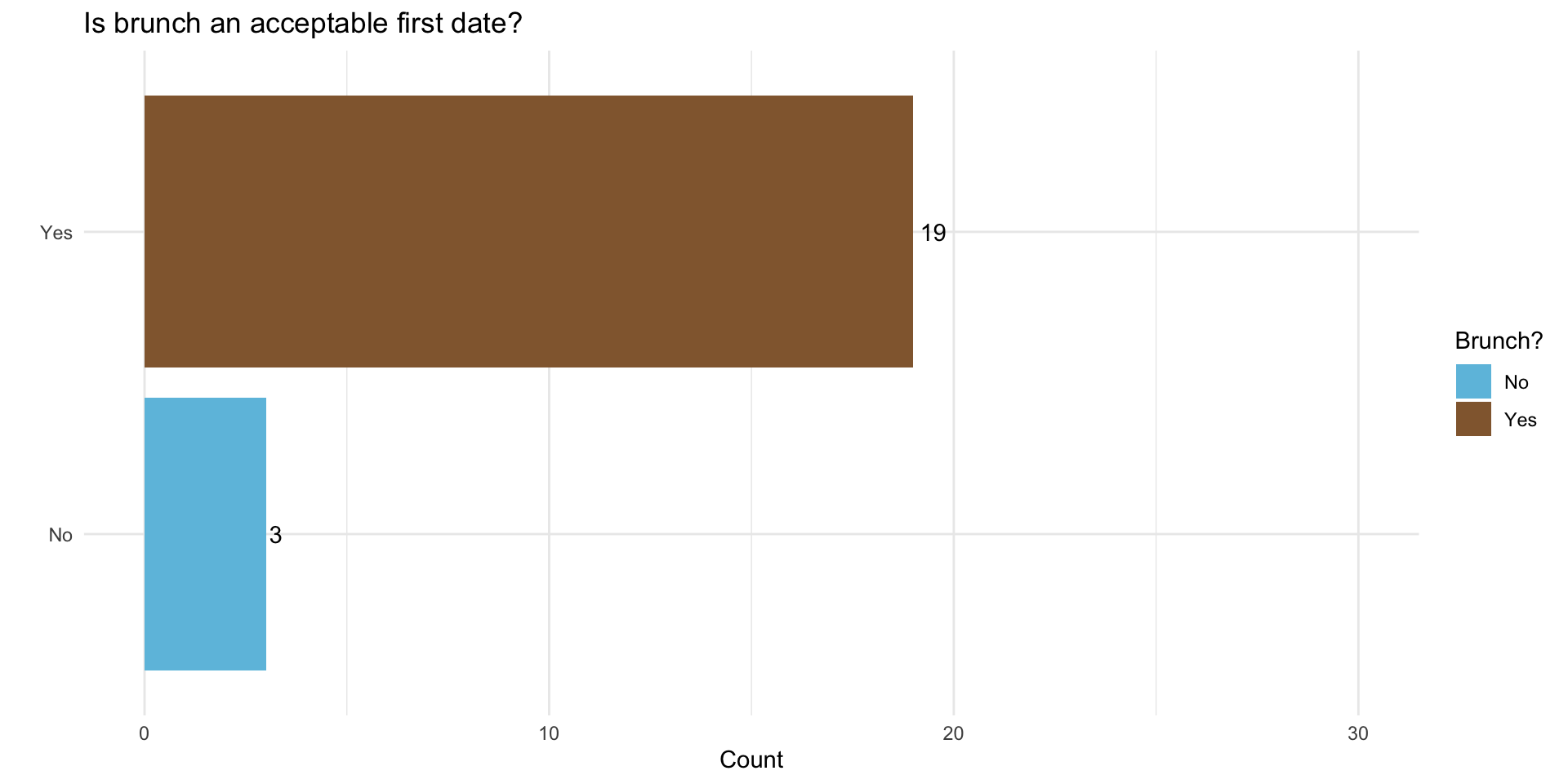

Let’s do brunch?

Eggsellent Choice

Statistics and POLS 1140 Part III

Causal claims involve counterfactual comparisons

Causal claims imply claims about counterfactuals

What would have happened if we were to change some aspect of the world?

We can represent counterfactuals in terms of potential outcomes

Individual Causal Effects

Let \(Y\) measure outcomes and \(D \in \{0,1\}\) denote the presence or absence of some treatment

For any individual, we can imagine different potential outcomes:

\[ \begin{align} Y_i(1) & & \text{Outcome under treatment}\\ Y_i(0) & & \text{Outcome under control}\\ \end{align} \]

The individual causal effect is simply the difference in these potential outcomes

\[ \begin{align} \tau_i = Y_i(1) - Y_i(0) && \text{Individual Causal Effect} \end{align} \]

The fundamental problem of causal inference is that individual causal effects are unknowable because we only observe one of many potential outcomes

- A problem of missing data

A statistical solution to the FPoCI

Rather than focus on individual causal effects:

\[ \tau_i \equiv Y_i(1) - Y_i(0) \]

We focus on average causal effects (Average Treatment Effects [ATEs]):

\[ E[\tau_i] = \overbrace{E[Y_i(1) - Y_i(0)]}^{\text{Average of a difference}} = \overbrace{E[Y_i(1)] - E[Y_i(0)]}^{\text{Difference of Averages}} \]

When does the difference of averages provide us with a good estimate of the average difference?

Let’s consider a simple example

Does eating chocolate make you happy?

\(Y_i\) happiness measured on a 0-10 scale

\(D_i\) whether a person ate chocolate \((D=1)\) or fruit \((D = 0)\)

\(Y_i(1)\) a person’s happiness eating chocolate

\(Y_i(0)\) a person’s happiness eating fruit

\(X_i\) a person’s self-reported preference \((X_i \in \{\text{chocolate}, \text{fruit}\})\)

Potential Outcomes:

| \(Y_i(1)\) | \(Y_i(0)\) | \(\tau_i\) |

|---|---|---|

| 7 | 3 | 4 |

| 8 | 6 | 2 |

| 5 | 4 | 1 |

| 4 | 3 | 1 |

| 6 | 10 | -4 |

| 8 | 9 | -1 |

| 5 | 4 | 1 |

| 7 | 8 | -1 |

| 4 | 3 | 1 |

| 6 | 0 | 6 |

| \(E[Y_i(1)]\) | \(E[Y_i(0)]\) | \(E[\tau_i]\) |

|---|---|---|

| 6 | 5 | 1 |

If we could observe everyone’s potential outcomes, we could calculate each individual causal effect (ICE) and average them to get the ATE

On average, eating chocolate increases happiness by 1 point on our 0-10 scale (ATE = 1)
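As a quick check on the arithmetic, here is a minimal Python sketch (not part of the original slides) that recomputes the individual causal effects and the ATE from the potential outcomes table. The variable names are illustrative; the numbers are copied from the table.

```python
# Potential outcomes copied from the table above: (Y_i(1), Y_i(0)) per person.
potential_outcomes = [
    (7, 3), (8, 6), (5, 4), (4, 3), (6, 10),
    (8, 9), (5, 4), (7, 8), (4, 3), (6, 0),
]

# Individual causal effects: tau_i = Y_i(1) - Y_i(0)
ice = [y1 - y0 for y1, y0 in potential_outcomes]

n = len(potential_outcomes)
mean_y1 = sum(y1 for y1, _ in potential_outcomes) / n
mean_y0 = sum(y0 for _, y0 in potential_outcomes) / n

# The average of the differences equals the difference of the averages.
average_of_differences = sum(ice) / n
difference_of_averages = mean_y1 - mean_y0

print(ice)                                             # [4, 2, 1, 1, -4, -1, 1, -1, 1, 6]
print(average_of_differences, difference_of_averages)  # 1.0 1.0
```

In a real study we never see both columns for the same person, which is why this calculation is only possible in a made-up example.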

Suppose we conducted a study and let folks select what they wanted to eat.

Potential Outcomes:

| \(Y_i(1)\) | \(Y_i(0)\) | \(\tau_i\) |

|---|---|---|

| 7 | 3 | 4 |

| 8 | 6 | 2 |

| 5 | 4 | 1 |

| 4 | 3 | 1 |

| 6 | 10 | -4 |

| 8 | 9 | -1 |

| 5 | 4 | 1 |

| 7 | 8 | -1 |

| 4 | 3 | 1 |

| 6 | 0 | 6 |

| \(E[Y_i(1)]\) | \(E[Y_i(0)]\) | \(ATE\) |

|---|---|---|

| 6 | 5 | 1 |

Observed Treatment:

| \(x_i\) | \(d_i\) | \(y_i\) |

|---|---|---|

| chocolate | 1 | 7 |

| chocolate | 1 | 8 |

| chocolate | 1 | 5 |

| chocolate | 1 | 4 |

| fruit | 0 | 10 |

| fruit | 0 | 9 |

| chocolate | 1 | 5 |

| fruit | 0 | 8 |

| chocolate | 1 | 4 |

| chocolate | 1 | 6 |

| \(\bar{y}_{d=1}\) | \(\bar{y}_{d=0}\) | \(\hat{ATE}\) |

|---|---|---|

| 5.57 | 9 | -3.43 |

Selection Bias

Our estimate of the ATE is biased by the fact that folks who prefer fruit seem to be happier than folks who prefer chocolate in this example

In general, selection bias occurs when folks who receive the treatment differ systematically from folks who don’t
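One way to see where the bias comes from is the standard decomposition of the naive difference in means into the average effect among the treated (ATT) plus a selection bias term, \(E[Y_i(0)|D_i=1] - E[Y_i(0)|D_i=0]\). The sketch below is an illustration added here, not something from the slides; it assumes people simply eat the food they say they prefer, as in the observed treatment table.

```python
# (Y_i(1), Y_i(0), d_i) from the tables above. Assumption: people pick the food
# they say they prefer, so d_i = 1 exactly when x_i = chocolate.
people = [
    (7, 3, 1), (8, 6, 1), (5, 4, 1), (4, 3, 1), (6, 10, 0),
    (8, 9, 0), (5, 4, 1), (7, 8, 0), (4, 3, 1), (6, 0, 1),
]

def mean(values):
    values = list(values)
    return sum(values) / len(values)

treated = [p for p in people if p[2] == 1]
control = [p for p in people if p[2] == 0]

# What we can compute from observed data alone
naive = mean(y1 for y1, _, _ in treated) - mean(y0 for _, y0, _ in control)

# Pieces that need the (normally unobservable) potential outcomes
att = mean(y1 - y0 for y1, y0, _ in treated)
selection_bias = mean(y0 for _, y0, _ in treated) - mean(y0 for _, y0, _ in control)

print(round(naive, 2))           # -3.43
print(round(att, 2))             # 2.29
print(round(selection_bias, 2))  # -5.71
```

The chocolate eaters would have been much less happy than the fruit eaters even without chocolate, and that gap swamps the positive effect chocolate has on them.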

What if, instead of letting people pick and choose, we randomly assigned half our respondents to receive chocolate and half to receive fruit?

Potential Outcomes:

| \(Y_i(1)\) | \(Y_i(0)\) | \(\tau_i\) |

|---|---|---|

| 7 | 3 | 4 |

| 8 | 6 | 2 |

| 5 | 4 | 1 |

| 4 | 3 | 1 |

| 6 | 10 | -4 |

| 8 | 9 | -1 |

| 5 | 4 | 1 |

| 7 | 8 | -1 |

| 4 | 3 | 1 |

| 6 | 0 | 6 |

| \(E[Y_i(1)]\) | \(E[Y_i(0)]\) | \(ATE\) |

|---|---|---|

| 6 | 5 | 1 |

Randomly Assigned Treatment:

| \(x_i\) | \(d_i\) | \(y_i\) |

|---|---|---|

| chocolate | 1 | 7 |

| chocolate | 1 | 8 |

| chocolate | 0 | 4 |

| chocolate | 1 | 4 |

| fruit | 0 | 10 |

| fruit | 1 | 8 |

| chocolate | 0 | 4 |

| fruit | 0 | 8 |

| chocolate | 1 | 4 |

| chocolate | 0 | 0 |

| \(\bar{y}_{d=1}\) | \(\bar{y}_{d=0}\) | \(\hat{ATE}\) |

|---|---|---|

| 6.2 | 5.2 | 1 |

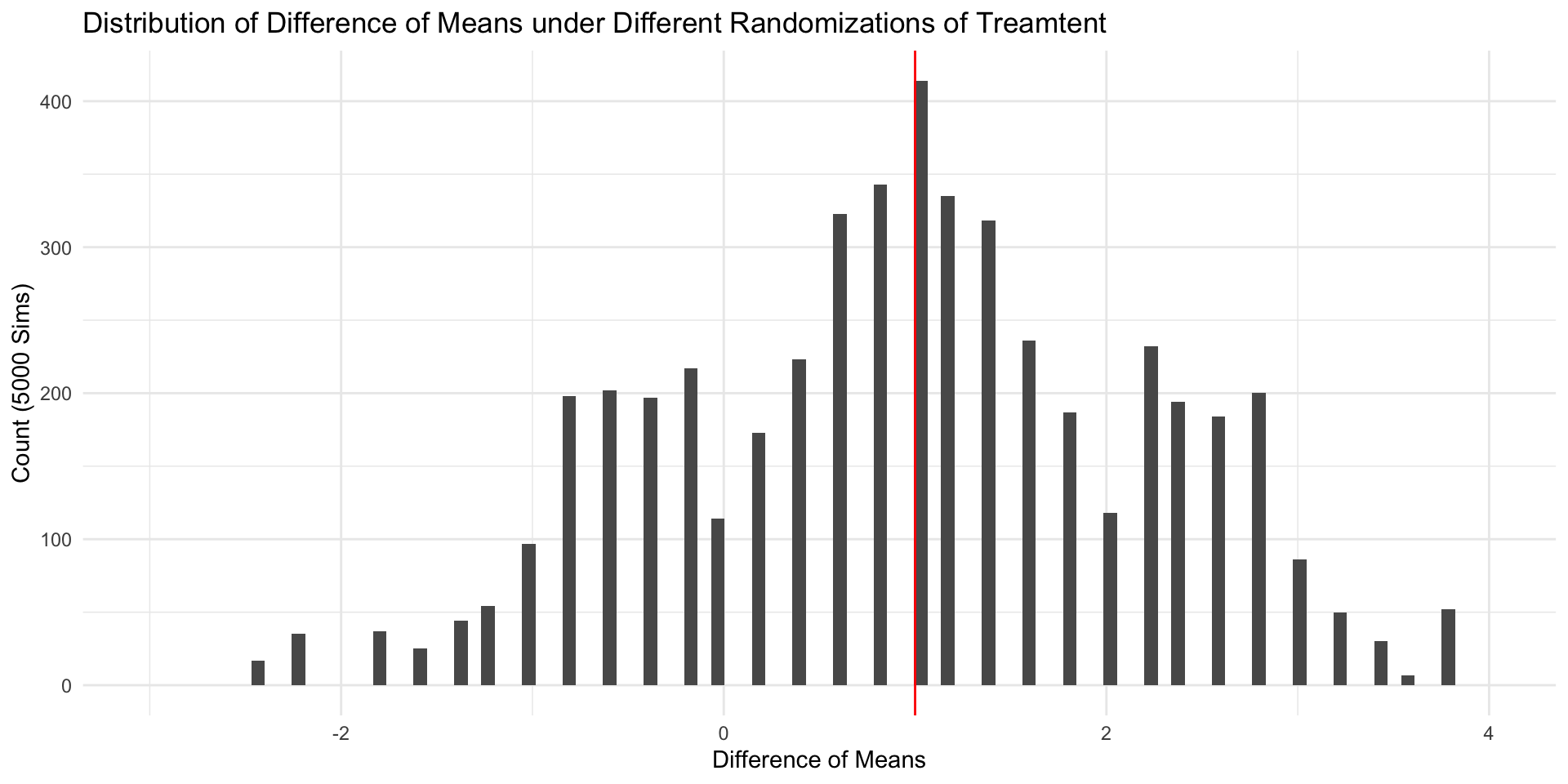

Random Assignment

When treatment has been randomly assigned, a difference in sample means provides an unbiased estimate of the ATE

The fact that our \(\hat{ATE} = ATE\) in this example is pure coincidence.

If we randomly assigned treatment a different way, we’d get a different estimate.

In general, unbiased estimators will tend to be neither too high nor too low (i.e. \(E[\hat{\theta} - \theta] = 0\))

Estimating an Average Treatment Effect

If treatment has been randomly assigned, we can estimate the ATE by taking the difference of means between treatment and control:

\[ \begin{align*} E \left[ \frac{\sum_1^m Y_i}{m}-\frac{\sum_{m+1}^N Y_i}{N-m}\right]&=\overbrace{E \left[ \frac{\sum_1^m Y_i}{m}\right]}^{\substack{\text{Average outcome}\\ \text{among treated}\\ \text{units}}} -\overbrace{E \left[\frac{\sum_{m+1}^N Y_i}{N-m}\right]}^{\substack{\text{Average outcome}\\ \text{among control}\\ \text{units}}}\\ &= E [Y_i(1)|D_i=1] -E[Y_i(0)|D_i=0] \end{align*} \]

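Under random assignment, treatment status is independent of potential outcomes, so \(E[Y_i(1)|D_i=1] = E[Y_i(1)]\) and \(E[Y_i(0)|D_i=0] = E[Y_i(0)]\). That is, the ATE is causally identified by the difference of means estimator in an experimental design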

Random Assignment 1

| \(x_i\) | \(d_i\) | \(y_i\) |

|---|---|---|

| chocolate | 1 | 7 |

| chocolate | 1 | 8 |

| chocolate | 0 | 4 |

| chocolate | 1 | 4 |

| fruit | 0 | 10 |

| fruit | 1 | 8 |

| chocolate | 0 | 4 |

| fruit | 0 | 8 |

| chocolate | 1 | 4 |

| chocolate | 0 | 0 |

| \(\bar{y}_{d=1}\) | \(\bar{y}_{d=0}\) | \(\hat{ATE}\) |

|---|---|---|

| 6.2 | 5.2 | 1 |

Random Assignment 2

| \(x_i\) | \(d_i\) | \(y_i\) |

|---|---|---|

| chocolate | 0 | 3 |

| chocolate | 1 | 8 |

| chocolate | 0 | 4 |

| chocolate | 1 | 4 |

| fruit | 1 | 6 |

| fruit | 1 | 8 |

| chocolate | 0 | 4 |

| fruit | 1 | 7 |

| chocolate | 0 | 3 |

| chocolate | 0 | 0 |

| \(\bar{y}_{d=1}\) | \(\bar{y}_{d=0}\) | \(\hat{ATE}\) |

|---|---|---|

| 6.6 | 2.8 | 3.8 |

Random Assignment 3

| \(x_i\) | \(d_i\) | \(y_i\) |

|---|---|---|

| chocolate | 1 | 7 |

| chocolate | 0 | 6 |

| chocolate | 1 | 5 |

| chocolate | 1 | 4 |

| fruit | 0 | 10 |

| fruit | 0 | 9 |

| chocolate | 0 | 4 |

| fruit | 1 | 7 |

| chocolate | 1 | 4 |

| chocolate | 0 | 0 |

| \(\bar{y}_{d=1}\) | \(\bar{y}_{d=0}\) | \(\hat{ATE}\) |

|---|---|---|

| 5.4 | 5.8 | -0.4 |

Distribution of Sample ATEs
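With only 10 people, we can list every possible way of assigning exactly five of them to chocolate and compute the difference in means for each. The Python sketch below is an illustration added here (not from the original slides); it assumes complete random assignment of half the sample and reuses the potential outcomes table.

```python
from itertools import combinations

# Potential outcomes from the table above: (Y_i(1), Y_i(0)) per person.
potential_outcomes = [
    (7, 3), (8, 6), (5, 4), (4, 3), (6, 10),
    (8, 9), (5, 4), (7, 8), (4, 3), (6, 0),
]
n = len(potential_outcomes)

def diff_in_means(treated_ids):
    """Difference in observed means for one assignment of people to chocolate."""
    treated = [potential_outcomes[i][0] for i in range(n) if i in treated_ids]
    control = [potential_outcomes[i][1] for i in range(n) if i not in treated_ids]
    return sum(treated) / len(treated) - sum(control) / len(control)

# Every way of assigning exactly half of the 10 people to chocolate
estimates = [diff_in_means(set(ids)) for ids in combinations(range(n), n // 2)]

mean_estimate = sum(estimates) / len(estimates)
print(len(estimates))             # 252 possible assignments
print(round(mean_estimate, 6))    # 1.0, the true ATE
print(min(estimates), max(estimates))
```

Any single assignment can land above or below the truth, but the estimates average out to the ATE across all assignments. That is what unbiasedness means here.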

Observational vs Experimental Designs

Experimental designs are studies in which a causal variable of interest, the treatment, is manipulated by the researcher to examine its causal effects on some outcome of interest

Observational designs are studies in which a causal variable of interest is determined by someone/thing other than the researcher (nature, governments, people, etc.)

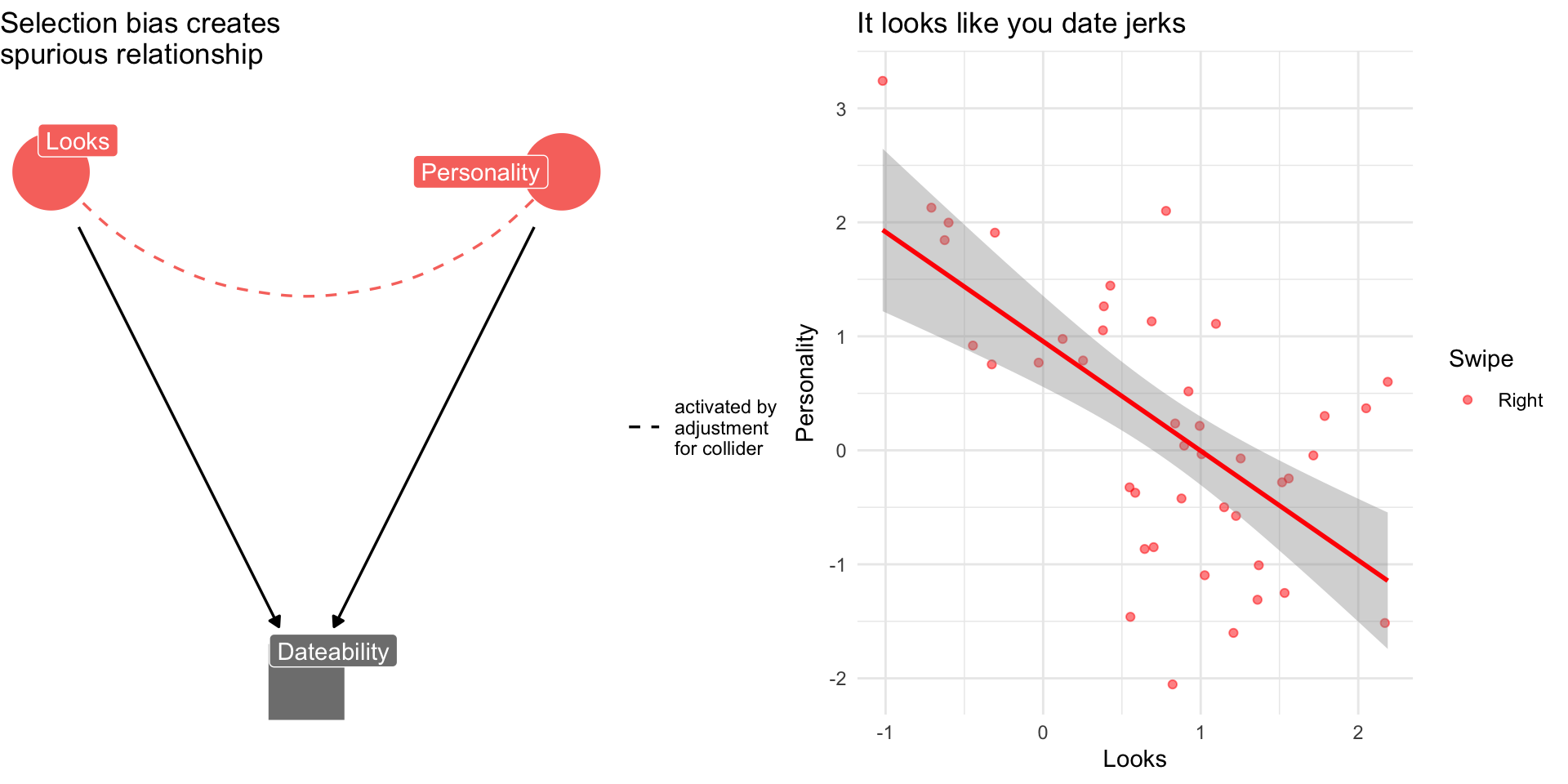

Two Kinds of Bias

- Confounder bias: Failing to control for a common cause of \(D\) and \(Y\) (aka Omitted Variable Bias)

- Collider bias: Controlling for a common consequence of \(D\) and \(Y\)

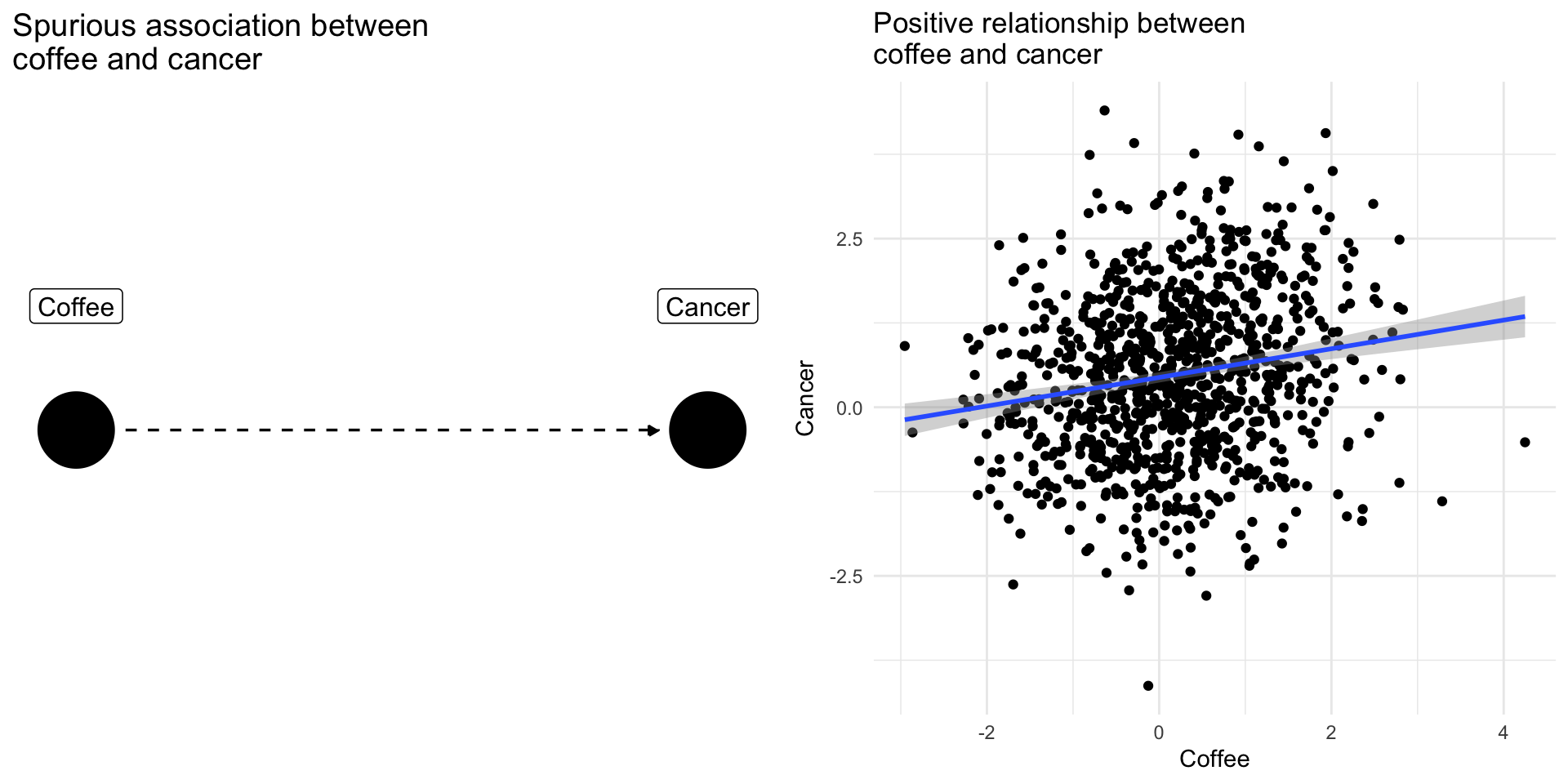

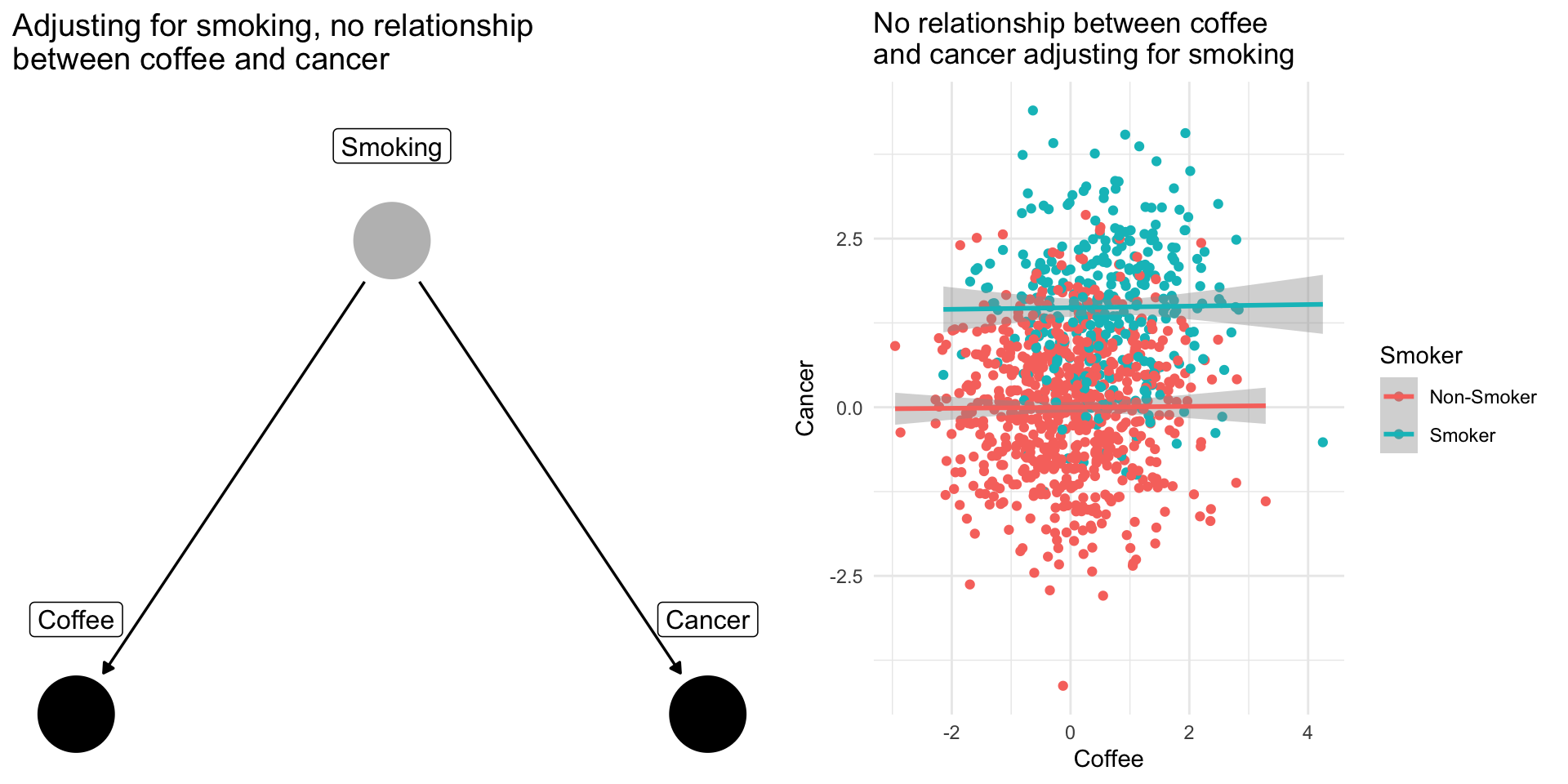

Confounding Bias: The Coffee Example

Drinking coffee doesn’t cause lung cancer, but we might find a correlation between them because they share a common cause: smoking.

Smoking is a confounding variable that, if omitted, will bias our results, producing a spurious relationship

Adjusting for confounders removes this source of bias
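A small simulation can make the coffee example concrete. The sketch below is illustrative only: every probability in it is a made-up assumption, chosen so that smoking raises both coffee drinking and lung cancer while coffee has no effect on cancer.

```python
import random

random.seed(1140)

def simulate_person():
    # All probabilities are hypothetical, chosen only for illustration.
    smoker = random.random() < 0.3
    coffee = random.random() < (0.8 if smoker else 0.4)    # smoking -> coffee
    cancer = random.random() < (0.15 if smoker else 0.01)  # smoking -> cancer; coffee plays no role
    return smoker, coffee, cancer

people = [simulate_person() for _ in range(200_000)]

def cancer_rate(rows):
    return sum(cancer for _, _, cancer in rows) / len(rows)

def coffee_gap(rows):
    """Cancer rate among coffee drinkers minus the rate among non-drinkers."""
    rows = list(rows)
    drinkers = [r for r in rows if r[1]]
    abstainers = [r for r in rows if not r[1]]
    return cancer_rate(drinkers) - cancer_rate(abstainers)

naive_gap = coffee_gap(people)                                # ignores smoking
gap_among_smokers = coffee_gap(r for r in people if r[0])     # holds smoking fixed
gap_among_nonsmokers = coffee_gap(r for r in people if not r[0])

print(round(naive_gap, 3))
print(round(gap_among_smokers, 3), round(gap_among_nonsmokers, 3))
```

The naive comparison shows coffee drinkers with clearly higher cancer rates, while the gaps within smokers and within non-smokers are close to zero. Comparing like with like is what "adjusting for" smoking does here.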

Note

When scholars include “control variables” in a regression, often they are trying to adjust for confounding variables that if omitted would bias their results

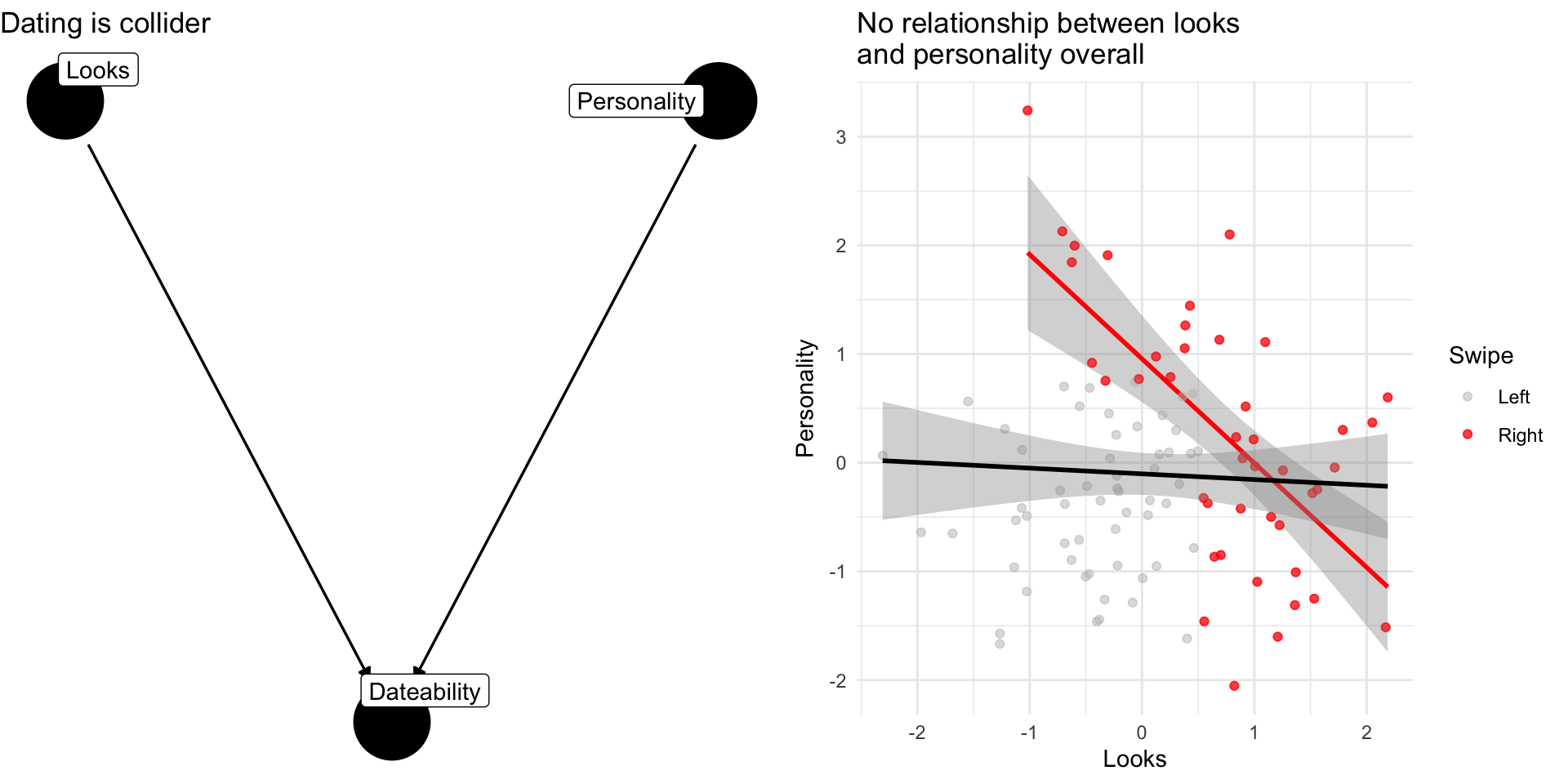

Collider Bias: The Dating Example

Why are attractive people such jerks?

Suppose dating is a function of looks and personality

Dating is a common consequence of looks and personality

Basing our claim off of who we date is an example of selection bias created by controlling for a collider
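The dating example can also be simulated. This is a sketch under loud assumptions that are not in the slides: looks and personality are independent standard normal scores, and we only date people whose combined score clears an arbitrary bar of 1.5.

```python
import random

random.seed(1140)

def pearson(xs, ys):
    """Pearson correlation computed from scratch (standard library only)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

# Assumption: looks and personality are unrelated in the full population.
population = [(random.gauss(0, 1), random.gauss(0, 1)) for _ in range(50_000)]

# Assumption: we only date people whose looks + personality exceed a threshold.
dated = [(looks, pers) for looks, pers in population if looks + pers > 1.5]

r_everyone = pearson([l for l, _ in population], [p for _, p in population])
r_dated = pearson([l for l, _ in dated], [p for _, p in dated])

print(round(r_everyone, 2))  # close to 0: no relationship in the population
print(round(r_dated, 2))     # clearly negative among the people we date
```

Conditioning on dating, the common consequence, manufactures a negative relationship between looks and personality that does not exist in the population.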

Note

If you see a regression model that controls for everything and the kitchen sink without theoretical justification, we might worry about the potential for collider bias

When to control for a variable:

Causal Inference

Causal inference is about making credible counterfactual comparisons

In an experiment, researchers create these comparisons through random assignment

- Pro: Addresses concerns about selection bias

- Con: Do results generalize?

In an observational study, researchers also attempt to make credible counterfactual comparisons through how they design their studies and analyze their data.

- Pro: May generalize better/greater ecological validity

- Con: Greater potential for confounder and collider bias

In general, characteristics of the design are more important than the specific variables in a given model for addressing bias

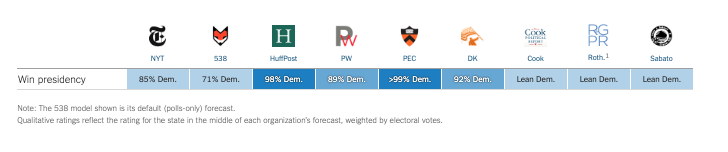

Using polls to forecast elections

Forecasting Elections

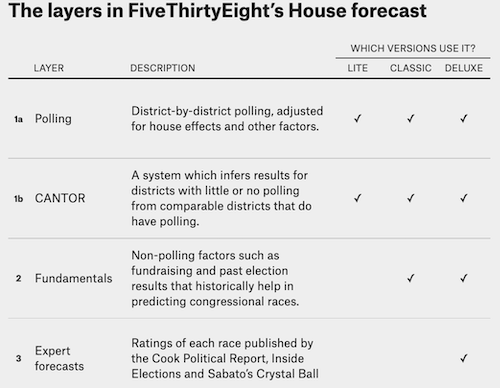

Election forecasts reflect varying combinations of:

- Expert Opinion

- Fundamentals

- Polling

Forecasts differ in the extent to which they rely on these components and how they integrate them in their final predictions

FiveThirtyEight’s Approach to Forecasting

Under Nate Silver…

Forecasting Elections with Polls

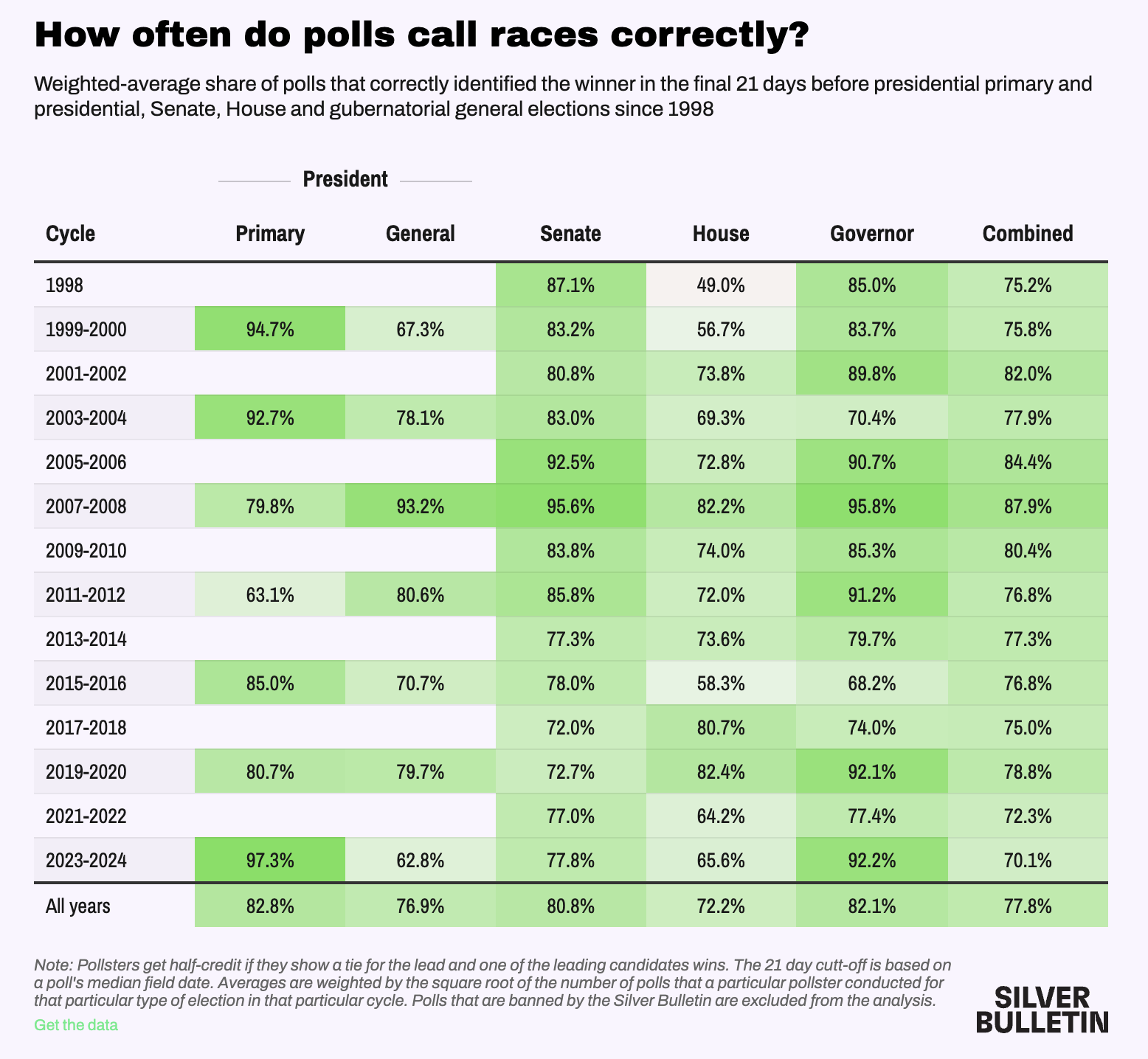

The preeminence of polling in modern forecasts reflects the success of Nate Silver and FiveThirtyEight in correctly predicting the 2008 (49/50 states correct) and 2012 (50/50) presidential elections

Any one poll is likely to deviate from the true outcome

Averaging over multiple polls \(\to\) more accurate predictions than any one poll, provided…

the polls aren’t systematically biased
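The logic of poll averaging, and its limit, can be sketched with a short simulation. Everything here is a hypothetical assumption for illustration: a true vote share of 52%, 20 polls of 800 respondents each, a normal approximation to sampling error, and (in the second scenario) a shared 3-point bias against the leading candidate.

```python
import random
import statistics

random.seed(1140)

# All of these values are hypothetical.
TRUE_SUPPORT = 0.52
POLL_SIZE = 800
N_POLLS = 20
SAMPLING_SD = (TRUE_SUPPORT * (1 - TRUE_SUPPORT) / POLL_SIZE) ** 0.5

def simulate(shared_bias, n_elections=2000):
    """Mean absolute error of a single poll vs. a poll average."""
    single_errors, average_errors = [], []
    for _ in range(n_elections):
        # Each poll = truth + bias shared by all polls + its own sampling noise
        polls = [random.gauss(TRUE_SUPPORT + shared_bias, SAMPLING_SD)
                 for _ in range(N_POLLS)]
        single_errors.append(abs(polls[0] - TRUE_SUPPORT))
        average_errors.append(abs(statistics.mean(polls) - TRUE_SUPPORT))
    return statistics.mean(single_errors), statistics.mean(average_errors)

single_unbiased, avg_unbiased = simulate(shared_bias=0.0)
single_biased, avg_biased = simulate(shared_bias=-0.03)

print(round(single_unbiased, 4), round(avg_unbiased, 4))
print(round(single_biased, 4), round(avg_biased, 4))
```

Averaging cancels random sampling error, so the poll average beats any single poll when errors are independent. It does nothing for an error that every poll shares, which is the situation the next slides describe.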

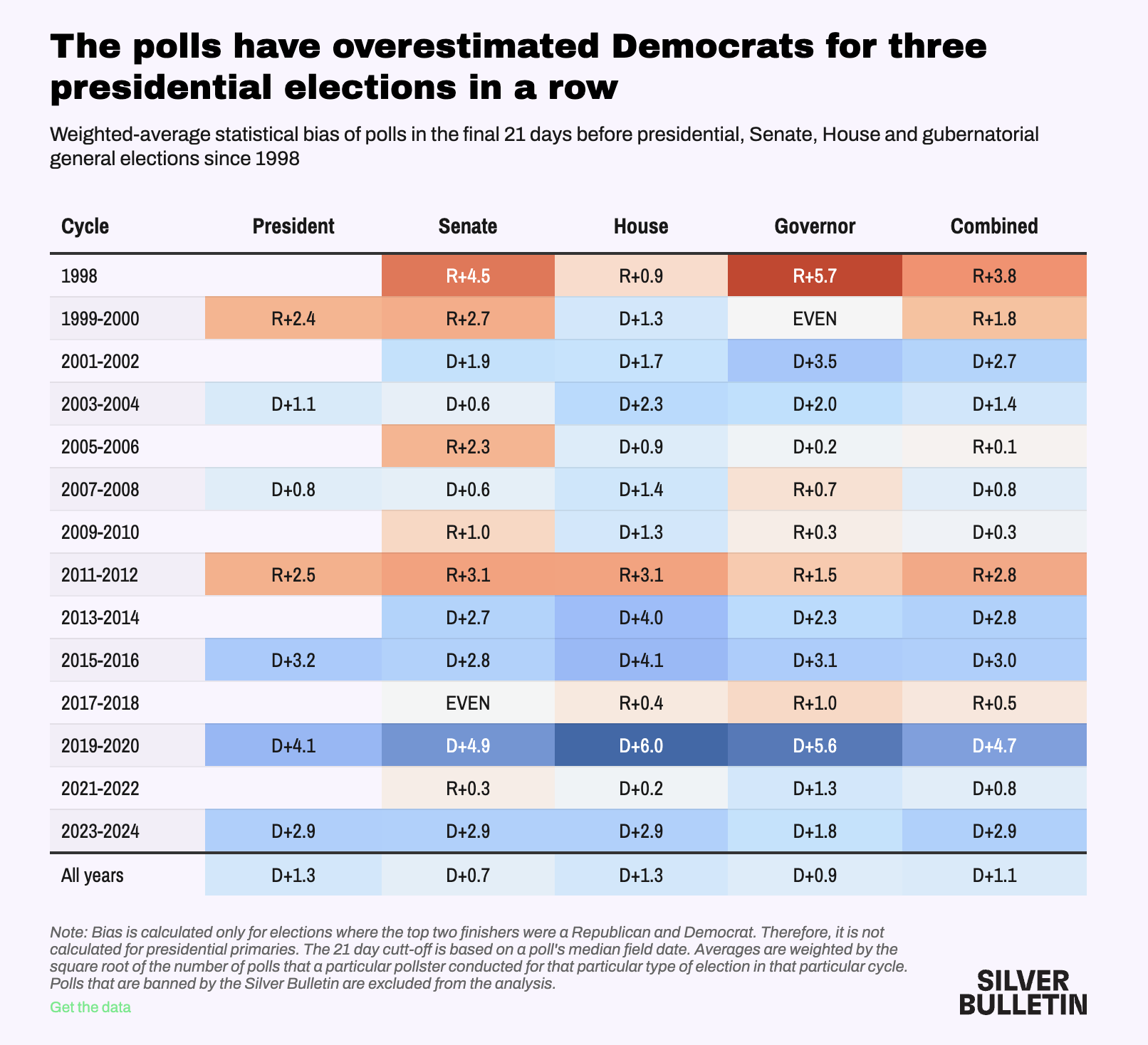

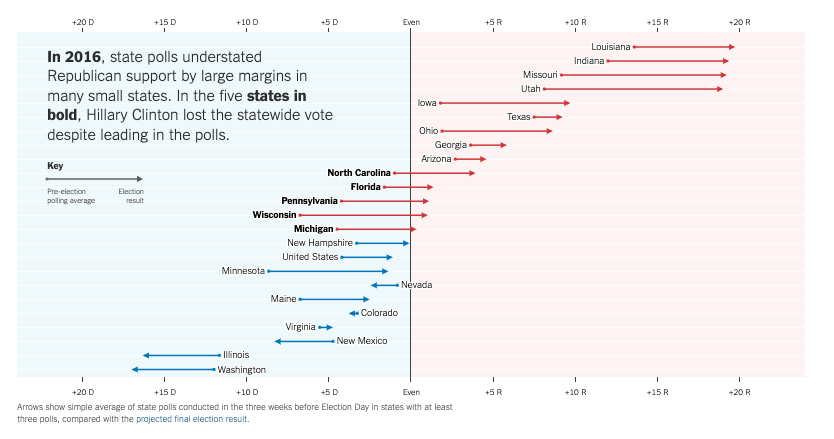

Concerns about the polls reflect the failure of such approaches to predict

Trump’s Victory in 2016

Strength of Trump’s Support in 2020 and 2024

Polling in Recent Elections

Polling the 2016 Election:

- The polls missed bigly

- National polls were reasonably accurate (Clinton wins Popular Vote)

- State polls overstated Clinton’s lead / understated Trump support

How did we get it so wrong in 2016?

Some likely explanations

Likely voter models overstated Clinton’s support

Large number of undecided voters broke decisively for Trump

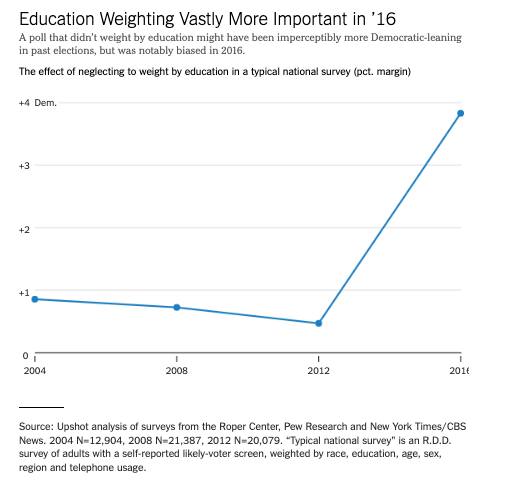

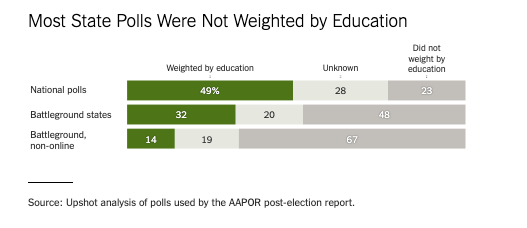

White voters without a college degree underrepresented in pre-election surveys

Weighting for education

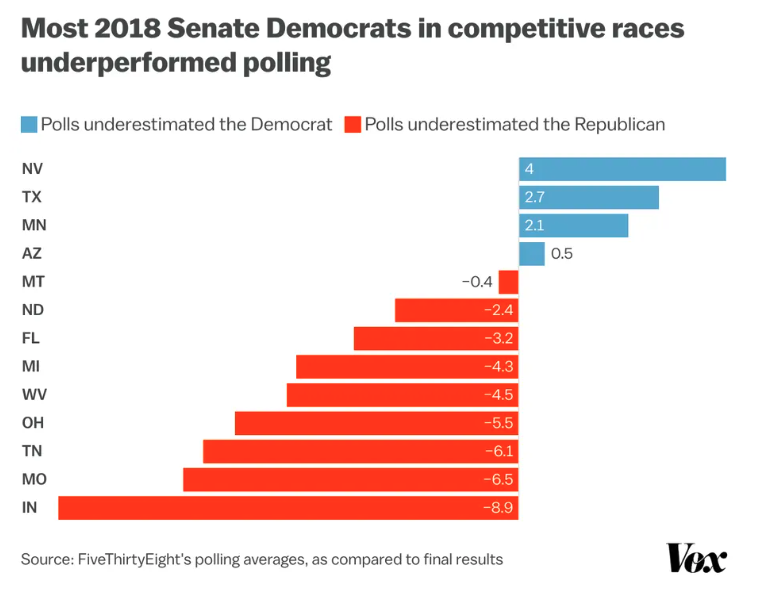

2018: A brief reprieve?

Polls did a better job

- Most state polls weighted by education

- Underestimated Democrats in House and Gubernatorial races

- No partisan bias in Senate Races

Forecasts correctly call:

- Democratic House

- Republican Senate

However…

2020: Historic Problems, Unclear Solutions

Average polling errors for the national popular vote were 4.5 percentage points, the highest in 40 years

Polls overstated Biden’s support by 3.9 points in national polls (4.3 points in state polls)

Polls overstated Democratic support in Senate and Gubernatorial races by about 6 points

Forecasts predicted Democrats would hold

- 48-55 seats in the Senate (actual: 50 seats)

- 225-254 seats in the House (actual: 222 seats)

2020: What Went Wrong

Unlike 2016, no clear-cut explanations for what went wrong

Not a cause:

- Undecided voters

- Failing to weight for education

- Other demographic imbalances

- “Shy Trump Voters”

- Polling early vs election day voters

Potential Explanations

- Covid-19

- Democrats more likely to take polls

- Unit non-response

- Between parties

- Within parties

- Across new and unaffiliated voters

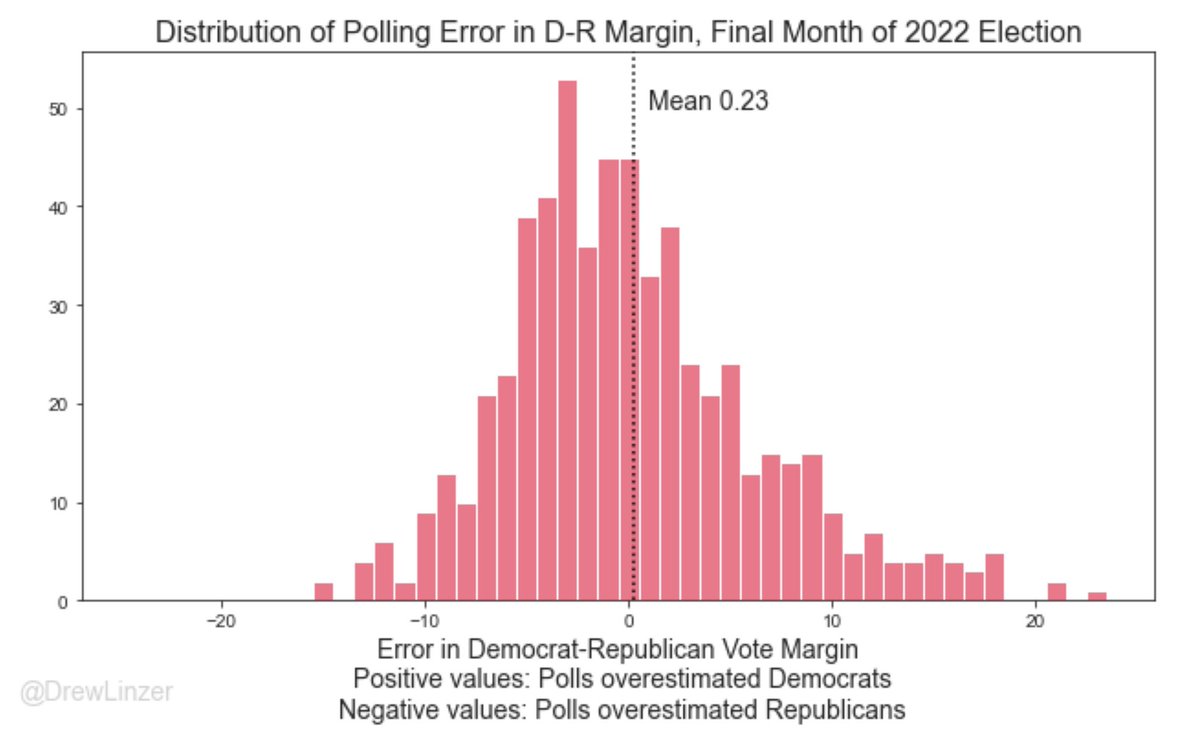

How the polls did in 2022

Overall, pretty good

Average error close to 0

Average absolute error ~ 4.5 percentage points

Some polls tended to overstate Republican support (e.g. Trafalgar)

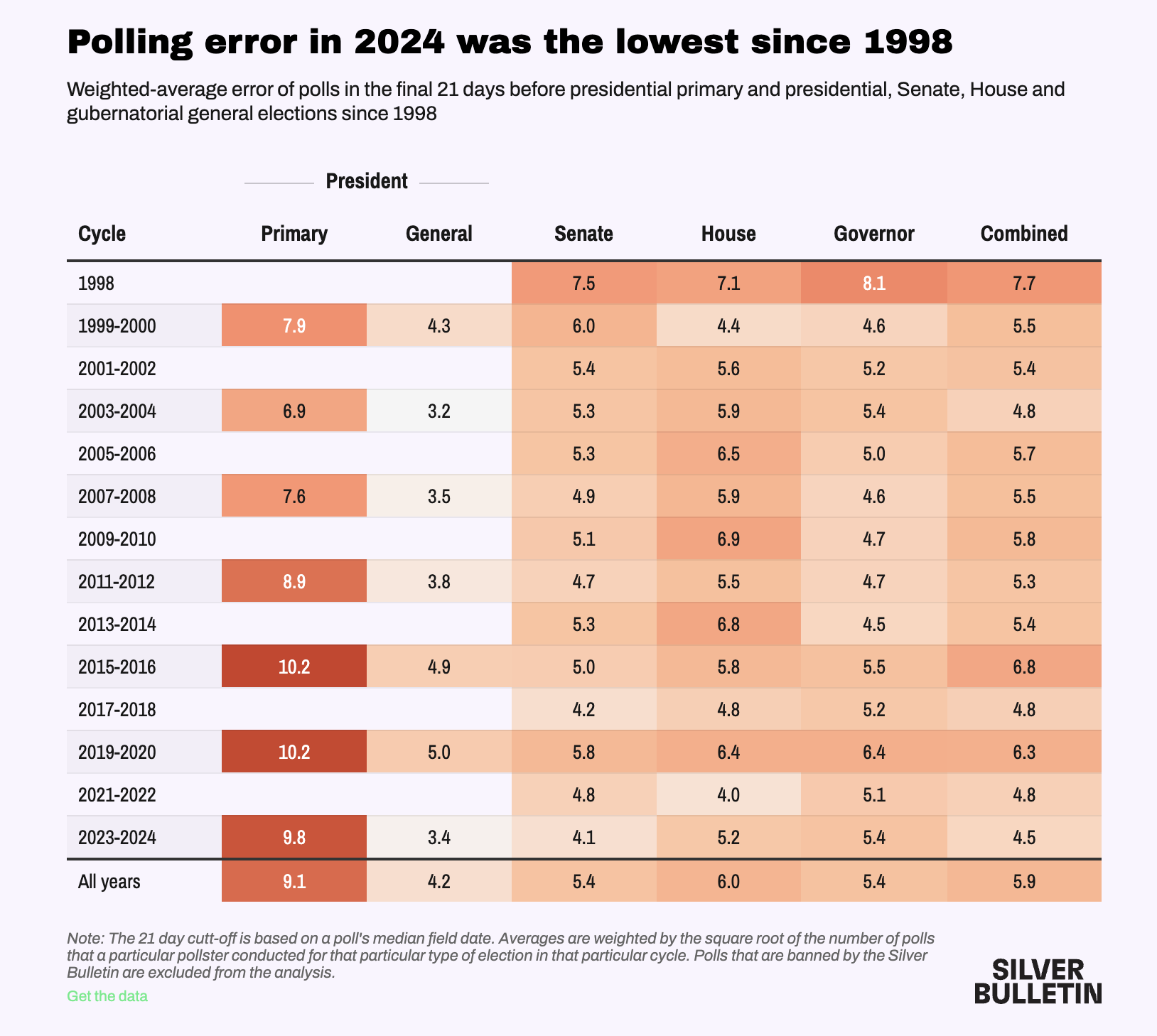

How the polls did in 2024

Good and bad news (Silver Bulletin)

Good:

- Average Polling Error within historical norms

Bad:

Consistently underestimate Trump/Republican support in Presidential election years

Bad job of calling close races

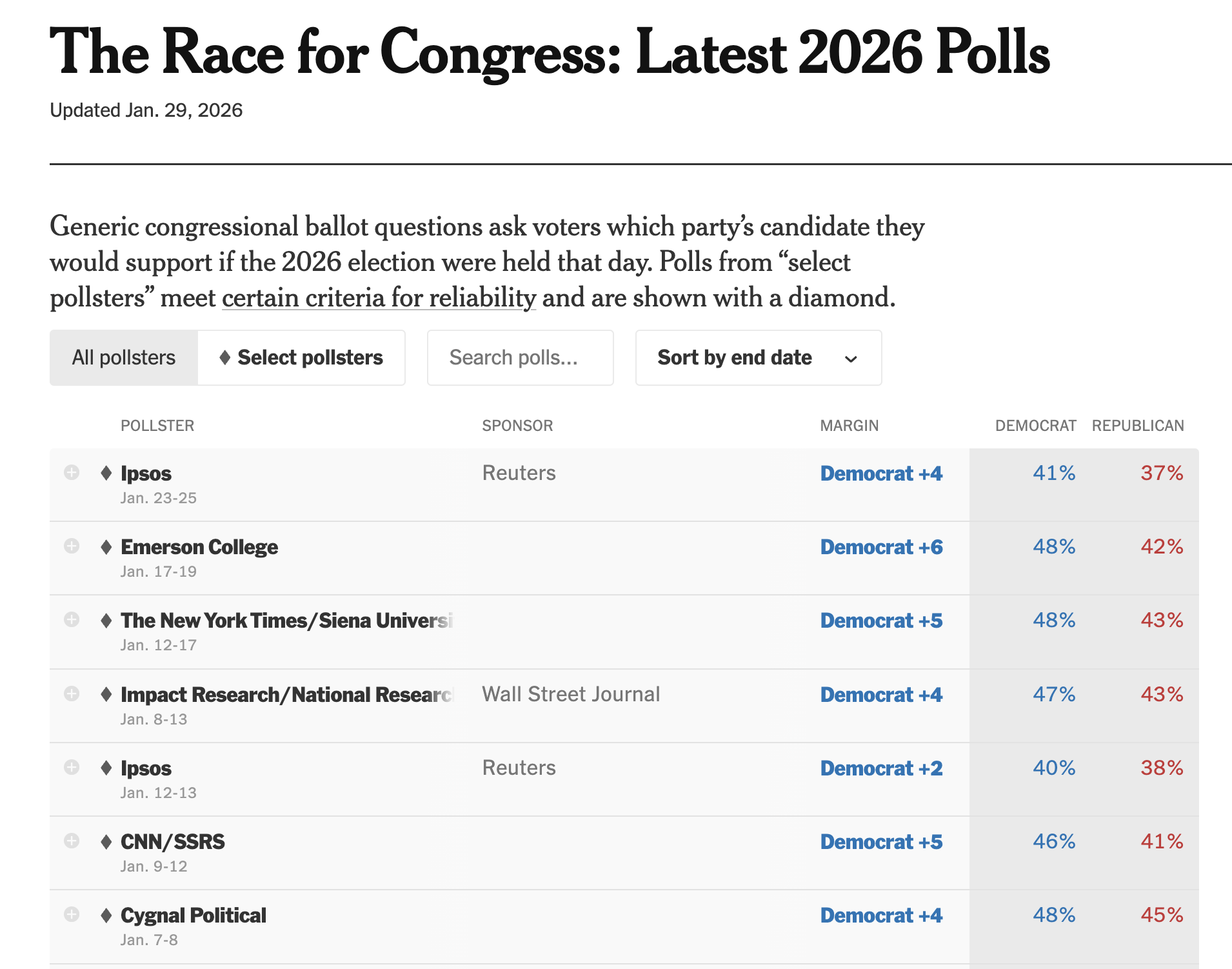

What to expect for 2026 Midterms

Democrats hold a 3-5 point lead in generic ballots

Polling traditionally better when Trump’s not on the ballot

Lots can change between now and November (Temporal Error…)

Converse (1964)

Goals

This week’s readings are HARD

Our goal is to answer the following:

- What’s the research question

- What’s the theoretical framework

- What’s the empirical design

- What are the results

- What are the conclusions

The Structure of Converse (1964)

- Introduction

- Some Clarification of Terms

- Sources of Constraint on Idea Elements

- Active Use of Ideological Dimensions of Judgement

- Recognition of Ideological Dimensions of Judgement

- Constraints among idea-elements

- Social Groupings as central objects in belief systems

- The Stability of belief elements over time

- Issue Publics

- Summary

- Conclusion

Introduction

Converse introduces the concept of belief systems and tells us this article is about the contrast between the belief systems held by political elites and the mass public

He gestures towards a hierarchy of belief strata and the importance of belief systems for democratic theory

Kind of a slow start

Some Clarification of Terms

Converse defines his core concepts

Belief Systems \(\sim\) Ideology

Idea elements \(\sim\) Attitudes

Constraint:

- The interdependence of ideas in a belief system

- A sense of what goes with what

Centrality:

- How likely a belief is to change?

Range:

- The diversity of topics

Sources of Constraint

(Theoretical Framework)

Converse lays out some plausible sources of ideological constraint:

Logical: More spending + Less taxes -> Bigger deficits

Psychological: “the quasi-logic of cogent arguments”

Social: Social diffusion of information -> creates perceptions of what goes with what

Converse also offers a definition of the well-informed person who understands what goes with what but can also articulate why.

Consequences of declining information for belief systems

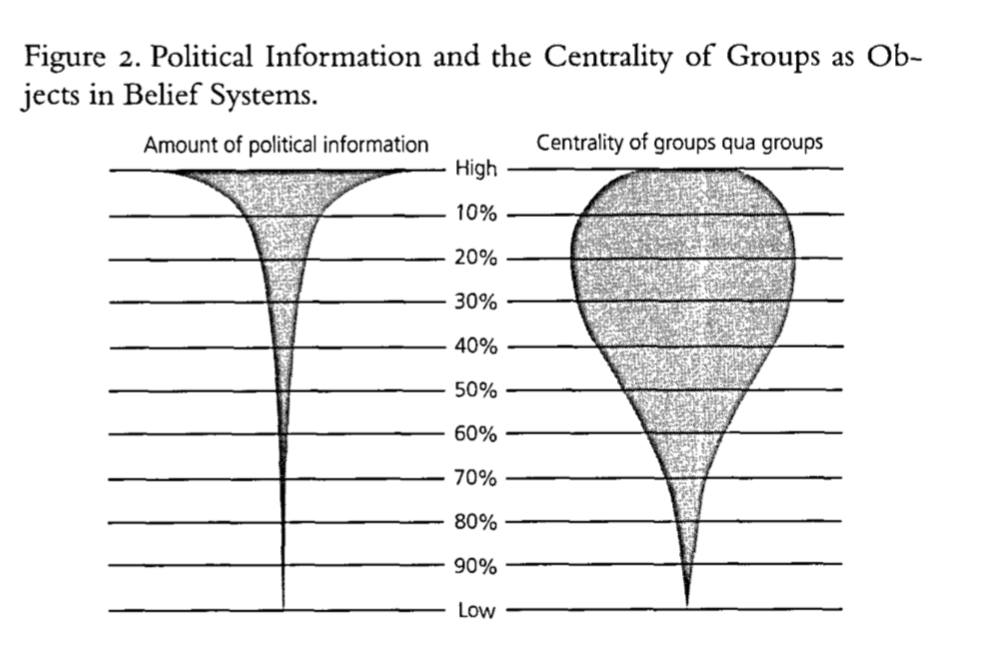

Converse argues as we move from the well-informed to uninformed, several things happen:

- Belief systems lose constraint

- Social groups replace ideological principles in centrality

So how does he go about showing this?

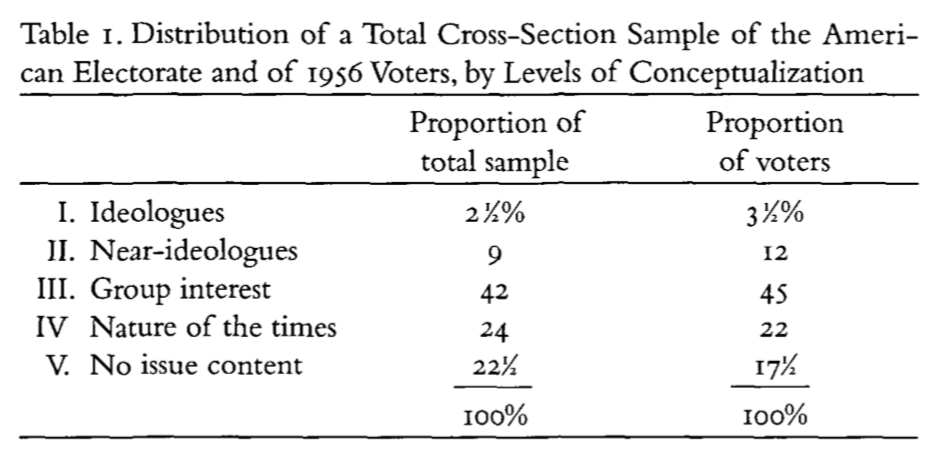

Active Use of Ideological Dimensions of Judgement

Converse considers people’s open-ended responses to questions about whether there is anything they like or dislike about the presidential candidates and the political parties in 1956, discussed in detail in chapter 10 of The American Voter

Active Use of Ideological Dimensions of Judgement

The American Voter

Ideologues:

Well, the Democratic Party tends to favor socialized medicine and I’m being influenced in that because I came from a doctor’s family.

Group Benefits:

Well I just don’t believe they’re for the common people

Nature of the times:

My husband’s job is better. … My husband is a furrier and when people get money they buy furs

No Content:

I hate the darned backbiting

Recognition of Ideological Dimensions of Judgement

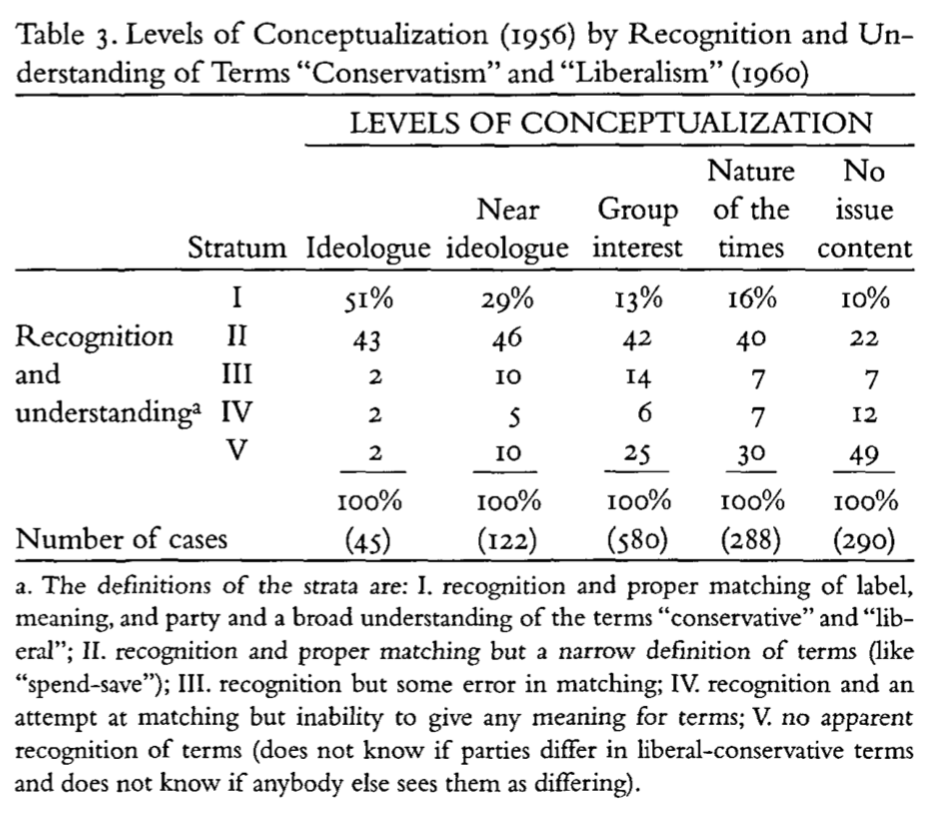

Next, Converse compares these levels of conceptualization in 1956 with people’s ability to attach the correct ideological labels to the political parties

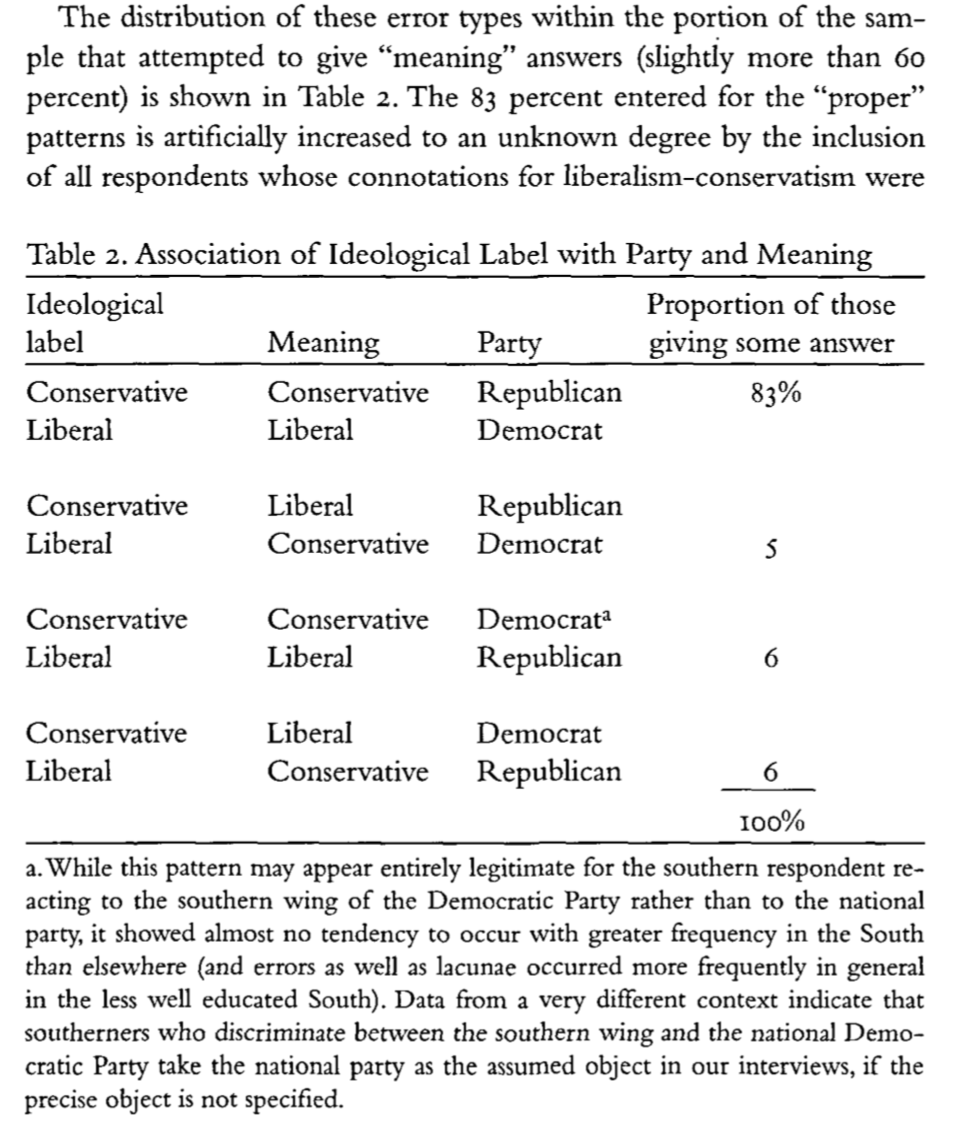

Overall, most respondents label Democrats as the liberal and Republicans as the conservative party (Table 2)

But the depth of this understanding appears quite shallow (e.g. spend vs save) (Table 3)

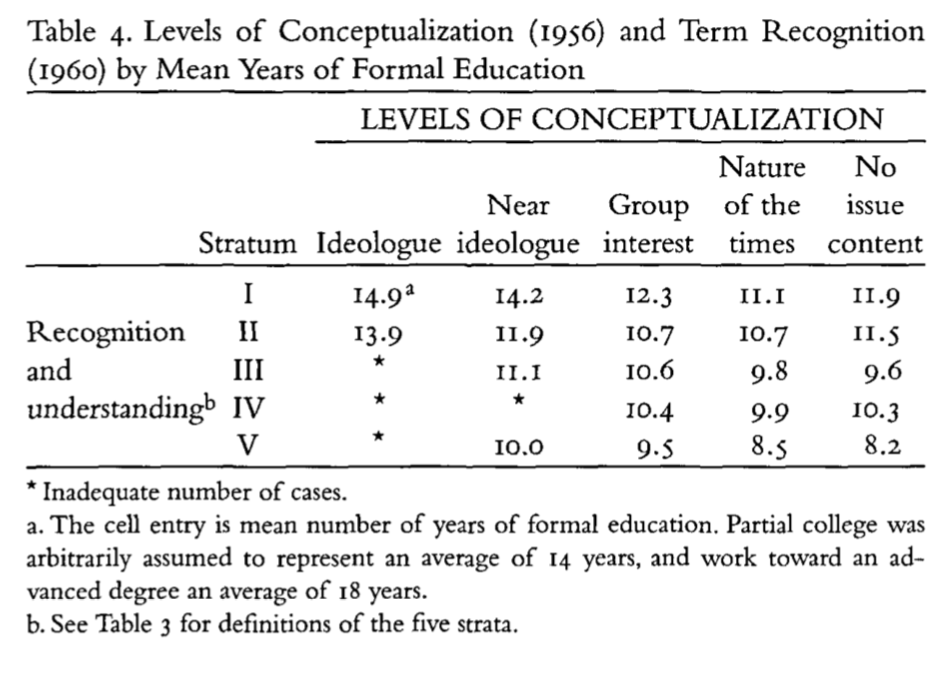

Recognition varies with education (Table 4)

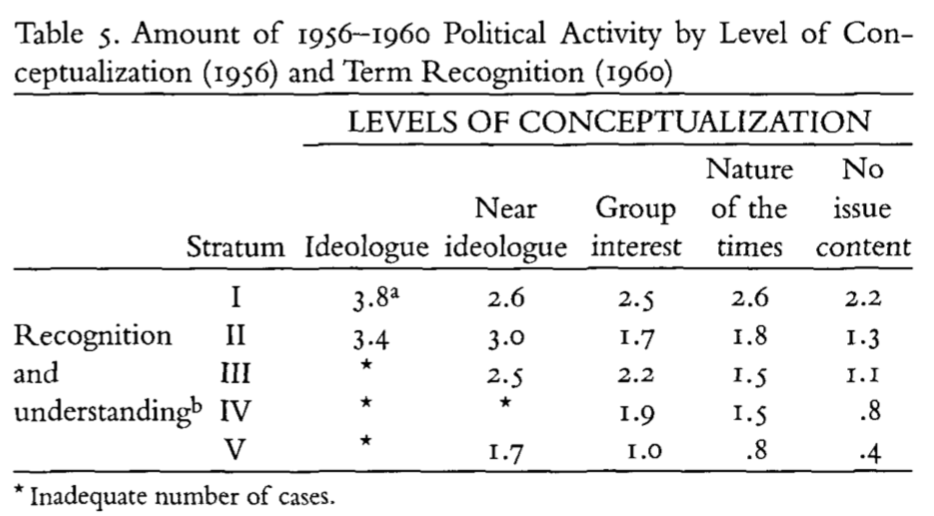

Those with greater levels of recognition are more active in politics (Table 5)

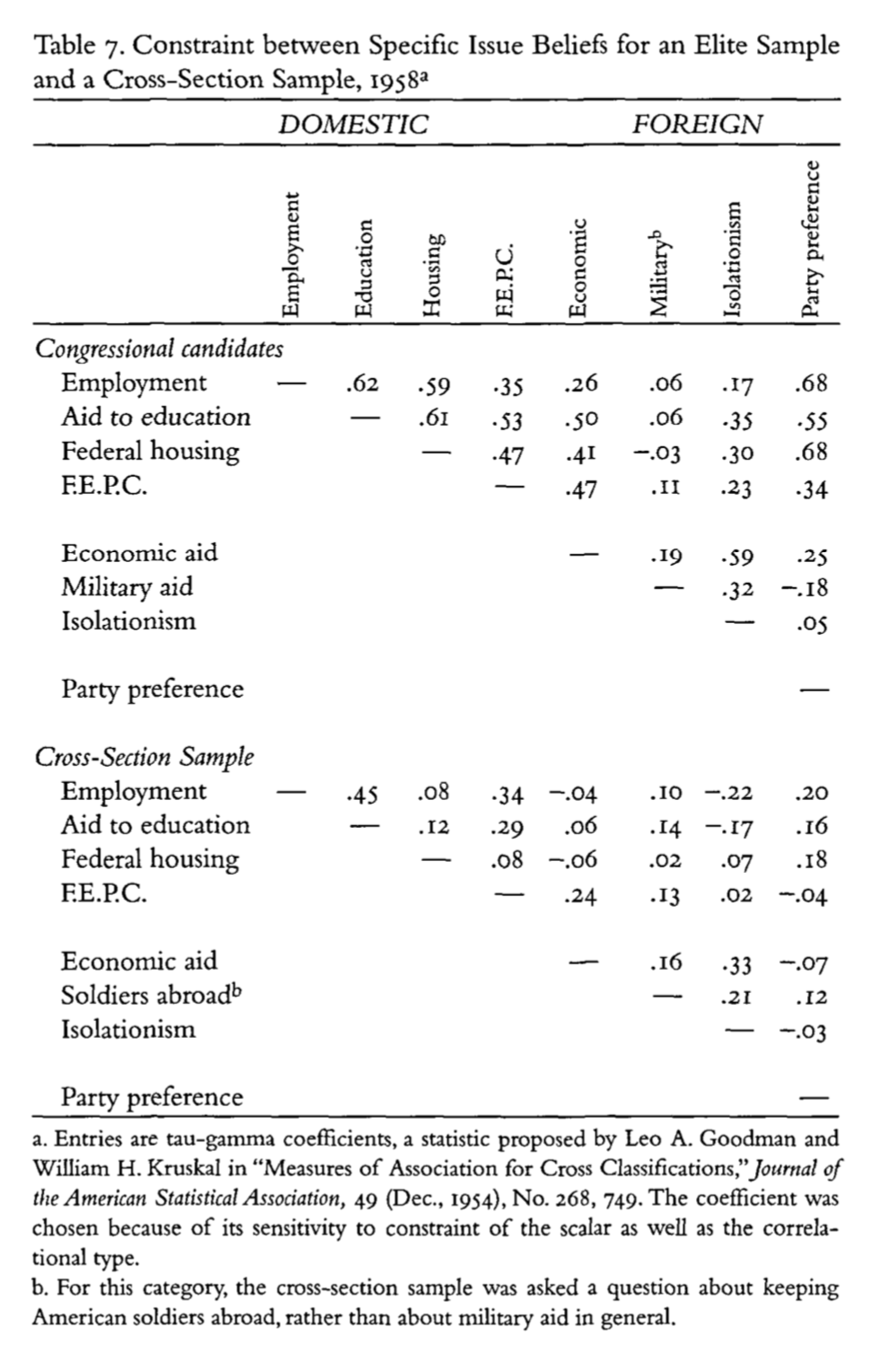

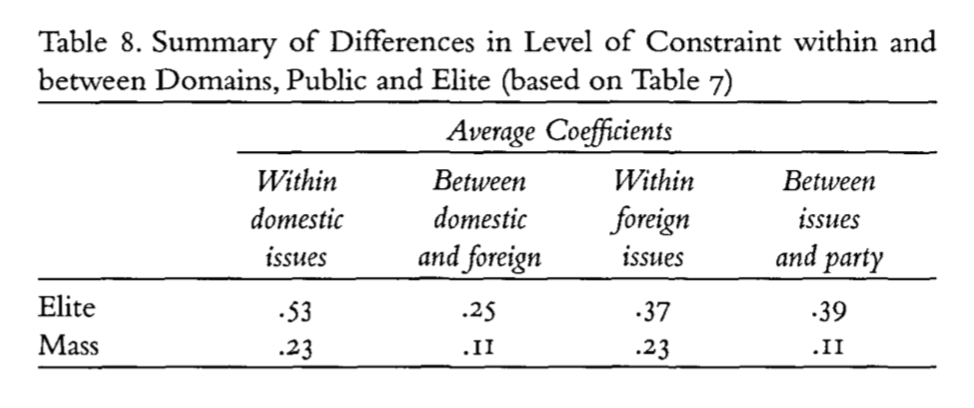

Constraints among idea-elements

Next Converse considers the degree of constraint (measured by correlations) between issue elements in an elite (congressional candidates) compared to the mass public

People who took a liberal position on one issue did not necessarily take a liberal position on another

The correlations between elites’ issue attitudes were higher than those in the mass public

Individuals lack a sense of what goes with what

What are these measures

Read the footnotes!

What are these measures

What’s a tau-gamma coefficient?

I believe Converse is using a measure of association for ordinal data that’s built off the cross tabs of variables. The estimate of gamma, \(G\), depends on two quantities:

- \(N_s\), the number of pairs of cases ranked in the same order on both variables (number of concordant pairs),

- \(N_d\), the number of pairs of cases ranked in opposite order on the two variables (number of discordant pairs),

where “ties” (pairs in which the two cases have the same value on either variable) are dropped. Then

\[G=\frac{N_s-N_d}{N_s+N_d}\]
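To make the formula concrete, here is a minimal Python sketch of this statistic (usually called Goodman and Kruskal’s gamma). Whether it matches Converse’s exact coefficient is the tentative reading given above, not something confirmed here, and the two response vectors are made up for illustration.

```python
from itertools import combinations

def goodman_kruskal_gamma(x, y):
    """Gamma = (N_s - N_d) / (N_s + N_d), dropping pairs tied on either variable."""
    concordant = discordant = 0
    for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
        product = (x1 - x2) * (y1 - y2)
        if product > 0:
            concordant += 1      # same ordering on both variables
        elif product < 0:
            discordant += 1      # opposite ordering
        # product == 0 means a tie on at least one variable: the pair is dropped
    if concordant + discordant == 0:
        raise ValueError("gamma is undefined when every pair is tied")
    return (concordant - discordant) / (concordant + discordant)

# Hypothetical ordinal answers (1 = liberal ... 3 = conservative) on two issues
issue_a = [1, 1, 2, 2, 3, 3]
issue_b = [1, 2, 1, 3, 2, 3]

gamma = goodman_kruskal_gamma(issue_a, issue_b)
print(round(gamma, 3))  # 0.556: 7 concordant pairs, 2 discordant pairs
```

Values near 1 mean respondents who are more conservative on one issue tend to be more conservative on the other; values near 0 mean knowing one answer tells you little about the other.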

Note

As long as you have a basic sense of what correlations are trying to tell us you don’t need to know the technical details of a specific estimator

Elites show higher degrees of constraint

Social groupings as central objects in belief systems

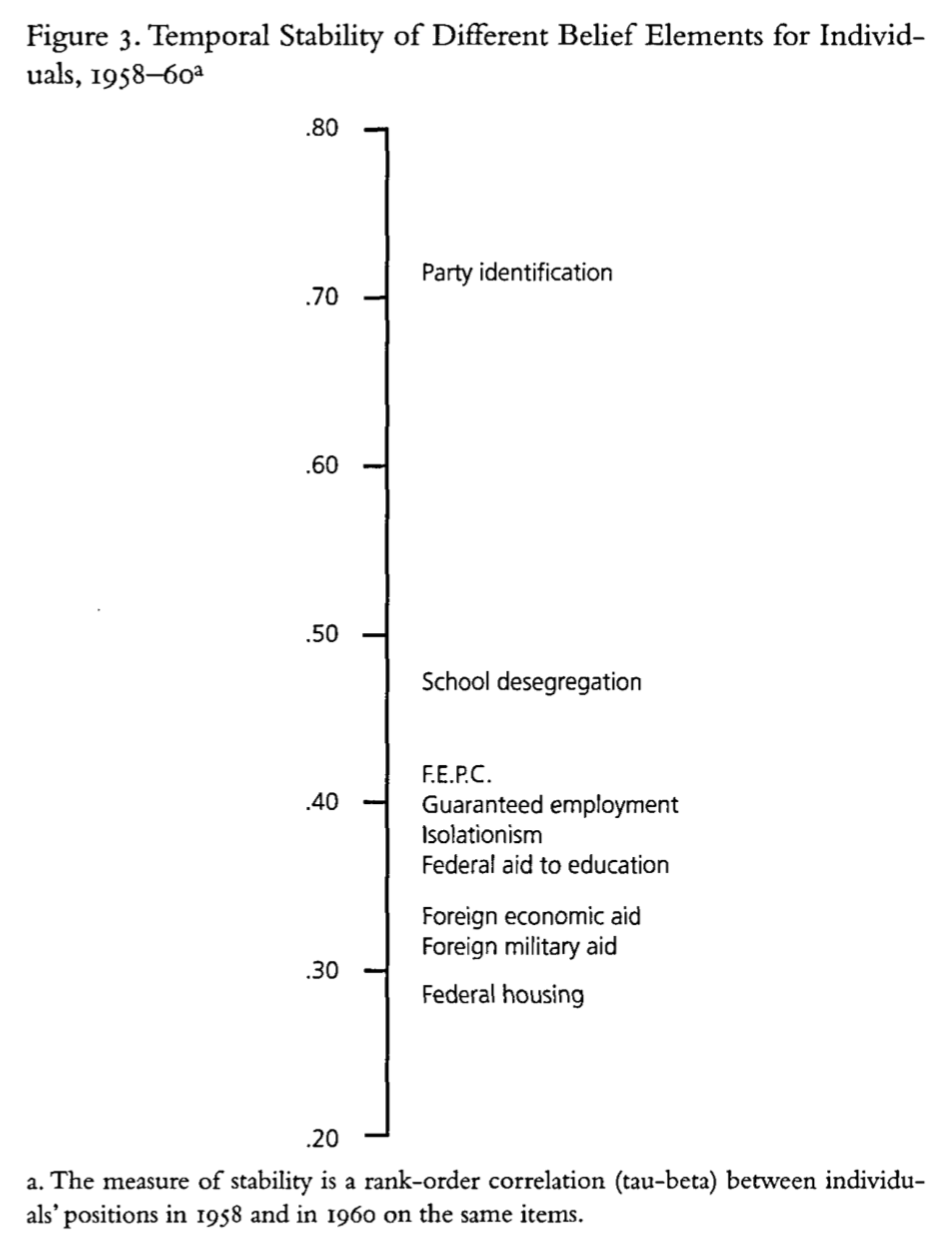

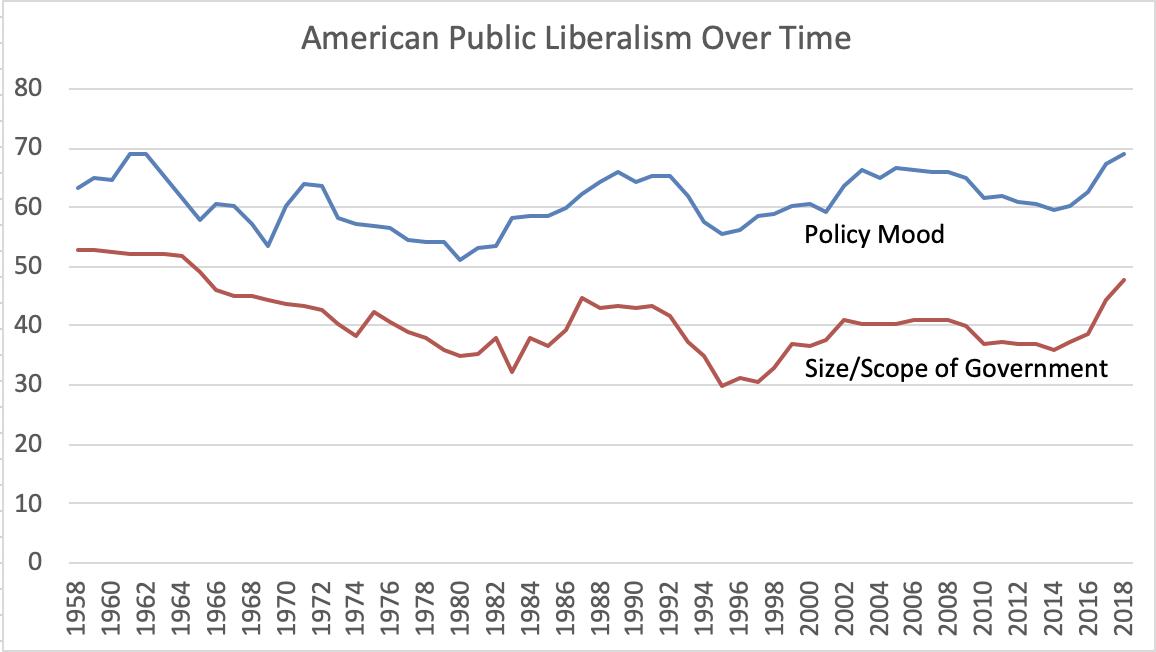

The stability of belief elements over time

Converse considers the stability of responses over time, looking at data from 1958-1960. He finds variation in the strength of temporal correlations across surveys and attributes this to the centrality of groups and the party system for the mass public

The stability of belief elements over time

The final piece of Converse’s argument concerns the stability of a single belief over time (1956, 1958, 1960):

The government should leave things like electrical power and housing for private businessmen to handle

A limiting case: an issue not in the public debate of this period

People appear to answer the question at random

Only a small proportion (~20% fn. 39) held stable attitudes across all three periods

Issue Publics, Summary, Conclusion

Converse wraps up his argument by

Allowing for the possibility of small “issue publics” on more narrow issues

Offering some comments on cross-national and historical comparisons

Summarizing the “continental shelf” that exists between elites and masses.

What do we think?

- What’s the research question

- What’s the theoretical framework

- What’s the empirical design

- What are the results

- What are the conclusions

Summary of Converse (1964)

Converse (1964) remains one of the most influential articles in American Political Behavior

Framed decades of research on questions of ideology and citizen competence

- Why?

In the absence of coherent and stable worldviews, how does democracy function?

Responses to Converse (1964)

- Measurement error (Today)

- Revised definitions of citizen competence (Next Week)

- The Miracle of Aggregation

- Source Cues and Heuristics (Weeks 5 and 6)

- Revised models of Survey Response (Weeks 5 and 6)

- Revised models of what democracy requires (ongoing)

The Miracle of Aggregation

Ansolabehere et al. (2008)

Goals

- What’s the research question

- What’s the theoretical framework

- What’s the empirical design

- What are the results

- What are the conclusions

What’s the research question

- Are issue preferences as unstable and incoherent as Converse suggests, or can accounting for measurement error reveal a more ideologically consistent mass public?

What’s the theoretical framework

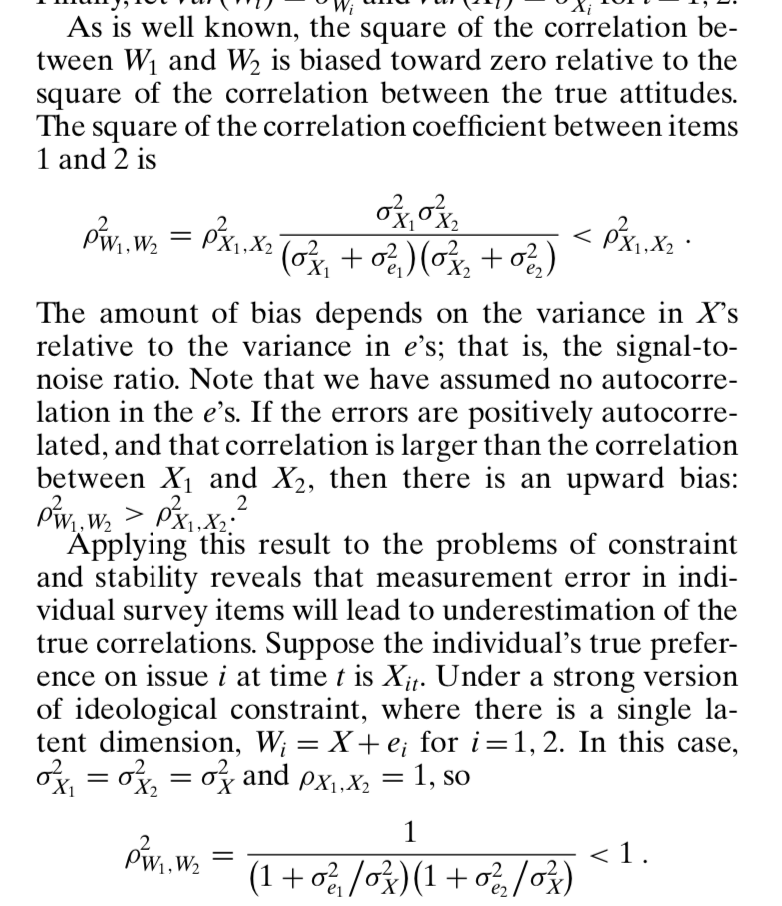

Ansolabehere et al. pick up a critique made by Achen (1975) and others that the lack of constraint is primarily caused by measurement error

They show that measurement error tends to decrease with the number of items one uses

They offer a simple solution: measure concepts with scales constructed from multiple items

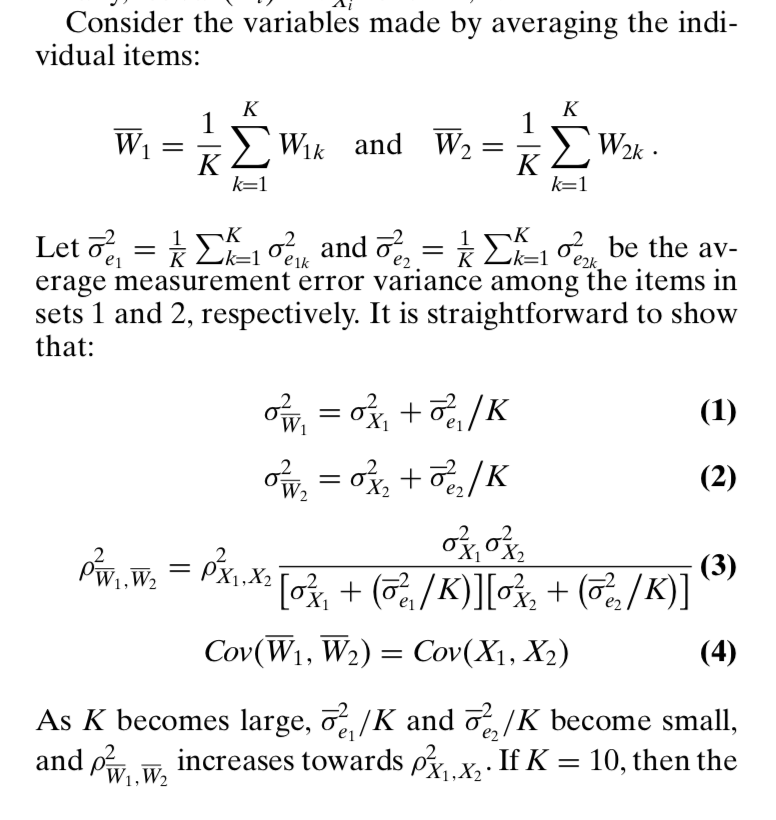

Measurement Error

Classic measurement error models assume what we observe is a measure of some unobserved (latent) truth, plus measurement error that has mean 0 and is uncorrelated with the latent truth, X.

\[\underbrace{W}_{\text{What we observe}} = \overbrace{X}^{\text{The latent truth}} +\underbrace{\epsilon}_{\text{Measurement Error}}\]

Measurement Error

One can show that:

\[\begin{aligned} Var(W) &= Var(X) + Var(\epsilon)\\ &= \sigma^2_X + \sigma^2_\epsilon \end{aligned}\]

And the covariance between our observed and unobserved variables is:

\[Cov(X, W) = \sigma^2_X\]
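For reference, both results follow in a couple of lines from \(W = X + \epsilon\) and the assumption that the error is uncorrelated with the latent truth, \(Cov(X, \epsilon) = 0\). This derivation is spelled out here as a supplement to the slide:

\[\begin{aligned} Var(W) &= Var(X + \epsilon) = Var(X) + Var(\epsilon) + 2\,Cov(X, \epsilon) = \sigma^2_X + \sigma^2_\epsilon\\ Cov(X, W) &= Cov(X, X + \epsilon) = Var(X) + Cov(X, \epsilon) = \sigma^2_X \end{aligned}\]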

Correlations and Reliability

With some assumptions and transformations, we can show that the squared correlation between the observed and latent variables describes the reliability of a measure

Reliability is the proportion of the variance in the observed variable that comes from the latent variable of interest, and not from random error.

This motivates Ansolabehere et al.’s approach

Correlations and Reliability

\[\begin{aligned} \rho^2 &= \left(\frac{Cov(X, W)}{SD(X)SD(W)} \right)^2\\ &=\left(\frac{\sigma_X^2}{\sqrt{\sigma_X^2}\sqrt{\sigma_X^2+\sigma_\epsilon^2}} \right)^2\\ &=\frac{\sigma_X^4}{\sigma_X^2(\sigma_X^2+\sigma_\epsilon^2)}\\ &=\frac{\sigma_X^2}{\sigma_X^2+\sigma_\epsilon^2}\\ &=\frac{\text{True Variance}}{\text{Total Variance}} \end{aligned}\]

Measurement error reduces reliability

Multiple items reduce measurement error

With some caveats

- No autocorrelation

- Error on one item doesn’t predict error on another

- Additional items can’t be too noisy
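A rough simulation shows why averaging helps. If the errors on \(K\) items are independent with the same variance, the error variance of their average is \(\sigma^2_\epsilon / K\), so reliability becomes \(\sigma^2_X / (\sigma^2_X + \sigma^2_\epsilon / K)\). The sketch below checks this with made-up values (latent SD of 1, error SD of 1.5); it is an illustration, not Ansolabehere et al.’s procedure.

```python
import random

random.seed(1140)

# Hypothetical values chosen for illustration only.
N_PEOPLE = 20_000
SD_TRUE = 1.0    # spread of the latent attitude X
SD_ERROR = 1.5   # spread of the measurement error on each item

def pearson(xs, ys):
    """Pearson correlation computed from scratch (standard library only)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

latent = [random.gauss(0, SD_TRUE) for _ in range(N_PEOPLE)]

def simulated_reliability(k):
    """Squared correlation between the latent truth and a k-item average."""
    # Each item is X plus independent error, so the average is X plus mean error.
    scale = [x + sum(random.gauss(0, SD_ERROR) for _ in range(k)) / k
             for x in latent]
    return pearson(latent, scale) ** 2

def theoretical_reliability(k):
    return SD_TRUE ** 2 / (SD_TRUE ** 2 + SD_ERROR ** 2 / k)

for k in (1, 5, 20):
    print(k, round(simulated_reliability(k), 2), round(theoretical_reliability(k), 2))
```

The gains depend on the caveats listed above: if errors are correlated across items, the error variance of the average does not shrink at the rate \(1/K\).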

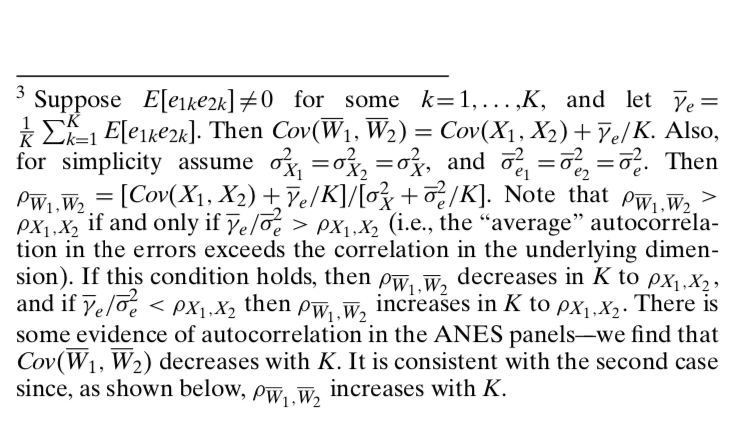

What’s the empirical design

Panel data from the NES

Principal component factor analysis to scale items together

- Basically averaging (weighting by the variance each item contributes)

Correlational analysis within items and across time and also within surveys

Simulations

Sub-group analysis by political sophistication

Regression analysis of issue voting

Principal Components Analysis

Find dimensions that explain the maximum variance with the minimum error

A useful tool for data reduction

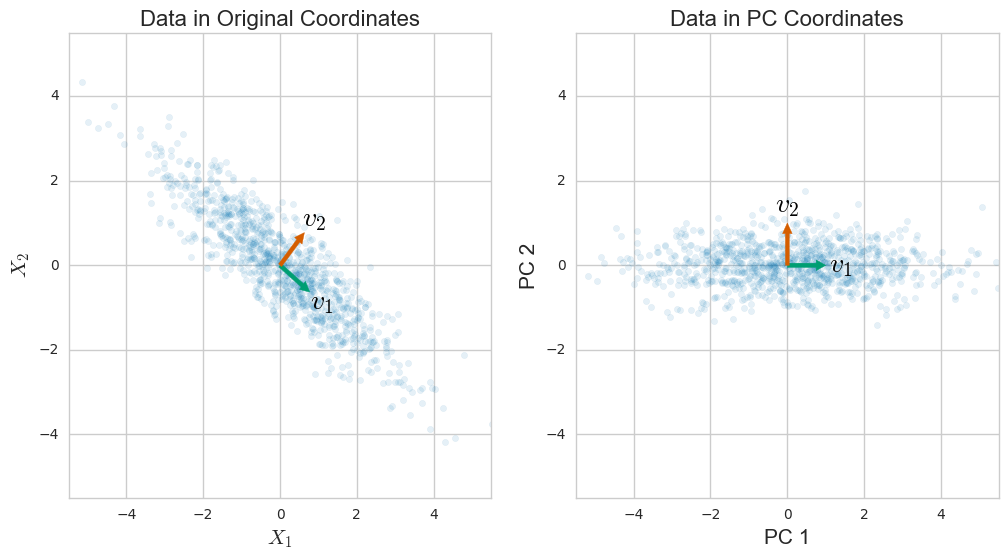

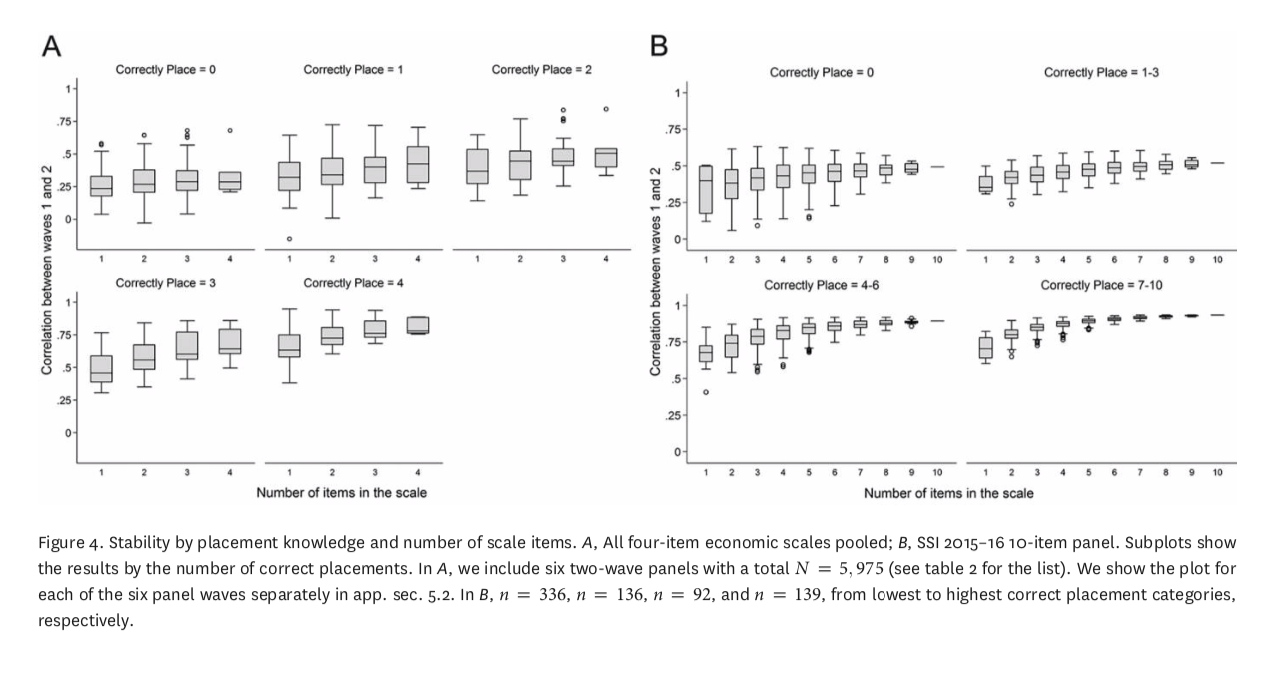

What are the results

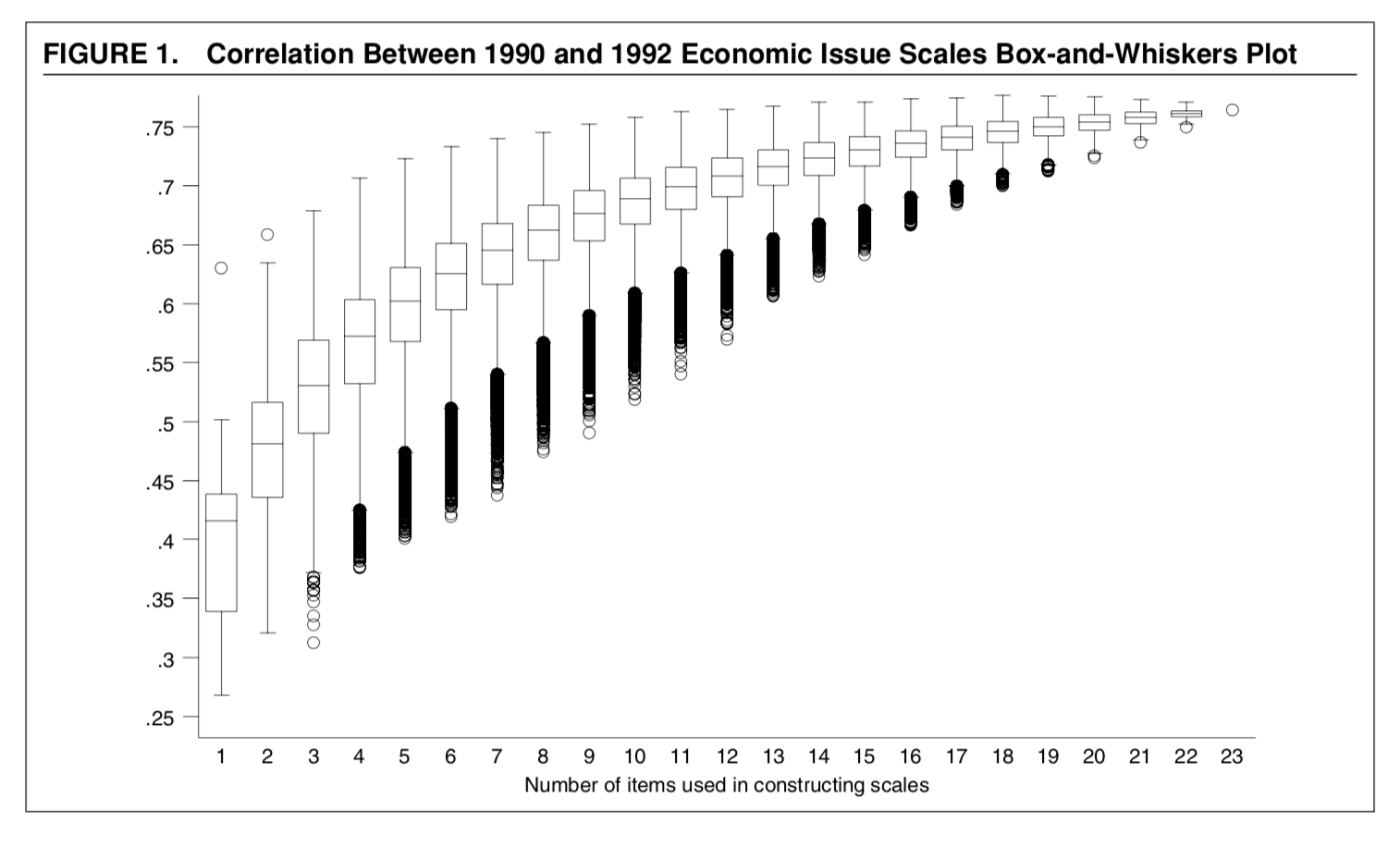

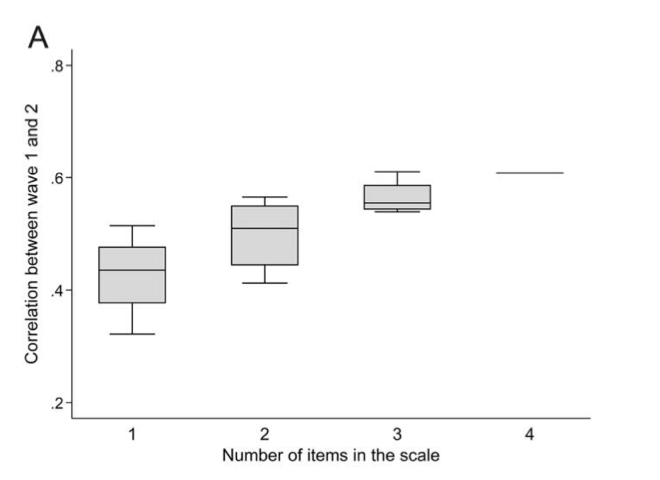

- The over time reliability of scales increases with the number of items used

- Table 1, Figure 1

- The correlations are higher between scales within surveys

- Table 2, Figure 2

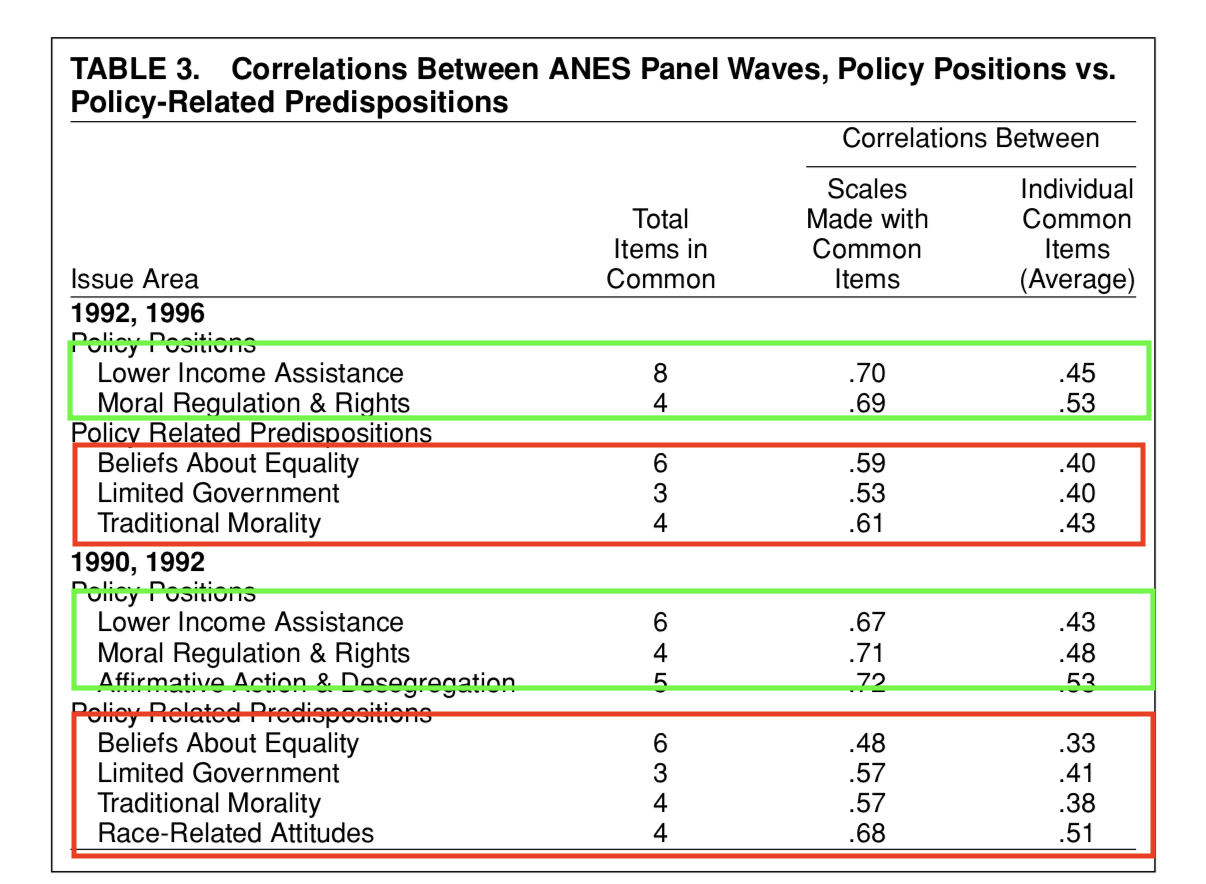

- Issue scales are more stable than policy predispositions

- Table 3

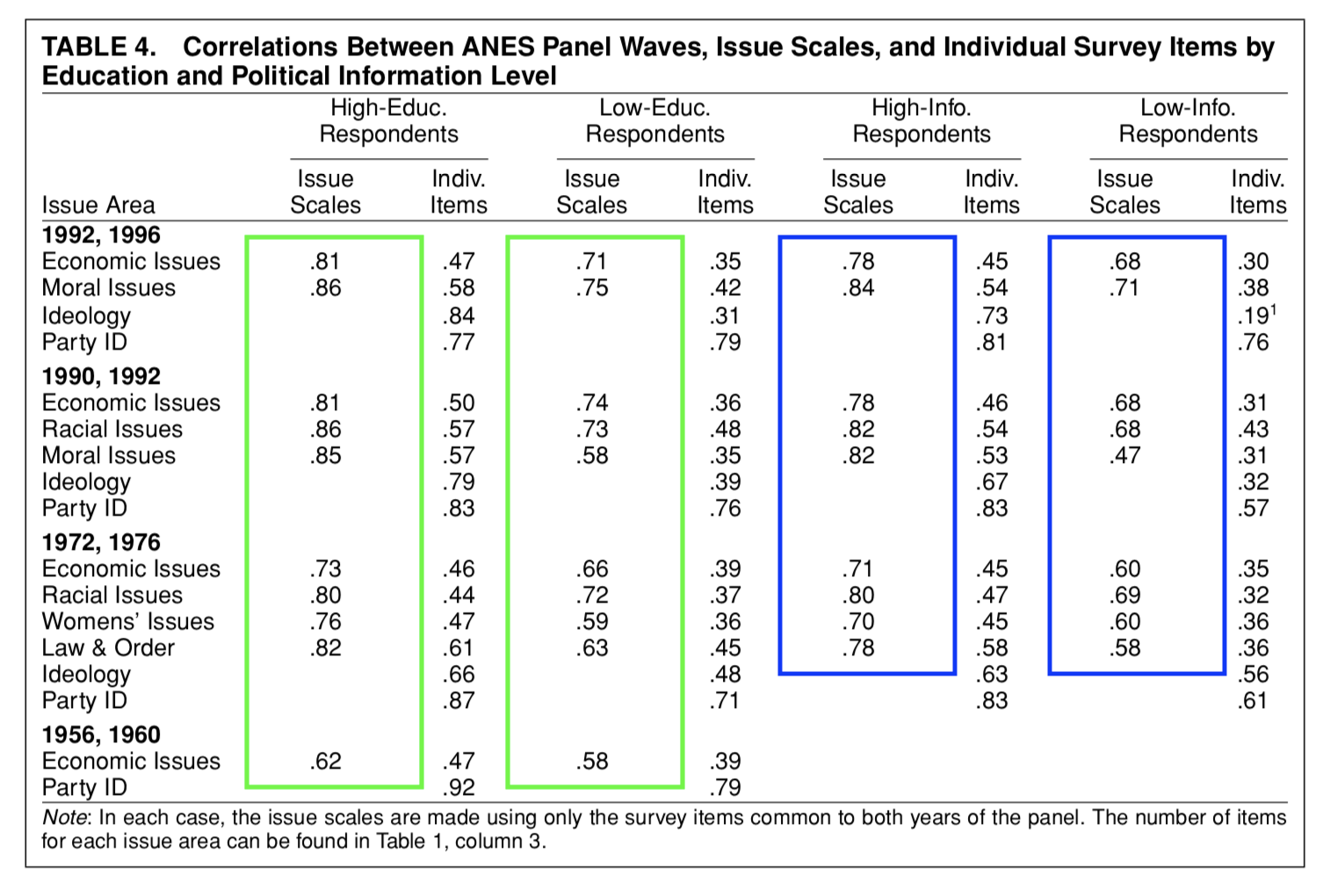

- Little variation across political sophistication

- Table 4

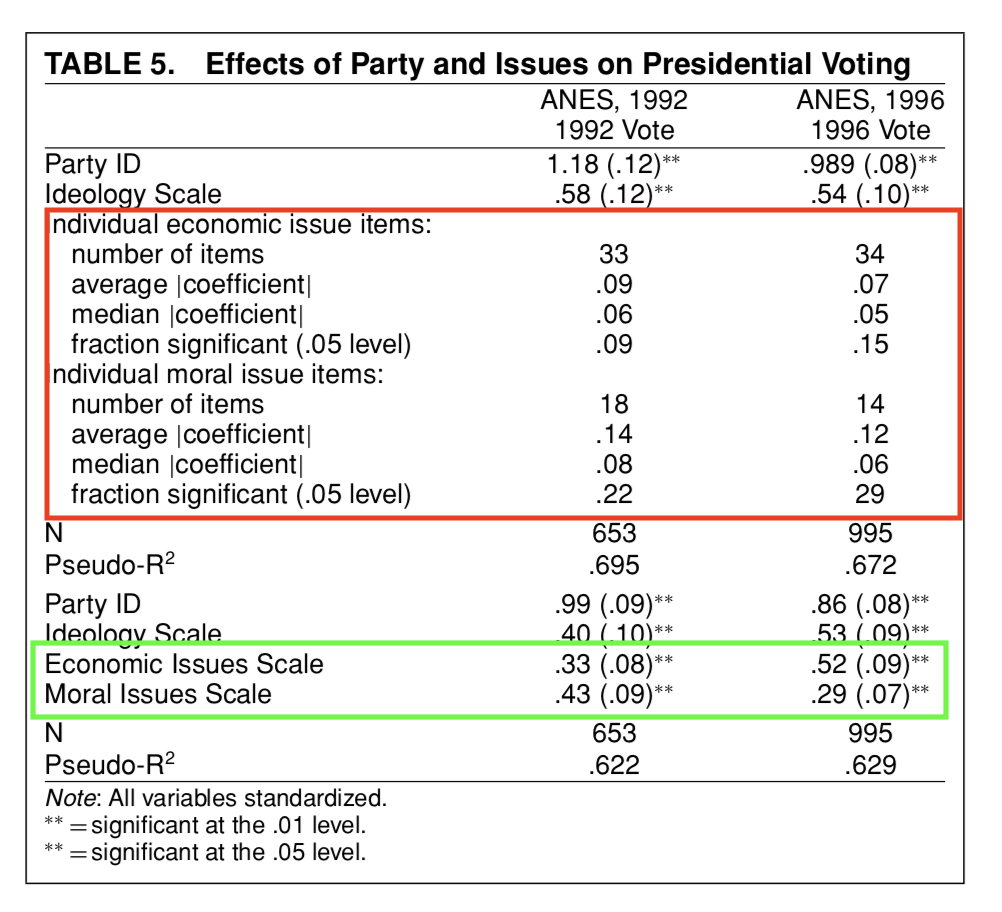

- Issue scales predict vote choice

- Table 5

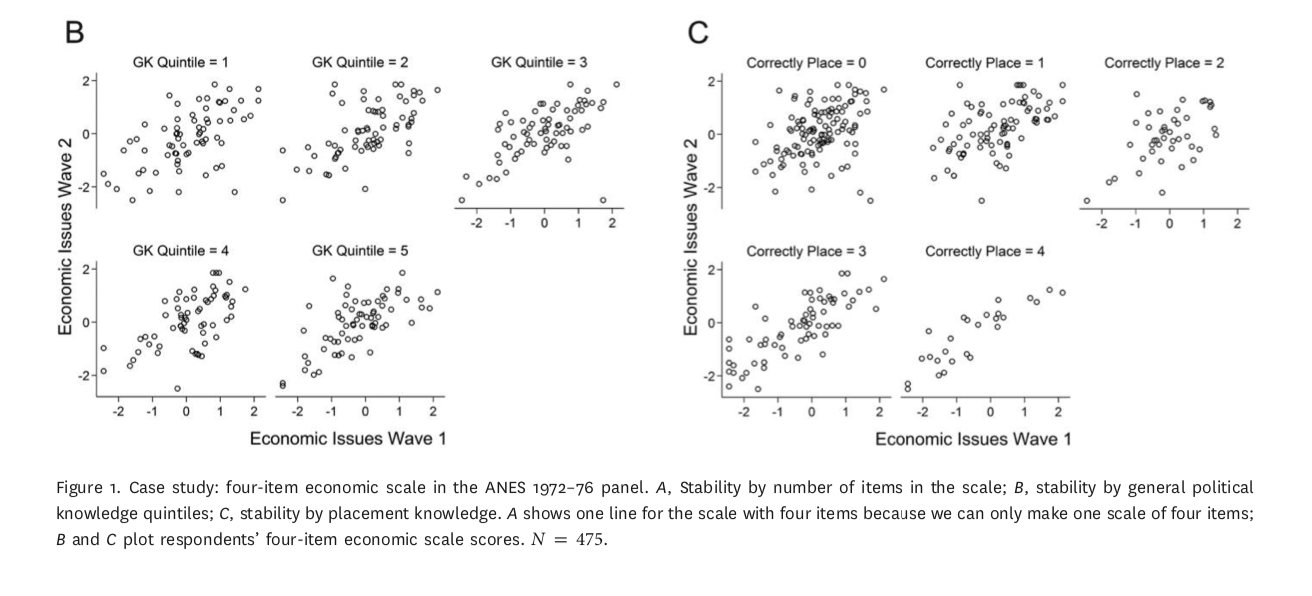

The over time reliability of scales increases with the number of items used

The correlations are higher between scales within surveys

Issue scales are more stable than policy predispositions

Little variation across political sophistication

This is in contrast to what Converse’s “Black and White” model would predict and consistent with general arguments about measurement error

The entry point for Freeder et al.’s critique

Issue scales predict vote choice

What are the conclusions

Democracy is saved?

It’s the measures, not the public, that are the problem

Use multiple measures and scale them together to study the concepts we’re interested in

The importance of political sophistication may be overstated

Break

Freeder et al. (2019)

Goals

- What’s the research question

- What’s the theoretical framework

- What’s the empirical design

- What are the results

- What are the conclusions

What’s the research question

- Is the lack of ideological constraint really just a function of measurement error, or is it a product of citizens’ ignorance of “what goes with what”?

What’s the theoretical framework

- Measurement error critiques of Converse like Ansolabehere et al. can’t distinguish between error due to:

- The vagaries of the question (classical measurement error)

- The vagaries of the person (lack of knowledge)

- The vagaries of survey response (more on this later)

\[\overbrace{y}^{\text{Observed}} = \underbrace{\hat{y}}_{\text{True}} + \overbrace{u_i}^{\text{Error}} + \underbrace{v_i}_{\text{Survey Response}} + \overbrace{p_iw_i}^{WGWW}\]

- Averaging reduces error from all of these sources

What’s the theoretical framework

- Knowledge of what goes with what (WGWW), measured by awareness of which party is more liberal or conservative, explains lack of constraint, even after accounting for measurement error

What’s the empirical design

Panel data with multiple issue items, measures of general knowledge/sophistication, and specific measures of WGWW proxied by candidate and party placements

Correlations and scale properties

Sub-group analysis

Regression analysis

Simulations

What are the results

More items reduce measurement error

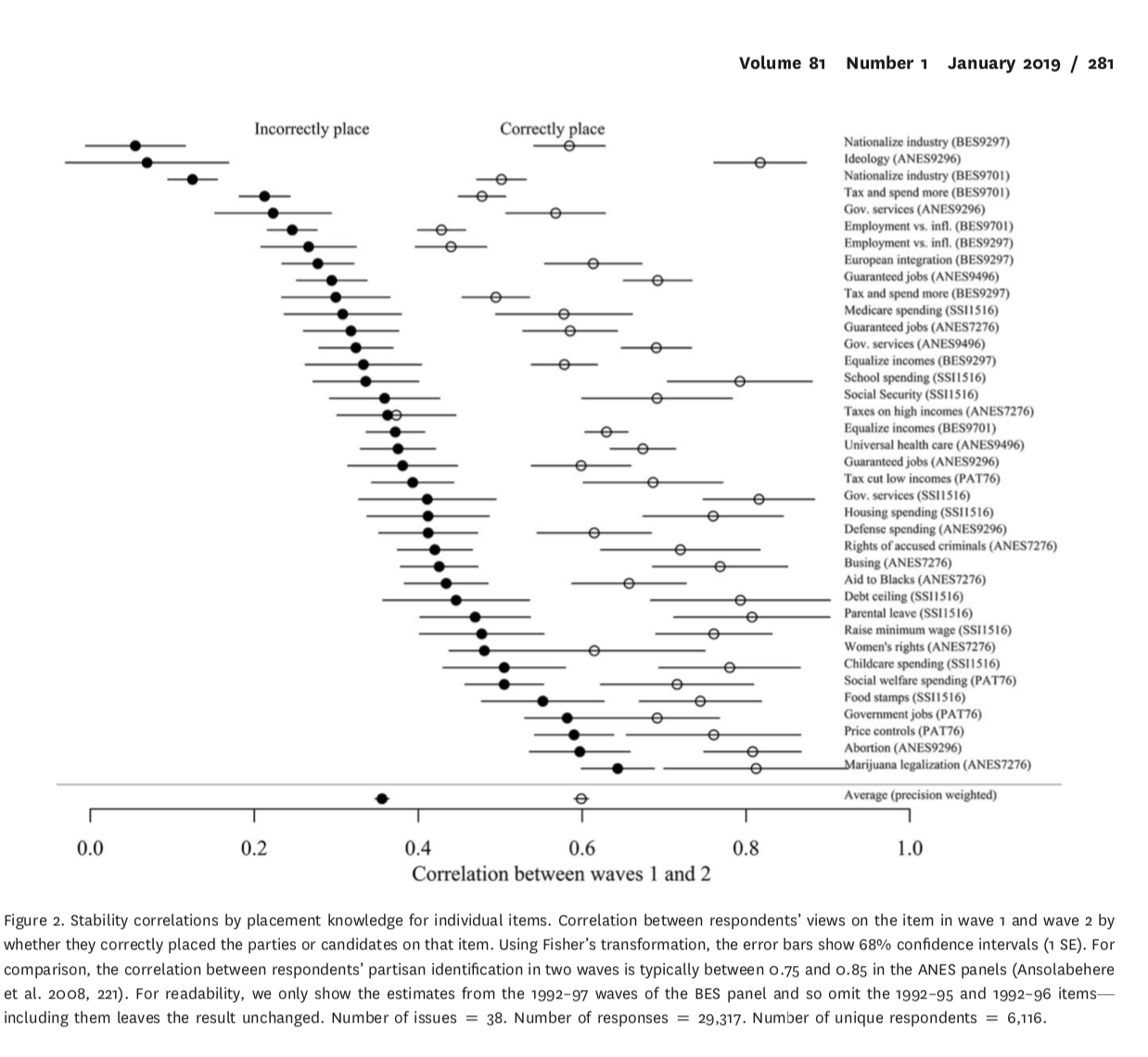

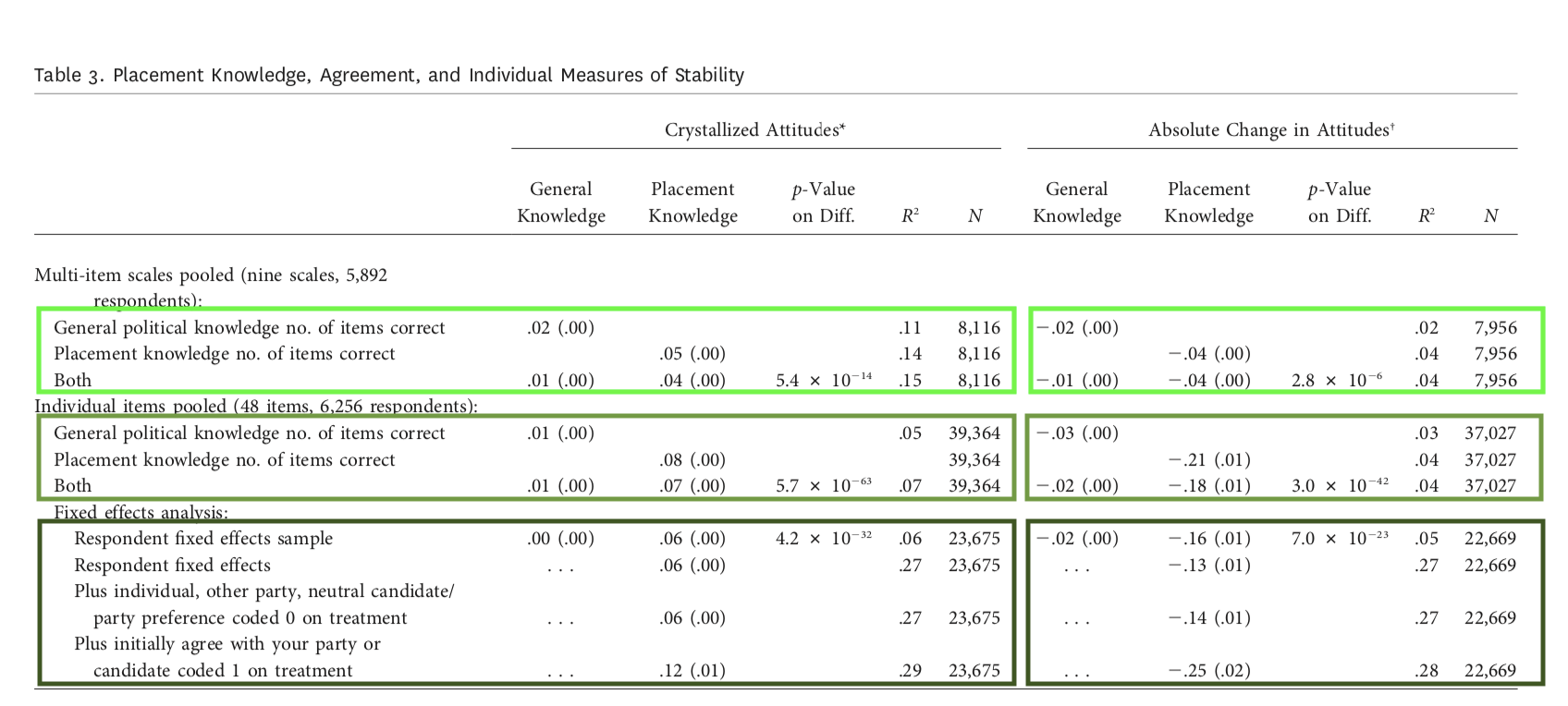

Constraint doesn’t vary with general knowledge, but does vary with WGWW

True of scales and individual items

WGWW predicts attitude stability

But only for people who agree with their party’s positions

More items won’t fix the problem

What are the conclusions

Correcting for measurement error alone won’t save democracy

Multiple items are still useful

- But what does the first principal component of a multi-item scale really mean?

Where does knowledge of what goes with what come from?

- Are parties the only source of constraint?

Next week

Overview

Tuesday:

Recap discussion of Ideology and Issues

General overview of political knowledge

Discussion of Jerit, Barabas and Bolsen (2006)

Thursday:

Alternative conceptions of political knowledge (Weaver, Prowse and Piston 2019)

Misinformation (Jerit and Zhao 2020)

Begin discussion of A1
