POLS 1140

Political Knowledge and Information

Updated Apr 13, 2026

Tuesday

Overview

Monday:

Groups assigned for Group Project

Recap discussion of Ideology and Issues

General overview of political knowledge

Thursday:

Finish Jerit, Barabas and Bolsen (2006)

Alternative conceptions of political knowledge: Weaver, Prowse and Piston (2019)

Misinformation (Jerit and Zhao 2020)

Readings for Political Cognition

By Thursday:

Group Projects

Assignment 1:

- Today:
  - Get group contact info
  - Brainstorm and share ideas
- By next Tuesday:
  - 1-page memo:
    - General topic
    - Elevator pitch
    - Outcomes of interest
    - Key predictors

Review


What have we learned so far?

Converse (1964)

Ansolabehere, Rodden, and Snyder (2008)

Freeder, Lenz and Turney (2019)

Converse (1964)

Most citizens lack stable, coherent ideological belief systems

Shallow understanding of liberal-conservative labels

Inconsistent beliefs across issues, particularly among the mass public

Centrality of groups and possibility of issue publics

Ansolabehere, Rodden, and Snyder (2008)

A critique of Converse (1964) suggesting that the apparent instability and incoherence of issue attitudes are a product of measurement error

Using multiple items to measure latent concepts:

- Reduces measurement error

- Increases stability and predictive validity of measures

Freeder, Lenz, and Turney (2019)

A critique of Ansolabehere et al.’s critique, suggesting that constructing multi-item scales reduces multiple sources of error and instability, only some of which are classic measurement error

Returns to Converse’s concept of “what goes with what,” using whether respondents correctly place candidates and parties as a proxy for this form of knowledge.

Show that knowledge of what goes with what is the driving force of ideological consistency and stability

- But only for folks that agree with their party/candidate’s position.

First Reflection Papers Due February 24

Due February 24th

May be on any topic we’ve covered so far

- Ideology

- Political Knowledge

- Political Cognition

- Democratic Choice

Additional Readings

Some additional suggested papers

- Mondak (1993) “Public opinion and heuristic processing of source cues”

- Kam (2005) “Who Toes the Party Line? Cues, Values, and Individual Differences”

- Taber and Lodge (2006) “Motivated Skepticism in the Evaluation of Political Beliefs”

- Bartels (2005) “Homer Gets a Tax Cut: Inequality and Public Policy in the American Mind”

- Fowler and Hall (2018) “Do Shark Attacks Influence Presidential Elections? Reassessing a Prominent Finding on Voter Competence”

- Busby et al. (2016) “The Political Relevance of Irrelevant Events”

- Miller et al. (2016) “Conspiracy Endorsement as Motivated Reasoning: The Moderating Roles of Political Knowledge and Trust”

Concepts you should be applying

- Measures of Association

- Measures of Uncertainty

- Models of Causation

Questions you should be asking

- What’s the research question

- What’s the theoretical framework

- What’s the empirical design

- What are the results

- What are the conclusions

What’s the research question

What is this paper about?

If you had to summarize this paper in one to two sentences, how would you do it?

What’s the theoretical framework

What’s the argument?

What are the key concepts?

Why do we care?

What’s the contribution?

What are the expectations?

What’s the empirical design

How will the paper convince you of its claims?

Is it an experimental or observational design?

What data does the paper use?

What methods does the paper apply?

What are the results

What evidence does the paper provide to support its claims?

What specific tables and figures support the paper’s claims?

Note

In your papers, I’m particularly interested in your ability to take a theoretical claim and map it onto an empirical result. Give me page numbers, tables, estimates.

What are the conclusions

What have we learned?

What do we still need to know?

What would we do differently?

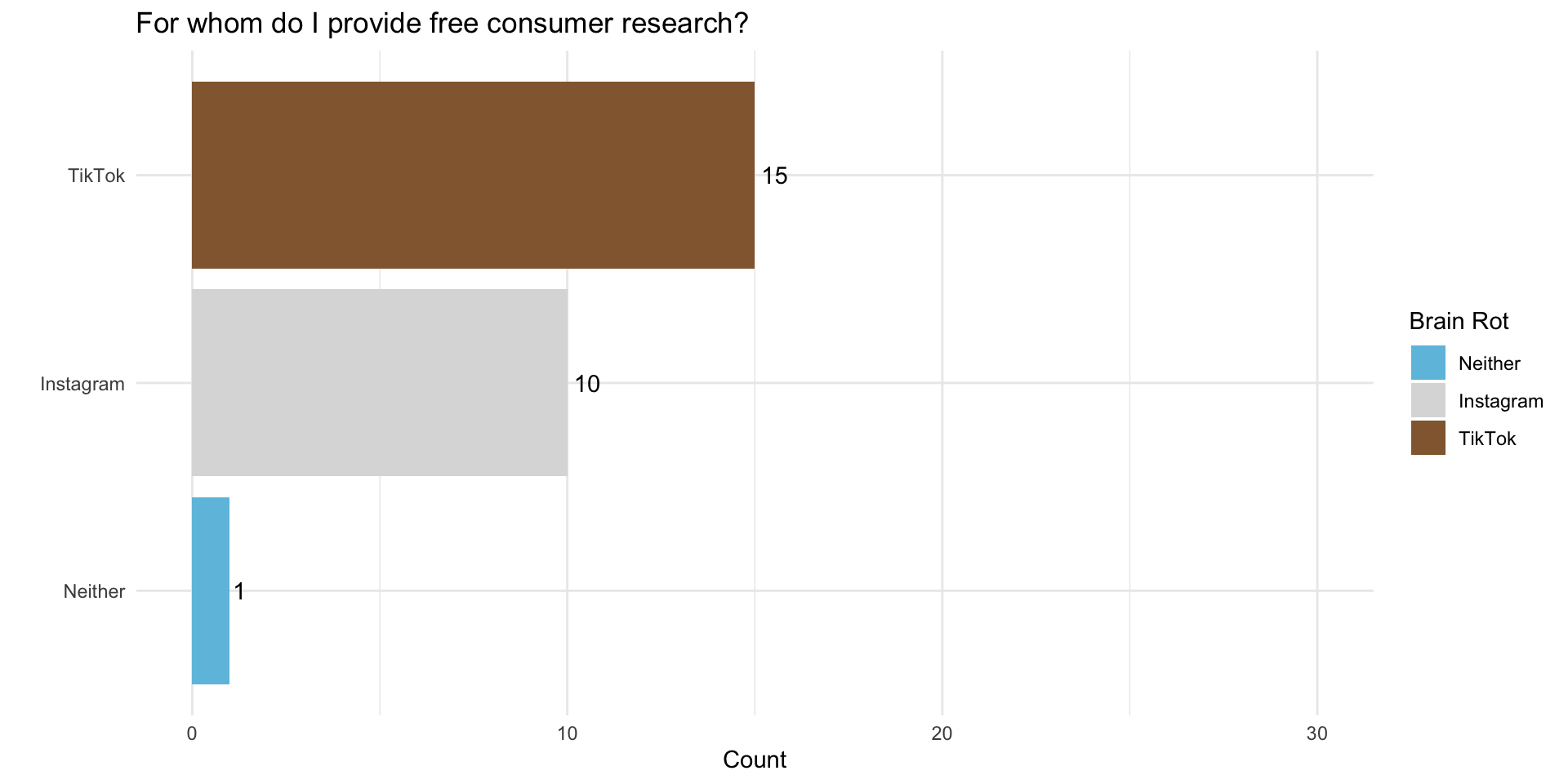

Instagram or TikTok?

Chat am I cooked?

Political Knowledge

What should citizens know about politics?

Take a few moments to write down your thoughts:

What kinds of knowledge do citizens in a democracy need to possess?

Do citizens generally possess this knowledge?

Is this a problem?

Luskin (1987)

- Luskin defines “political belief systems” in terms of their:
  - size (number of cognitions)
  - range (diversity)
  - organization (constraint)
- Delli Carpini and Keeter (1993) argue that Converse’s focus on constraint is “putting the cart before the dead horse.”

How Should We Define Political Knowledge

- Delli Carpini and Keeter (1996) define political knowledge as: “the range of factual information about politics that is stored in long-term memory.”

How Should We Measure Political Knowledge

- Open-ended questions can be problematic

- Instead, scholars have developed a standard set of questions to measure this concept

What do you know!

Do you happen to know what job or office is now held by J.D. Vance?

Whose responsibility is it to determine if a law is constitutional or not … is it the president, the Congress, or the Supreme Court?

How much of a majority is required for the US Senate and House to override a presidential veto?

Do you happen to know which party has the most members in the House of Representatives in Washington?

Would you say that one of the parties is more conservative than the other at the national level? Which party is more conservative?

What do you know!

Do you happen to know what job or office is now held by J.D. Vance? Vice President

Whose responsibility is it to determine if a law is constitutional or not … is it the president, the Congress, or the Supreme Court? Supreme Court

How much of a majority is required for the US Senate and House to override a presidential veto? Two-thirds

Do you happen to know which party has the most members in the House of Representatives in Washington? Republicans

Would you say that one of the parties is more conservative than the other at the national level? Which party is more conservative? Republican Party

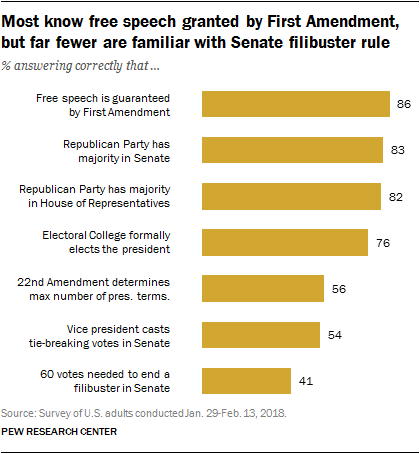

What does the public know?

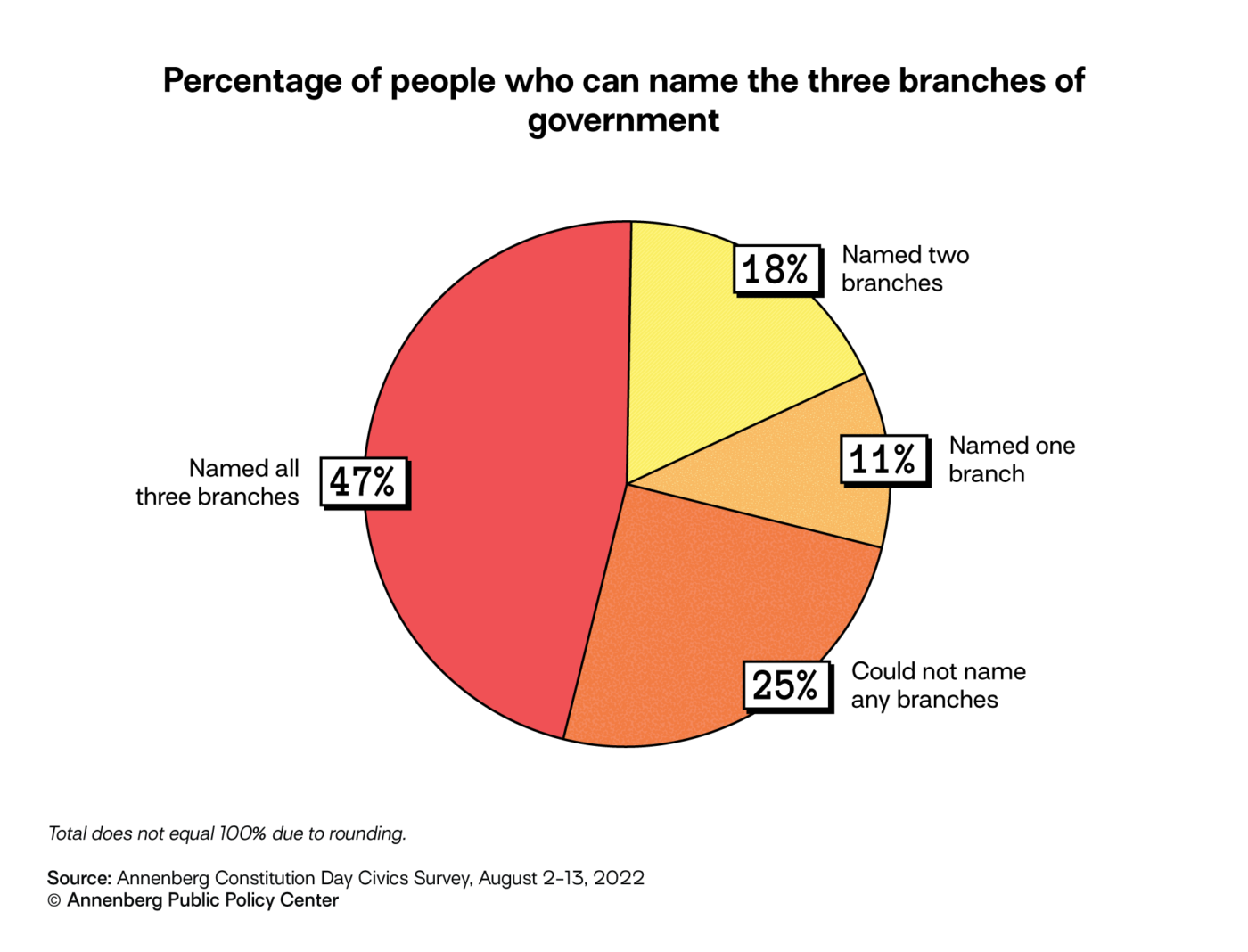

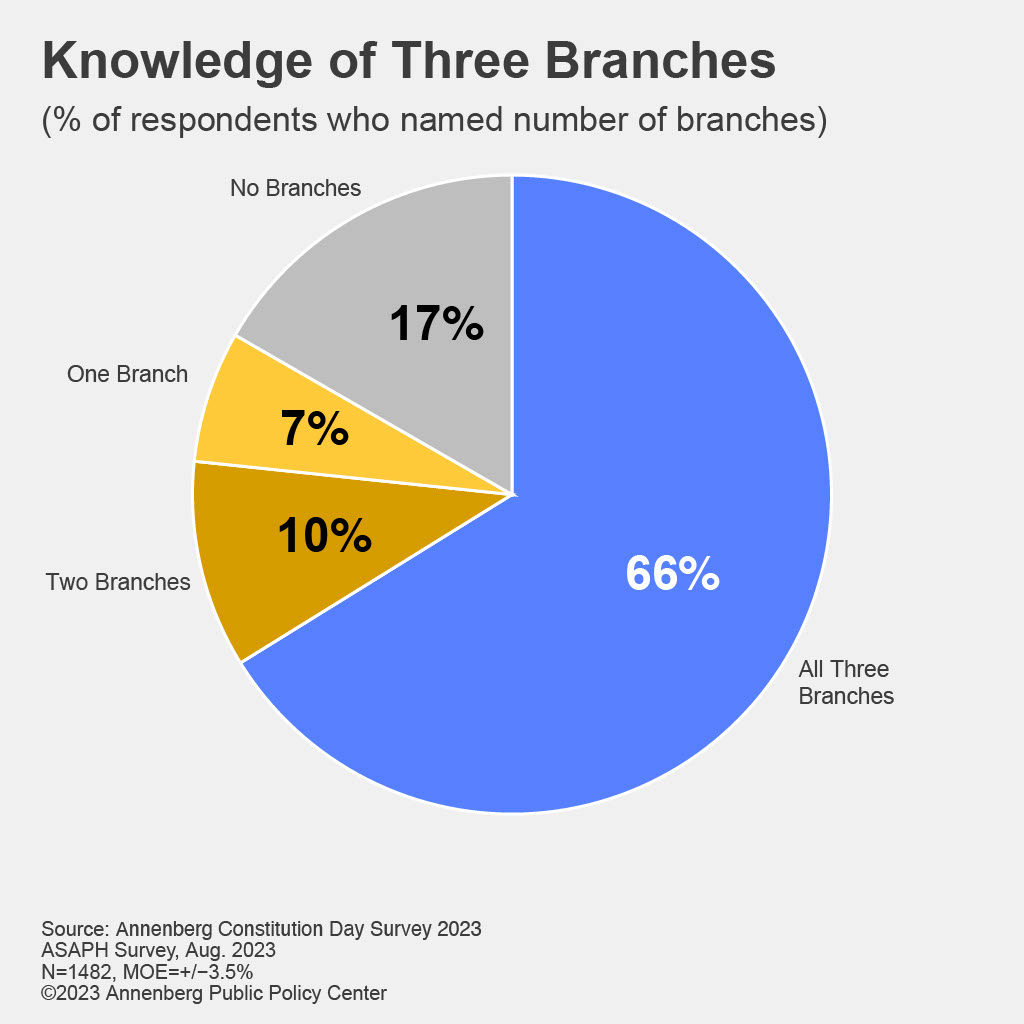

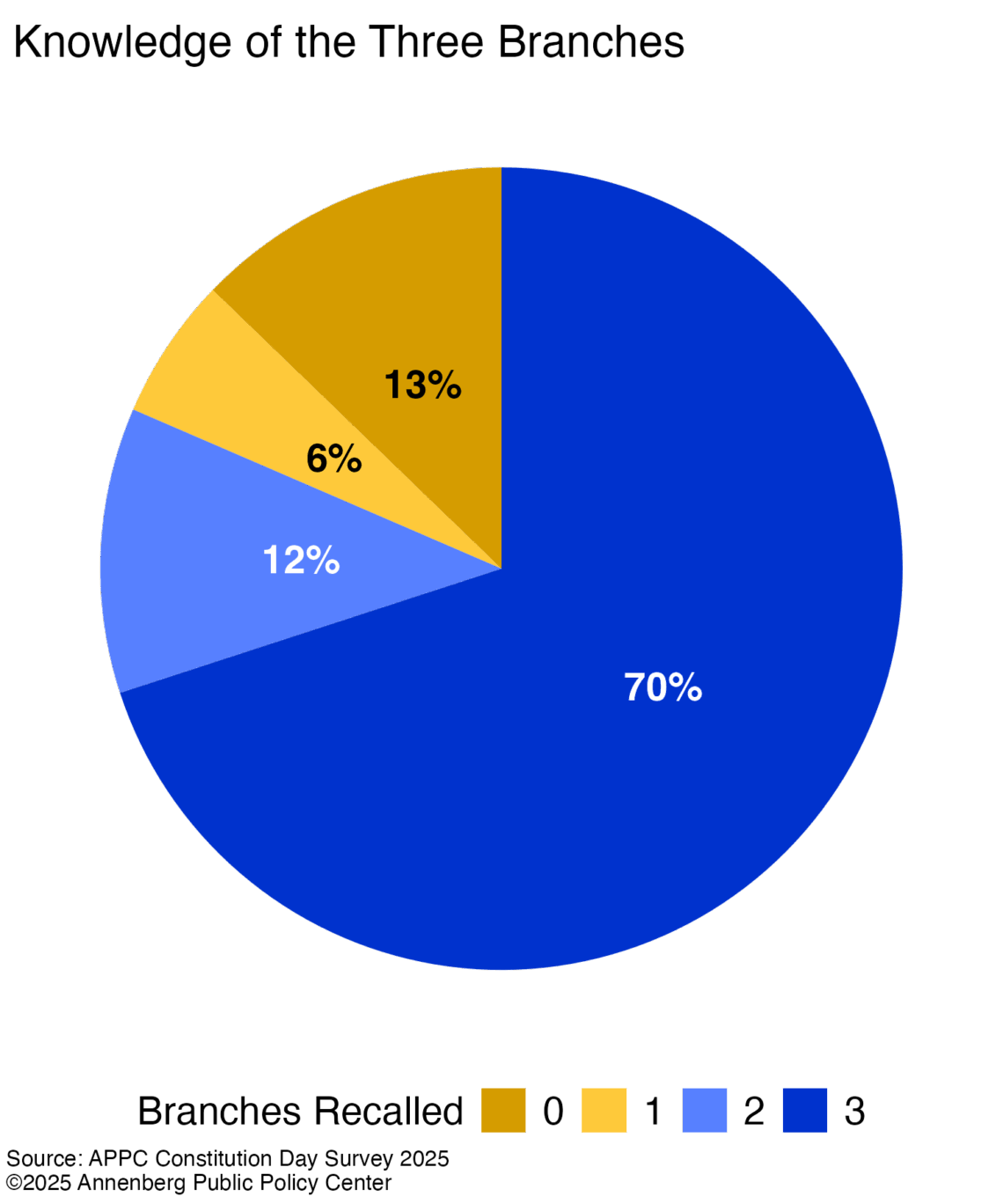

Annenberg Civics Knowledge Survey

Can you name all three branches of government?

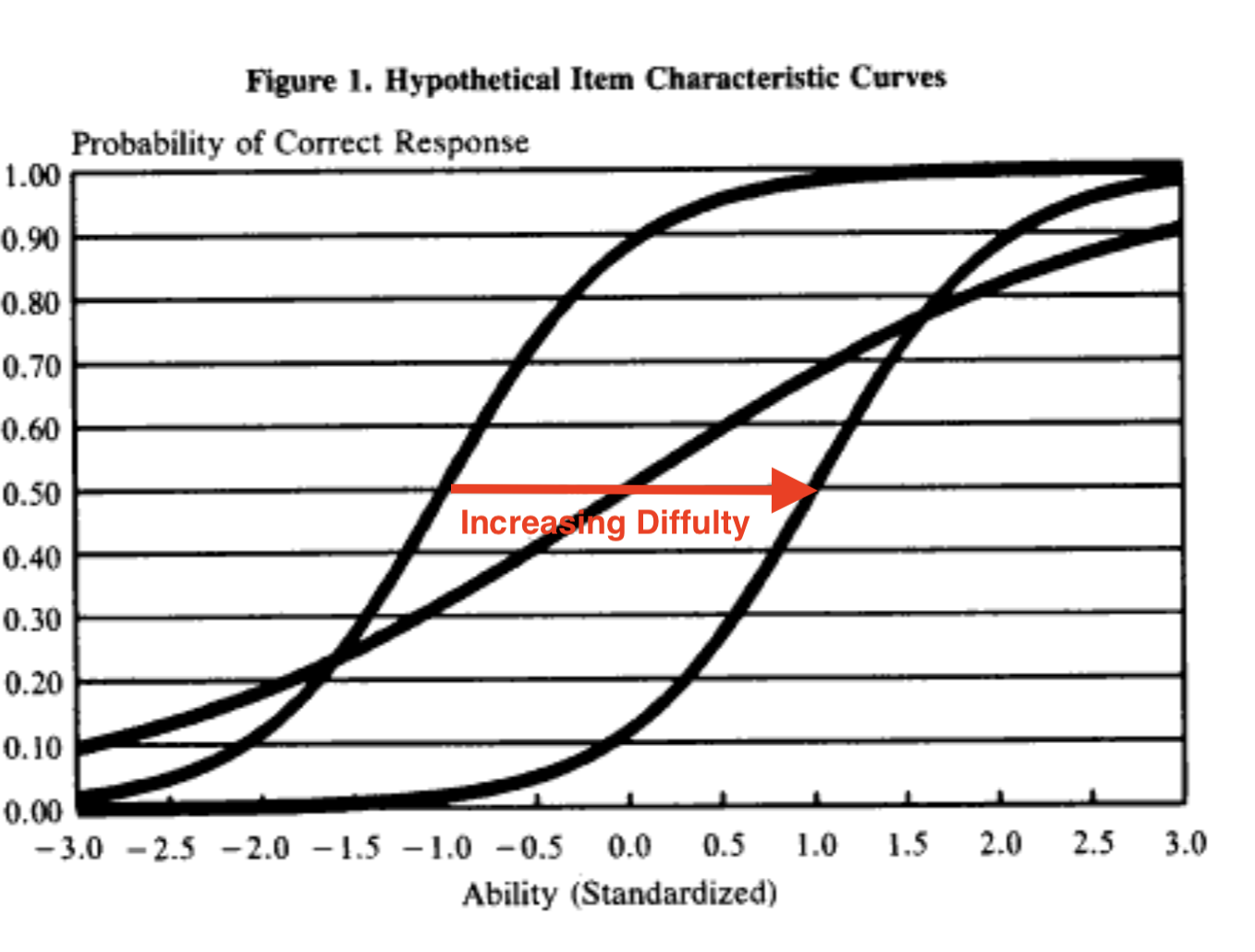

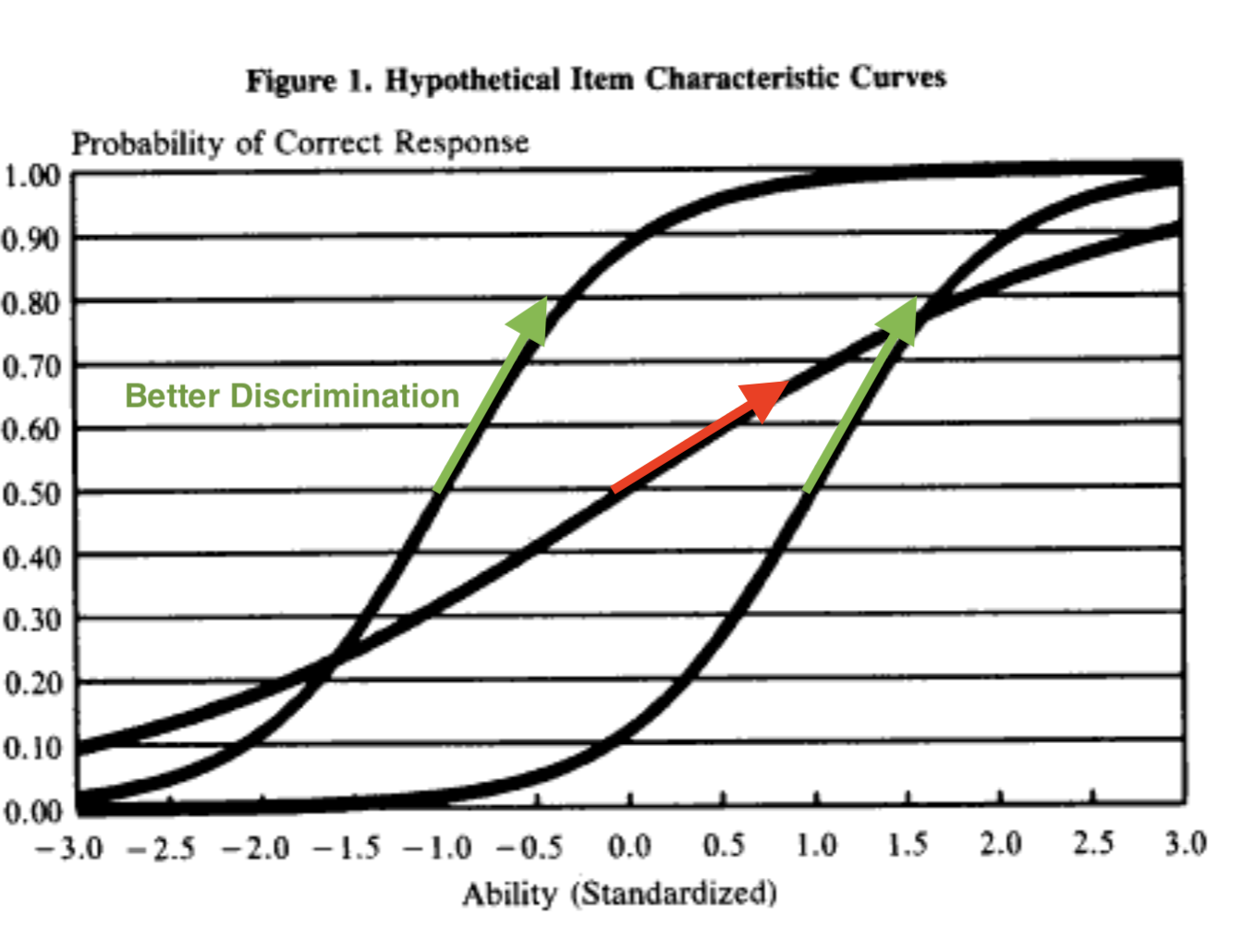

What Makes a Good Measure

- These standard measures of political knowledge have some nice properties:
  - They “scale” together well
  - They discriminate between levels of political knowledge

What Makes a Good Measure? Mix Difficulties

What Makes a Good Measure? High “Discrimination”
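The properties on these slides can be made concrete with a small sketch on an invented 0/1 answer matrix (not ANES data). It computes difficulty as the share answering each item correctly, discrimination as the correlation between each item and the sum of the other items, and Cronbach's alpha as one common summary of how well items scale together. These are classical test theory indices chosen for illustration; item response theory models instead estimate difficulty and discrimination as model parameters.

```r
# Invented 0/1 answers: rows = respondents, columns = knowledge items
answers <- matrix(
  c(1, 1, 1, 1,
    1, 1, 1, 0,
    1, 1, 0, 0,
    1, 0, 0, 0,
    1, 1, 0, 0,
    0, 0, 0, 0),
  ncol = 4, byrow = TRUE,
  dimnames = list(NULL, c("item_a", "item_b", "item_c", "item_d"))
)

# Difficulty: share answering each item correctly (lower = harder)
difficulty <- colMeans(answers)
difficulty  # roughly 0.83, 0.67, 0.33, 0.17: a mix of easy and hard items

# Discrimination: correlation of each item with the sum of the *other* items
discrimination <- sapply(seq_len(ncol(answers)), function(j) {
  rest <- rowSums(answers[, -j, drop = FALSE])
  cor(answers[, j], rest)
})
names(discrimination) <- colnames(answers)

# Scaling together: Cronbach's alpha from item variances and total-score variance
k <- ncol(answers)
alpha <- (k / (k - 1)) * (1 - sum(apply(answers, 2, var)) / var(rowSums(answers)))
alpha  # about 0.76
```

Every item here correlates positively with the rest of the scale, which is what "high discrimination" looks like in this simple index.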

What Makes a Good Measure

- These standard measures of political knowledge have some nice properties:
  - They “scale” together well
  - They discriminate between levels of political knowledge
  - They predict attitudes and behavior
- But what are these scales really measuring?
  - Why would we expect an increase in this measure of PK to increase voting?

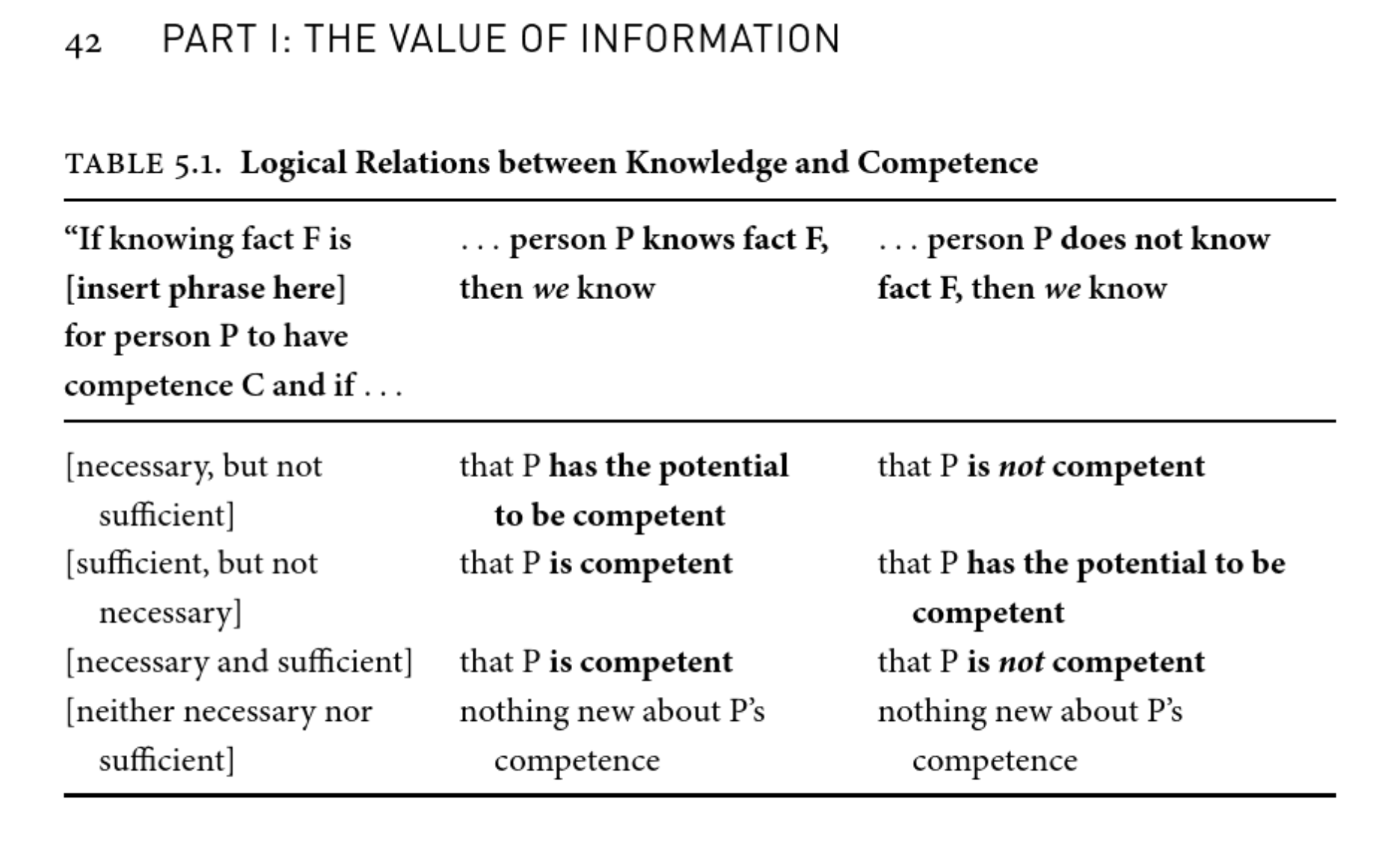

Lupia (2016)

- Offers an important distinction between:
  - Information
  - Knowledge
  - Competence

Lupia (2016)

Information: “Information is what educators can convey to others”

Knowledge is memories of how concepts and objects are related to each other.

- Knowledge requires Information

Competence is the ability to perform a task in a particular way

Competence requires knowledge, but not complete knowledge; how much is needed depends on the context and the decision

- Cues and heuristics can help

- Competence is contextual and political

What is necessary and sufficient to make competent decisions?

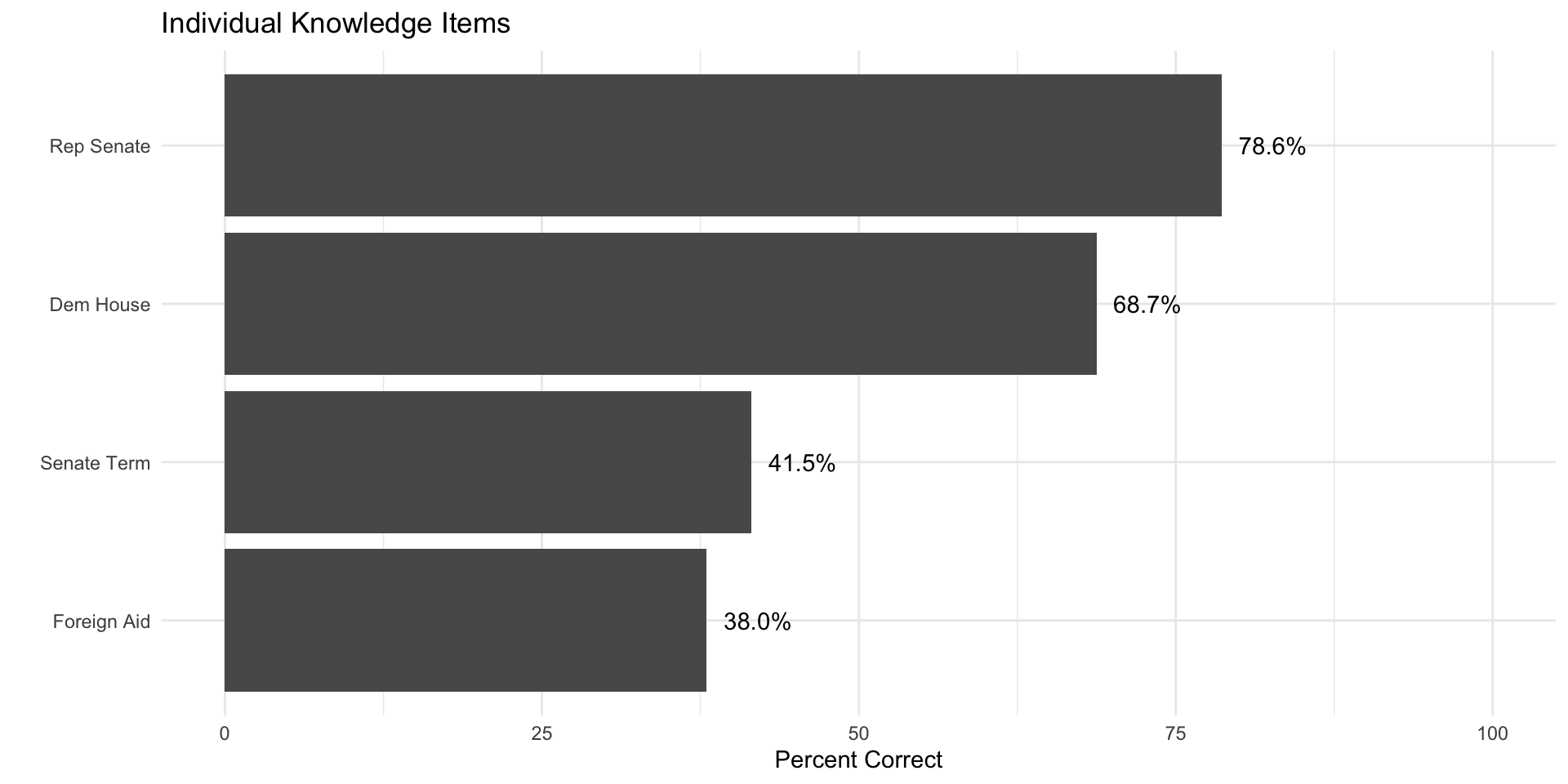

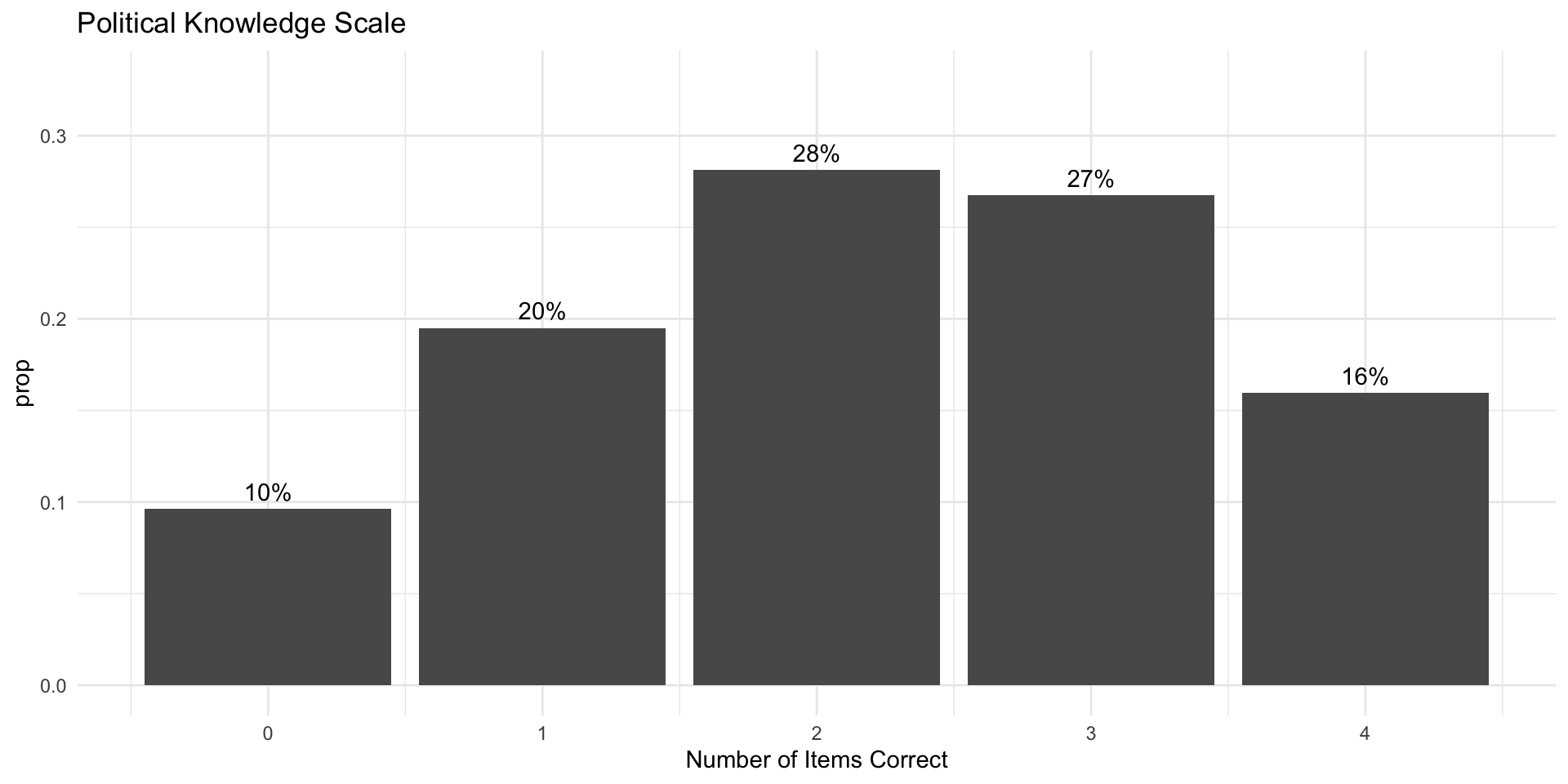

Political Knowledge in the 2020 ANES

Political Knowledge

Let’s take a look at political knowledge in the 2020 American National Election Study as measured by four items:

- Length of Senate Term

- Government spending on Foreign Aid

- Party control of House

- Party control of Senate

```r
# Packages (forcats and scales are called with pkg:: below)
library(dplyr)
library(tidyr)
library(ggplot2)

# Assumes `df` already holds the 2020 ANES pre-election data;
# negative ANES values are missing-data codes

## ---- Recode data ----

df %>%
  mutate(
    # Knowledge items: 1 = correct, 0 = incorrect, NA = missing code
    pk_senate_term = case_when(
      V201644 == 6 ~ 1,    # six years (correct)
      V201644 < 0 ~ NA,
      TRUE ~ 0
    ),
    pk_foreign_aid = case_when(
      V201645 == 1 ~ 1,    # 1 = foreign aid (correct)
      V201645 < 0 ~ NA,
      TRUE ~ 0
    ),
    pk_house = case_when(
      V201646 == 1 ~ 1,    # 1 = Democrats (correct)
      V201646 < 0 ~ NA,
      TRUE ~ 0
    ),
    pk_senate = case_when(
      V201647 == 2 ~ 1,    # 2 = Republicans (correct)
      V201647 < 0 ~ NA,
      TRUE ~ 0
    ),
    # As written, negative (missing) codes fall into the default (else)
    # category for sex, college_degree, and race rather than becoming NA
    sex = ifelse(V201600 == 2, "Female", "Male"),
    college_degree = ifelse(V201511x > 3, "College Degree", "No College"),
    race = case_when(
      V201549x == 1 ~ "White",
      V201549x == 2 ~ "Black",
      V201549x == 3 ~ "Hispanic",
      V201549x == 4 ~ "Asian",
      TRUE ~ "Other"
    ),
    race = forcats::fct_infreq(race),  # order levels by frequency
    income = case_when(
      V201617x < 0 ~ NA,
      TRUE ~ V201617x
    ),
    # Reverse-code V201005 (equivalent to 5 - V201005) so higher = more interest
    political_interest = ifelse(V201005 < 0, NA, (V201005 - 5) * -1)
  ) %>%
  mutate(
    # Additive scale: number of correct answers (missing items count as 0)
    political_knowledge = rowSums(select(., starts_with("pk")), na.rm = TRUE)
  ) -> df

## ---- Figures ----

## Individual items
df %>%
  summarise(
    `Senate Term` = mean(pk_senate_term, na.rm = TRUE),
    `Foreign Aid` = mean(pk_foreign_aid, na.rm = TRUE),
    `Dem House`   = mean(pk_house, na.rm = TRUE),
    `Rep Senate`  = mean(pk_senate, na.rm = TRUE)
  ) %>%
  pivot_longer(cols = everything(), names_to = "Item") %>%
  mutate(
    Item = forcats::fct_reorder(Item, value),
    `Percent Correct` = round(value * 100, 2)
  ) %>%
  ggplot(aes(Item, `Percent Correct`)) +
  geom_col() +
  coord_flip() +
  geom_text(aes(label = scales::percent(value)), hjust = -0.25) +
  ylim(0, 100) +
  labs(title = "Individual Knowledge Items", x = "") +
  theme_minimal() -> fig1

## Knowledge Scale
df %>%
  group_by(political_knowledge) %>%
  summarise(
    n = n(),
    prop = n / nrow(df),
    Percent = scales::percent(prop)
  ) %>%
  ggplot(aes(political_knowledge, prop)) +
  geom_col() +
  geom_text(aes(label = Percent), vjust = -0.5) +
  # Set limits inside the scale: a separate ylim() would replace this
  # scale and drop the percent axis labels
  scale_y_continuous(labels = scales::label_percent(), limits = c(0, 0.33)) +
  labs(x = "Number of Items Correct", title = "Political Knowledge Scale") +
  theme_minimal() -> fig2
```

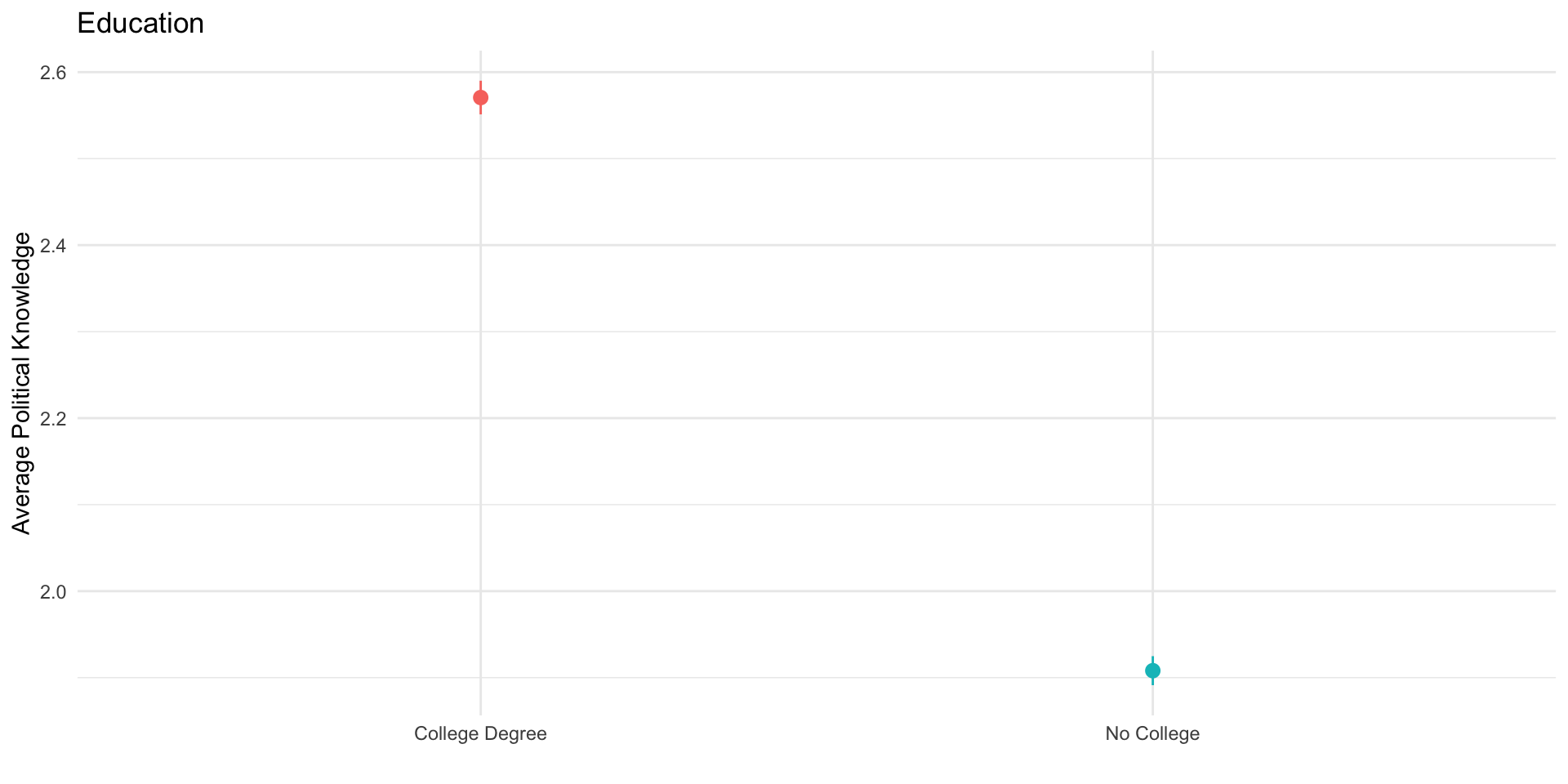

```r
# ---- Knowledge Gaps ----

# Education: stat_summary() defaults to mean_se(), the mean +/- 1 standard error
df %>%
  ggplot(aes(college_degree, political_knowledge, col = college_degree)) +
  stat_summary() +
  guides(col = "none") +
  theme_minimal() +
  labs(x = "", y = "Average Political Knowledge", title = "Education") -> fig3
```

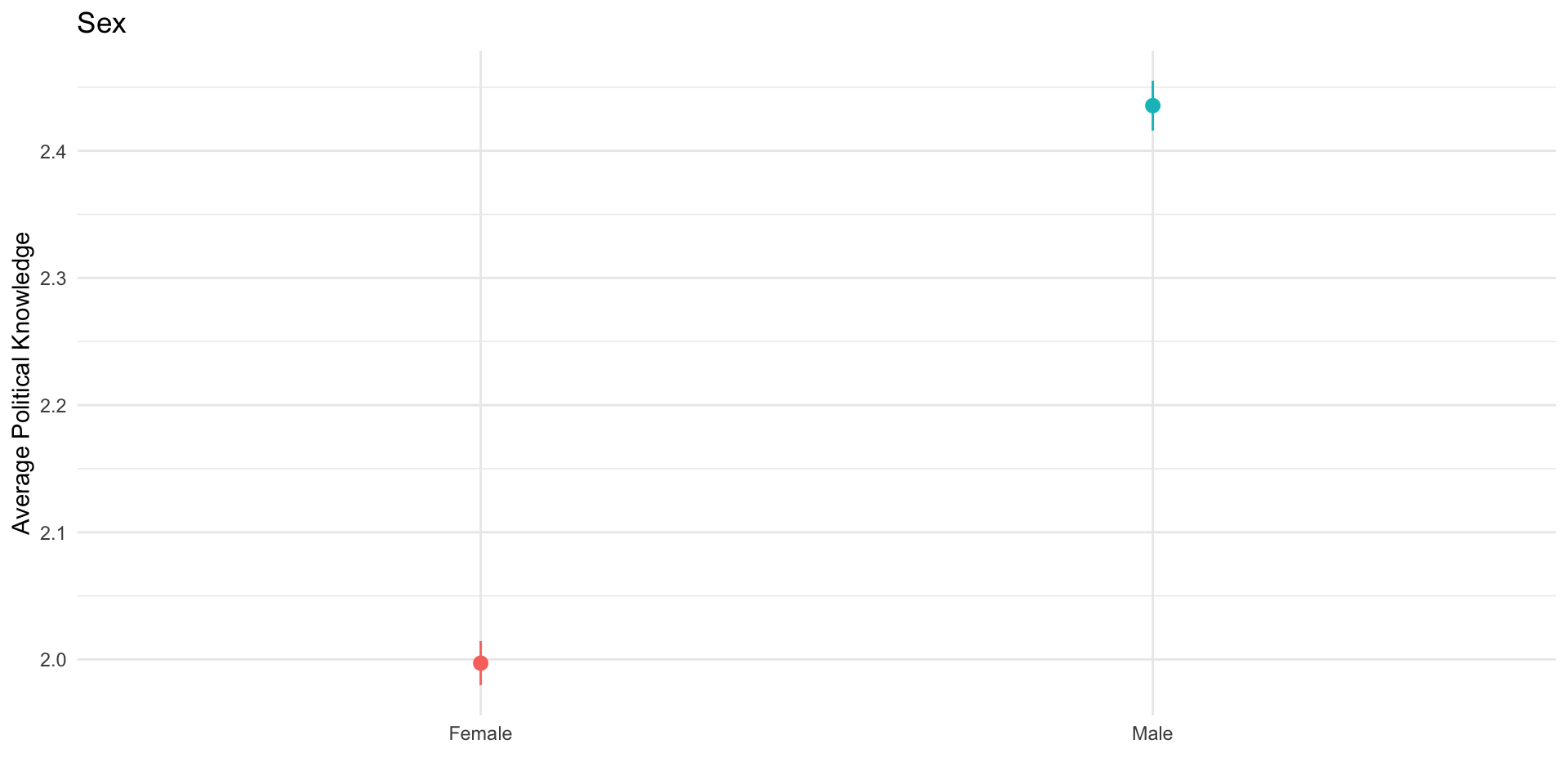

```r
# Sex
df %>%
  ggplot(aes(sex, political_knowledge, col = sex)) +
  stat_summary() +
  guides(col = "none") +
  theme_minimal() +
  labs(x = "", y = "Average Political Knowledge", title = "Sex") -> fig4
```

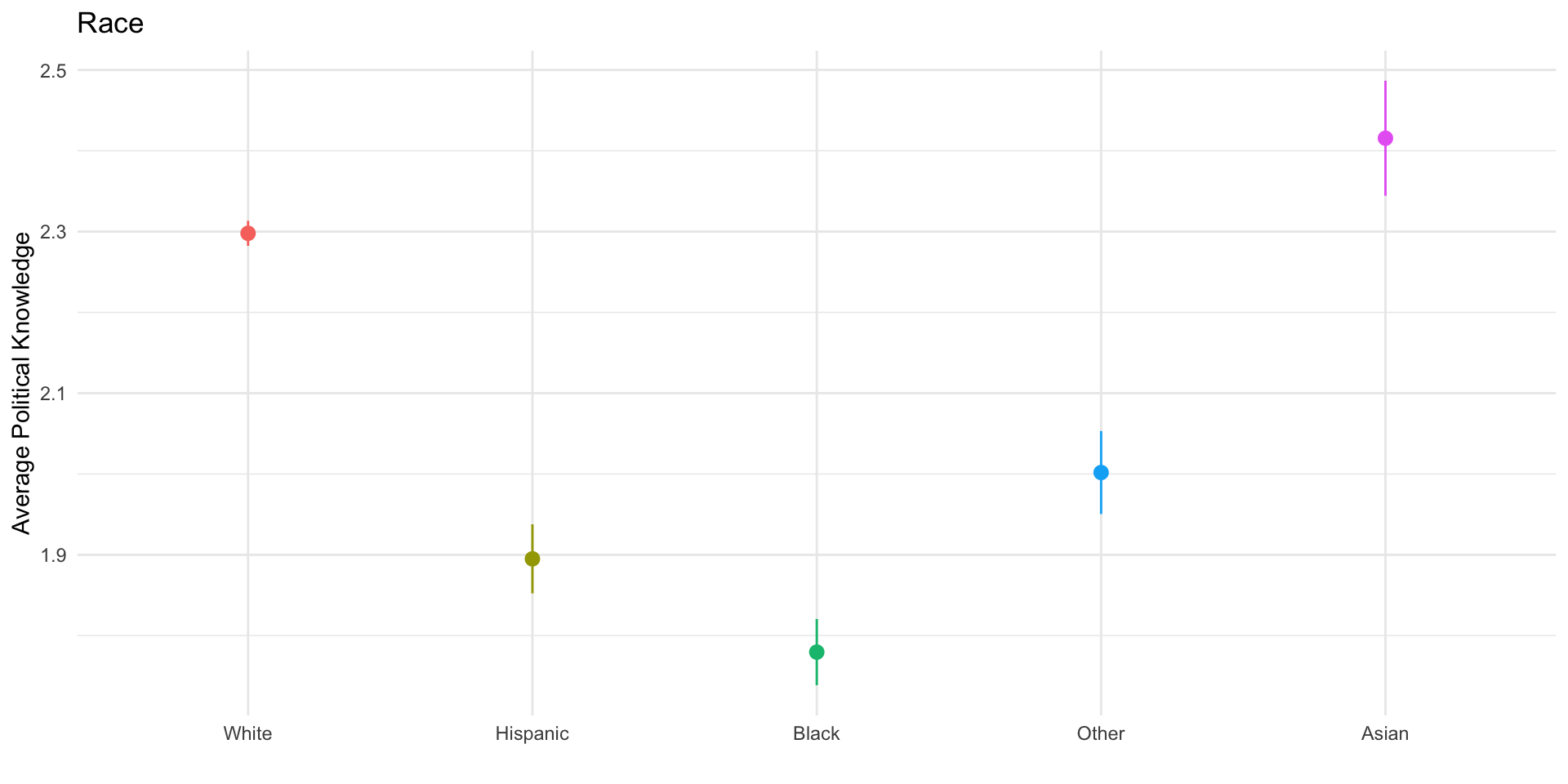

```r
# Race
df %>%
  ggplot(aes(race, political_knowledge, col = race)) +
  stat_summary() +
  guides(col = "none") +
  theme_minimal() +
  labs(x = "", y = "Average Political Knowledge", title = "Race") -> fig5
```

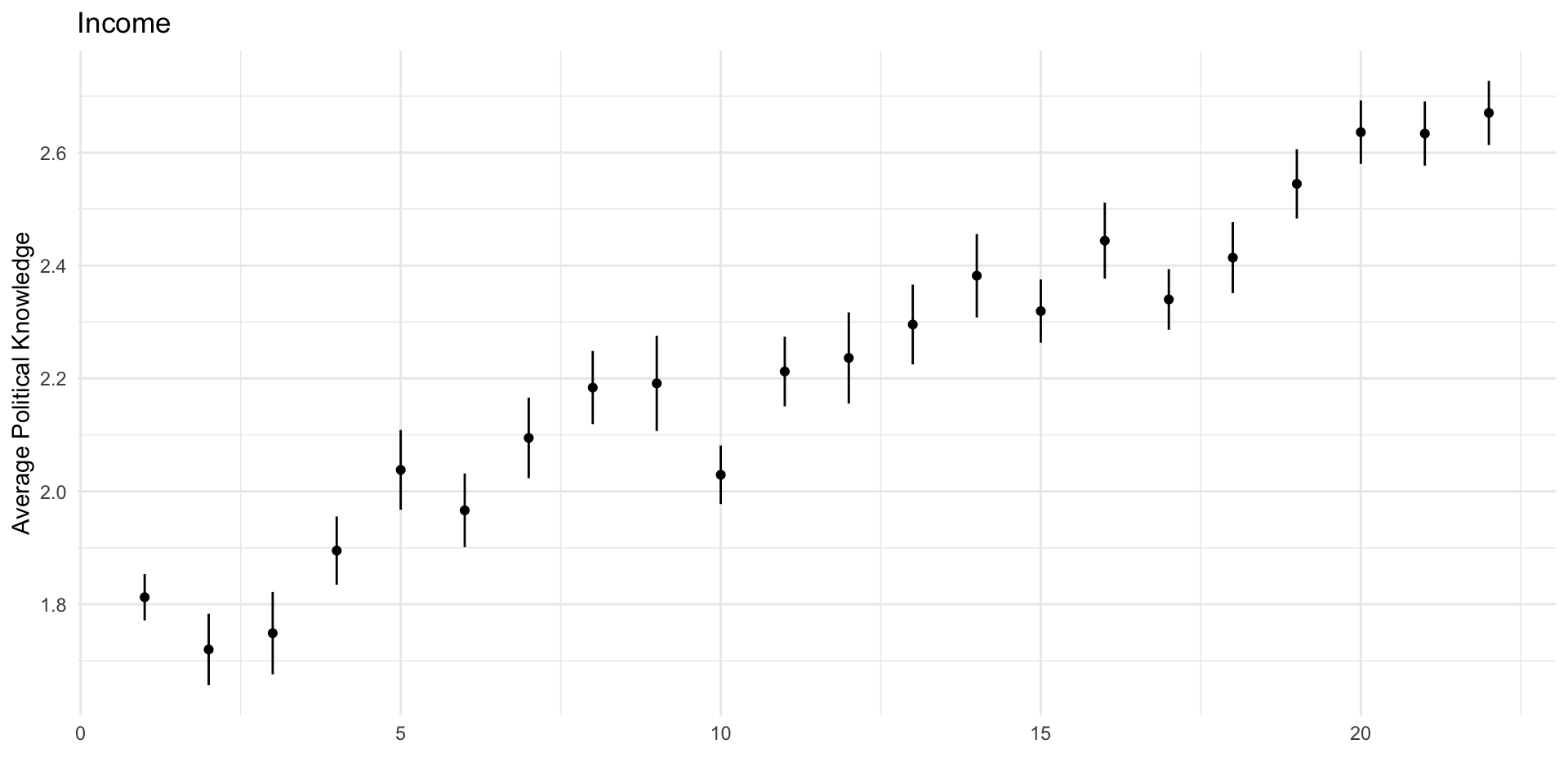

```r
# Income
df %>%
  ggplot(aes(income, political_knowledge)) +
  stat_summary(size = 0.2) +
  theme_minimal() +
  labs(x = "", y = "Average Political Knowledge", title = "Income") -> fig6

# ---- Models ----
library(estimatr)  # lm_robust(): OLS with robust standard errors

## Bivariate
m1 <- lm_robust(political_knowledge ~ college_degree, df)
m2 <- lm_robust(political_knowledge ~ sex, df)
m3 <- lm_robust(political_knowledge ~ race, df)
m4 <- lm_robust(political_knowledge ~ income, df)

## Multiple Regression
m5 <- lm_robust(political_knowledge ~
                  college_degree + sex + race + income + political_interest, df)
```

Knowledge Gaps


We could also explore knowledge gaps using regression. Recall:

Regression is a tool for estimating conditional means

Regression partitions variance into the share explained by specific factors
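A minimal sketch of both points, using eight invented scores rather than ANES data: with a single binary predictor, `lm()` returns the reference-group mean as the intercept and the difference in group means as the slope, and R² is the share of total variance the grouping accounts for. The same logic applies to Model 1 in the table, where the intercept is the average for respondents with a college degree.

```r
# Invented knowledge scores (0-4) for two education groups (not ANES data)
scores <- data.frame(
  knowledge = c(4, 3, 3, 2, 2, 1, 2, 1),
  degree    = rep(c("College", "No College"), each = 4)
)

# Conditional means computed directly
group_means <- tapply(scores$knowledge, scores$degree, mean)
group_means  # College 3.0, No College 1.5

# The same quantities from a regression with one binary predictor:
# intercept = mean of the reference group ("College", first alphabetically)
# slope     = No College mean minus College mean
fit <- lm(knowledge ~ degree, data = scores)
coef(fit)    # (Intercept) 3.0, degreeNo College -1.5

# R-squared: share of the total variance in knowledge explained by degree
r2 <- summary(fit)$r.squared
r2           # 0.6
```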

| | Model 1 | Model 2 | Model 3 | Model 4 | Model 5 |
|---|---|---|---|---|---|
| (Intercept) | 2.57*** | 2.00*** | 2.30*** | 1.73*** | 1.42*** |
| | (0.02) | (0.02) | (0.02) | (0.03) | (0.05) |
| No BA | -0.66*** | | | | -0.46*** |
| | (0.03) | | | | (0.03) |
| Male | | 0.44*** | | | 0.34*** |
| | | (0.03) | | | (0.02) |
| Hispanic | | | -0.40*** | | -0.18*** |
| | | | (0.05) | | (0.04) |
| Black | | | -0.52*** | | -0.26*** |
| | | | (0.04) | | (0.04) |
| Other | | | -0.30*** | | -0.08 |
| | | | (0.05) | | (0.05) |
| Asian | | | 0.12 | | 0.10 |
| | | | (0.07) | | (0.06) |
| Income | | | | 0.04*** | 0.02*** |
| | | | | (0.00) | (0.00) |
| Political Interest | | | | | 0.27*** |
| | | | | | (0.01) |
| R² | 0.07 | 0.03 | 0.02 | 0.06 | 0.19 |
| Adj. R² | 0.07 | 0.03 | 0.02 | 0.06 | 0.19 |
| Num. obs. | 8280 | 8280 | 8280 | 7665 | 7664 |
| RMSE | 1.16 | 1.18 | 1.19 | 1.15 | 1.07 |

***p < 0.001; **p < 0.01; *p < 0.05. Robust standard errors in parentheses.

Jerit, Barabas, and Bolsen (2006)

What’s the research question

What’s the point of this study?

Think, pair, share…

What’s the research question

- Jerit et al. argue that political knowledge is not a static concept, but instead varies over time as a function of the characteristics of individuals and their environments

What’s the theoretical framework

- What debate do the authors speak to?

- What are their theoretical contributions to this debate?

- What are the specific expectations from this theory?

What’s the theoretical framework

- Jerit et al. address a literature on knowledge gaps that:
  - highlights the importance of relatively stable individual factors such as education, income, gender, and race in predicting differences in knowledge
  - concludes that any changes in political knowledge are likely to benefit the “informationally rich”

What’s the theoretical framework

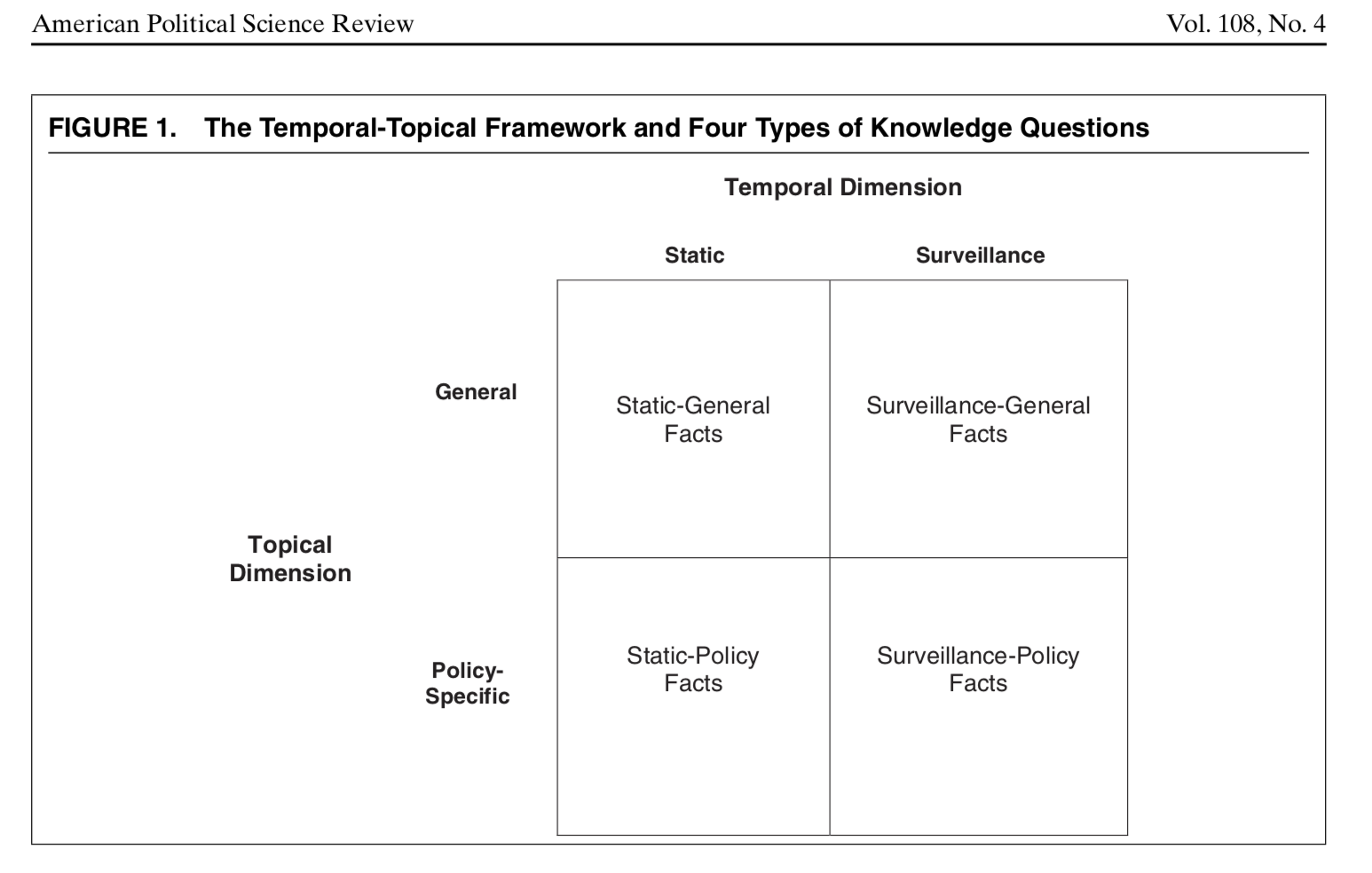

- Jerit et al. lay the foundation for a distinction made by Barabas et al. (2014) between the type and temporal dimensions of knowledge

What’s the theoretical framework

- Jerit et al. argue political knowledge will vary as both a function of characteristics of the individual (education) and the information environments in which they exist

What’s the theoretical framework

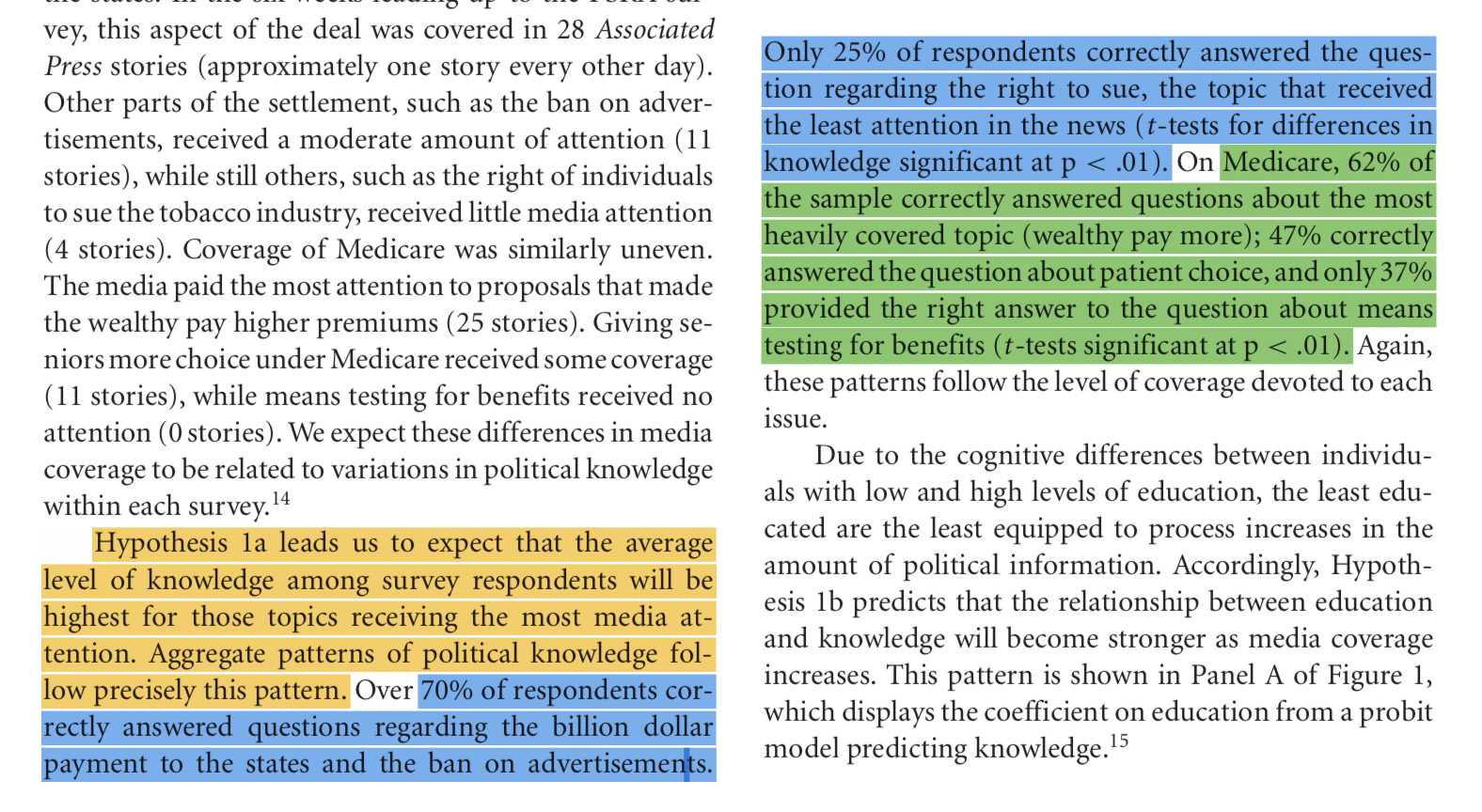

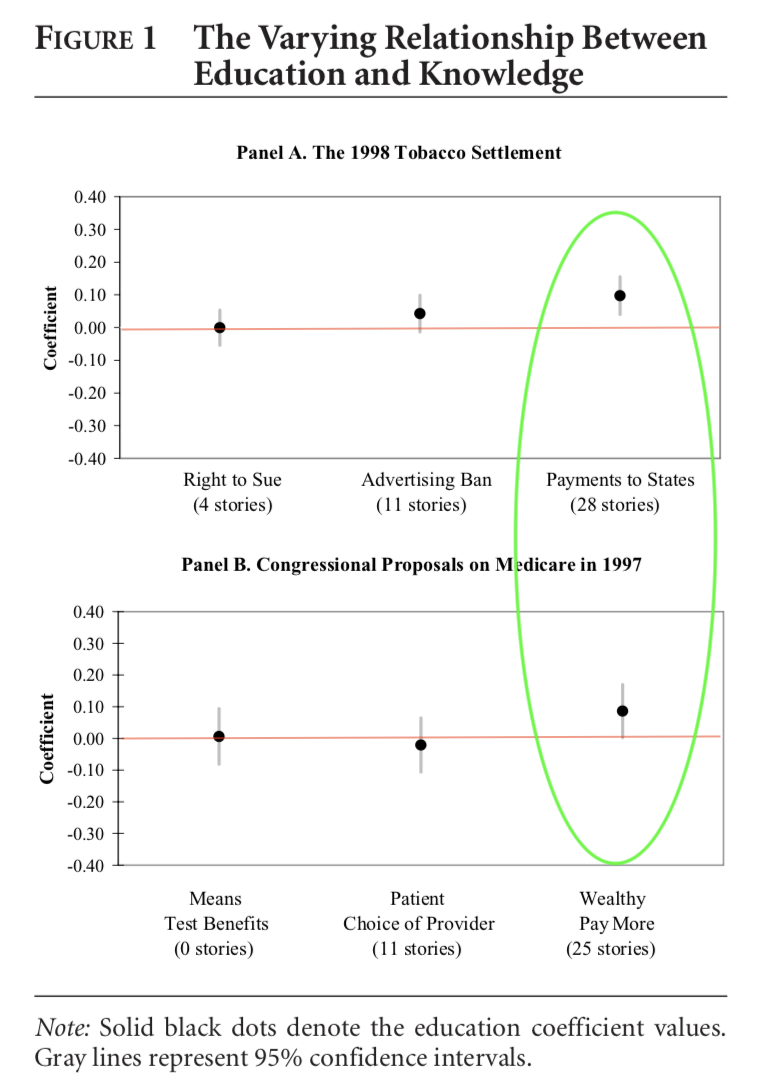

From page 268:

- H1a: Increases in the overall amount of media attention to an issue will increase the average amount of knowledge in the population

- H1b: The gap in knowledge between individuals with low and high levels of education also will increase

- H2a: All else held constant, increasing the amount of newspaper coverage will raise the average level of knowledge in the population, but it should primarily benefit those with high levels of education

- H2b: An increase in television coverage will raise the average level of knowledge in the population, but it will not alter the relationship between education and knowledge
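One way to see what H1a, H1b, and H2a imply is to write them as a regression with an interaction term. The sketch below uses invented cell-level shares (not Jerit et al.'s data) and a linear model, a simplification of the probit and multilevel models the authors estimate. The `coverage` coefficient is the gain from coverage among the less educated, and the `coverage:high_educ` interaction is how much the education gap changes per unit of coverage. Under H2b, the analogous interaction for television coverage would be close to zero.

```r
# Invented design: coverage level (0-3) crossed with education (0 = low, 1 = high)
cells <- expand.grid(coverage = 0:3, high_educ = c(0, 1))

# Invented share correct in each cell: coverage helps everyone (H1a)
# but helps the highly educated more, so the gap widens (H1b, H2a)
cells$share_correct <- with(
  cells,
  0.30 + 0.05 * coverage + 0.10 * high_educ + 0.05 * coverage * high_educ
)

# Linear model with an interaction; the invented data are noise-free,
# so the coefficients are recovered exactly
gap_fit <- lm(share_correct ~ coverage * high_educ, data = cells)
round(coef(gap_fit), 2)
# (Intercept) 0.30, coverage 0.05, high_educ 0.10, coverage:high_educ 0.05

# Education gap at each coverage level (rows are ordered by coverage in each group)
gap <- with(cells, share_correct[high_educ == 1] - share_correct[high_educ == 0])
gap  # 0.10 0.15 0.20 0.25
```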

What’s the empirical design

- What data and methods do the authors use?

What’s the empirical design

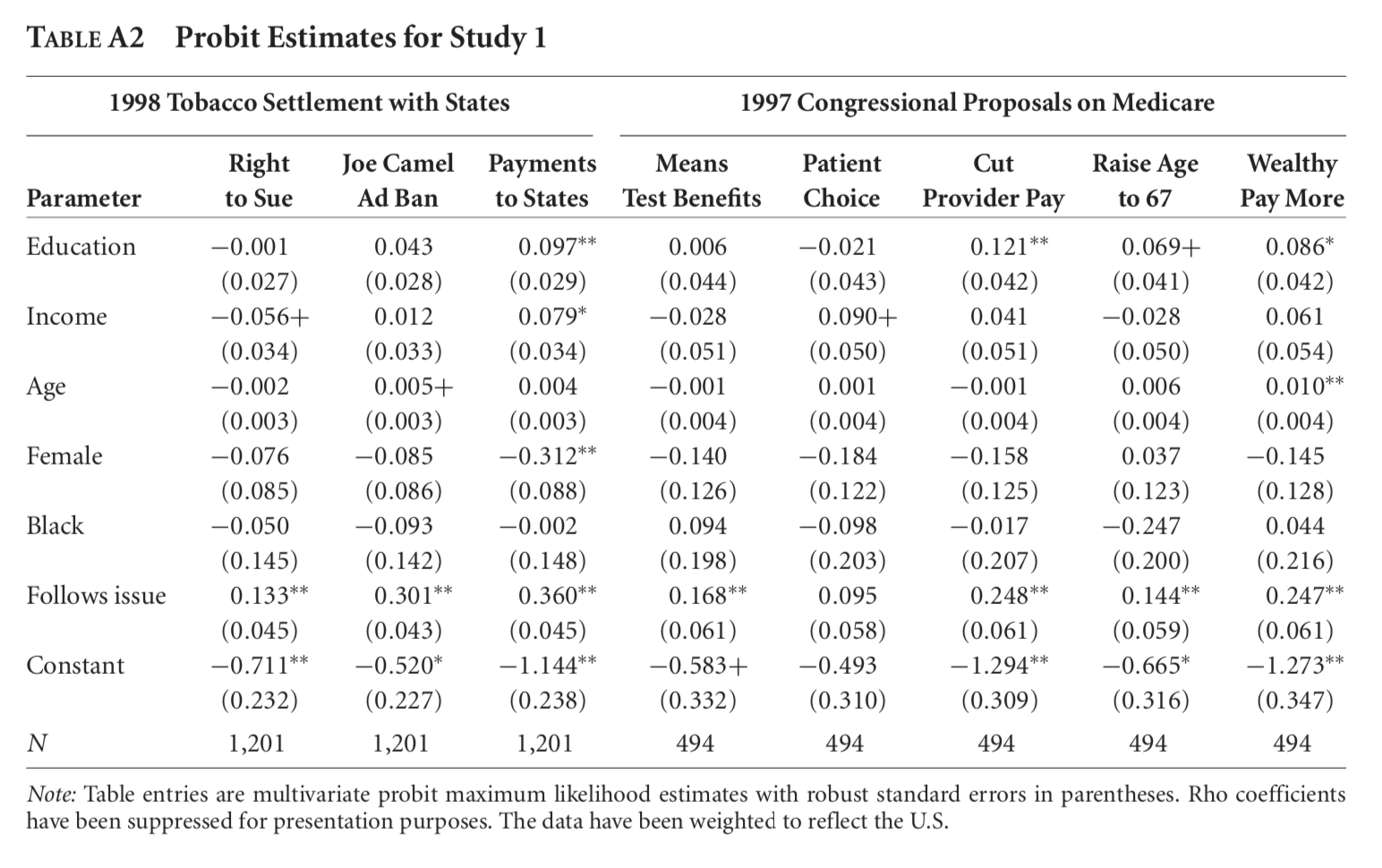

- Measure issue-specific “surveillance” knowledge from surveys on 41 issues (primarily health-related), paired with a content analysis of issue-specific coverage in the AP, broadcast TV news, and USA Today.

- Study 1 looks at variation in coverage across two issues using a probit regression

- Study 2 looks at variation in coverage and individual education across multiple issues using multilevel modeling

😉 What you need to know (WYNK)

- “Dichotomous”: taking one of two values (1 if correct, 0 otherwise)
- “We ran a multivariate probit…”: they used a special type of regression for data with a binary (0 or 1) outcome
- “A multilevel model”: they pool data across surveys and model variation at the level of both the individual \(i\) and the issue \(j\)
- “The grand mean”: the mean for the entire sample
- “ANOVA” (analysis of variance): how much variation is at the individual vs. issue level
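The sketch below simulates invented pooled data (20 hypothetical issues with 200 hypothetical respondents each; the seed, coefficients, and sample sizes are all assumptions) to show three of these terms in base R: a probit regression via `glm()` with a probit link, the grand mean, and an ANOVA-style split of the variation in a 0/1 knowledge outcome into between-issue and within-issue parts. It is not a multilevel model; fitting one, as Jerit et al. do, would need an extension package, so the between-issue share here is only a rough descriptive analogue of an issue-level variance component.

```r
set.seed(1140)  # arbitrary seed for reproducibility

# Invented pooled data: 20 hypothetical issues (j), 200 respondents each (i)
n_issues <- 20
n_per    <- 200
issue    <- rep(seq_len(n_issues), each = n_per)
educ     <- rbinom(n_issues * n_per, 1, 0.4)          # 1 = college degree
coverage <- rep(runif(n_issues, 0, 3), each = n_per)  # issue-level coverage

# Probit data-generating process: answer is correct when a latent index > 0
latent  <- -0.5 + 0.4 * educ + 0.3 * coverage + rnorm(n_issues * n_per)
correct <- as.numeric(latent > 0)                     # dichotomous outcome

# Probit regression: glm() with a probit link for a 0/1 outcome
probit_fit <- glm(correct ~ educ + coverage,
                  family = binomial(link = "probit"))
summary(probit_fit)$coefficients

# Grand mean: share correct across the whole pooled sample
grand_mean <- mean(correct)

# ANOVA-style decomposition: total variation = between-issue + within-issue
issue_means   <- ave(correct, issue)                  # each row's issue mean
between_ss    <- sum((issue_means - grand_mean)^2)
within_ss     <- sum((correct - issue_means)^2)
share_between <- between_ss / (between_ss + within_ss)
share_between  # share of variation that lies between issues
```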

What are the results

- What are the hypotheses?

- What figures and tables provide evidence in support of each claim?

What are the results

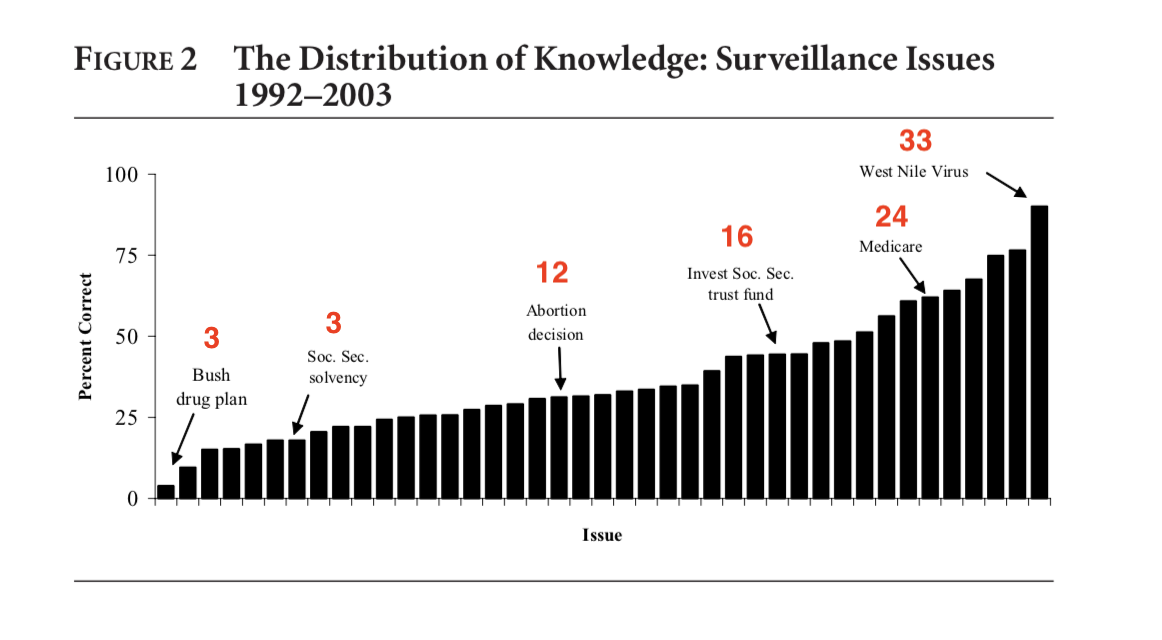

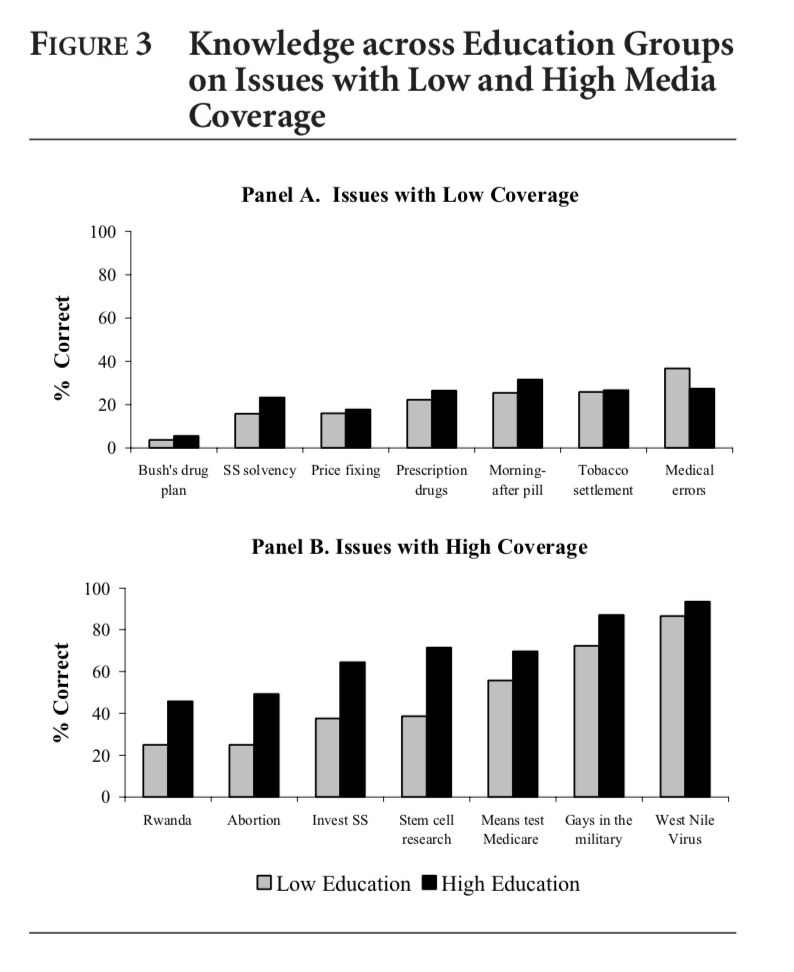

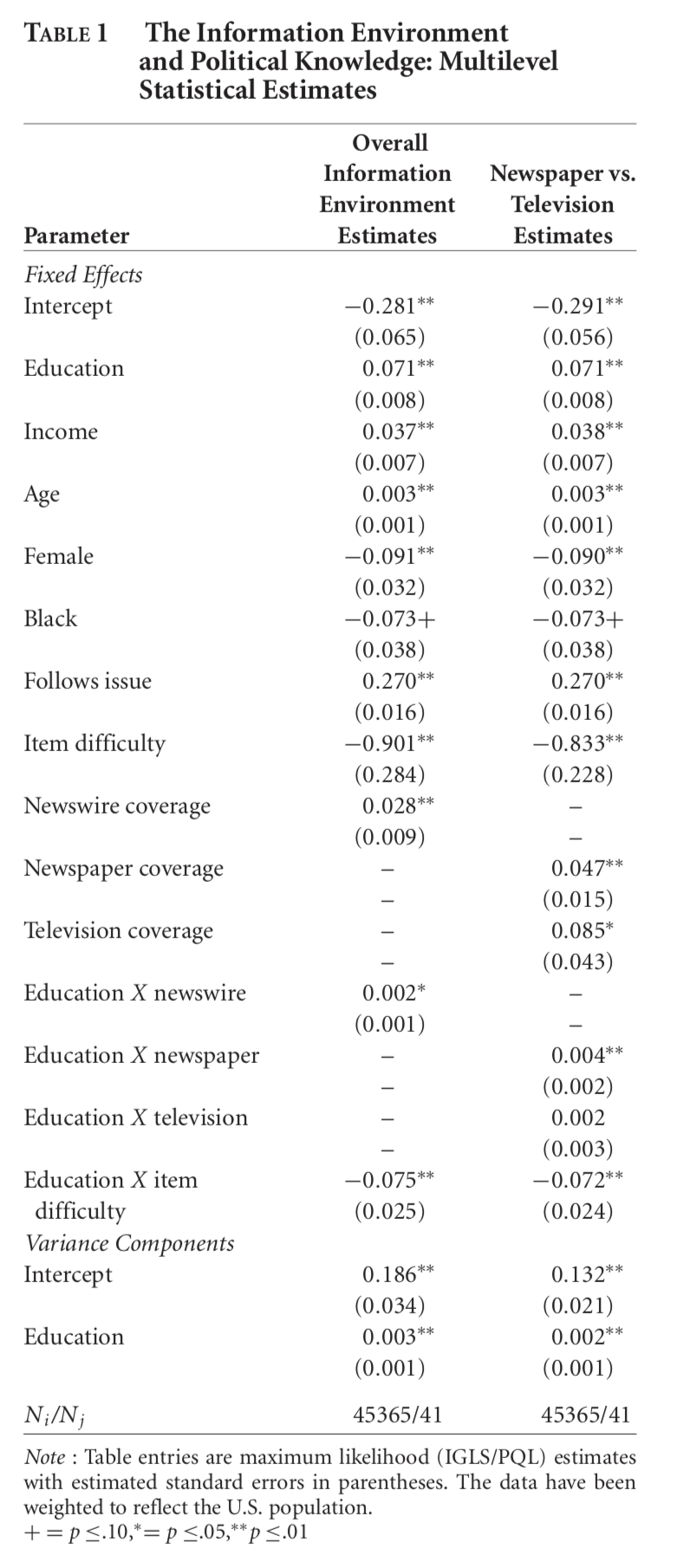

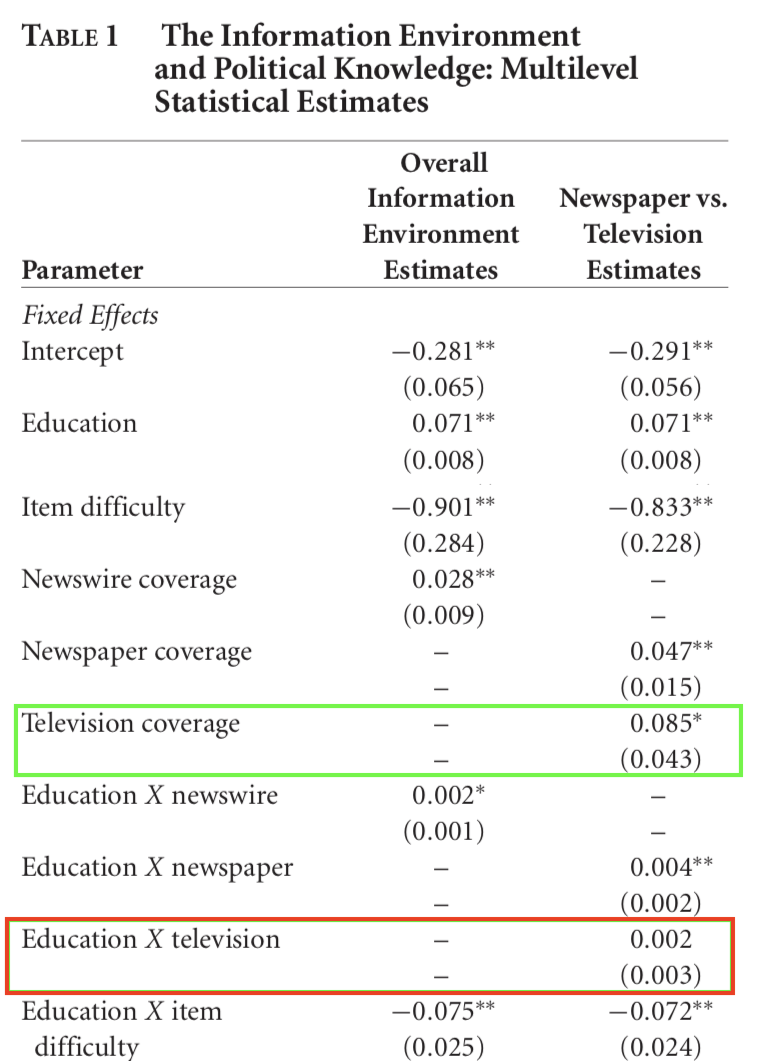

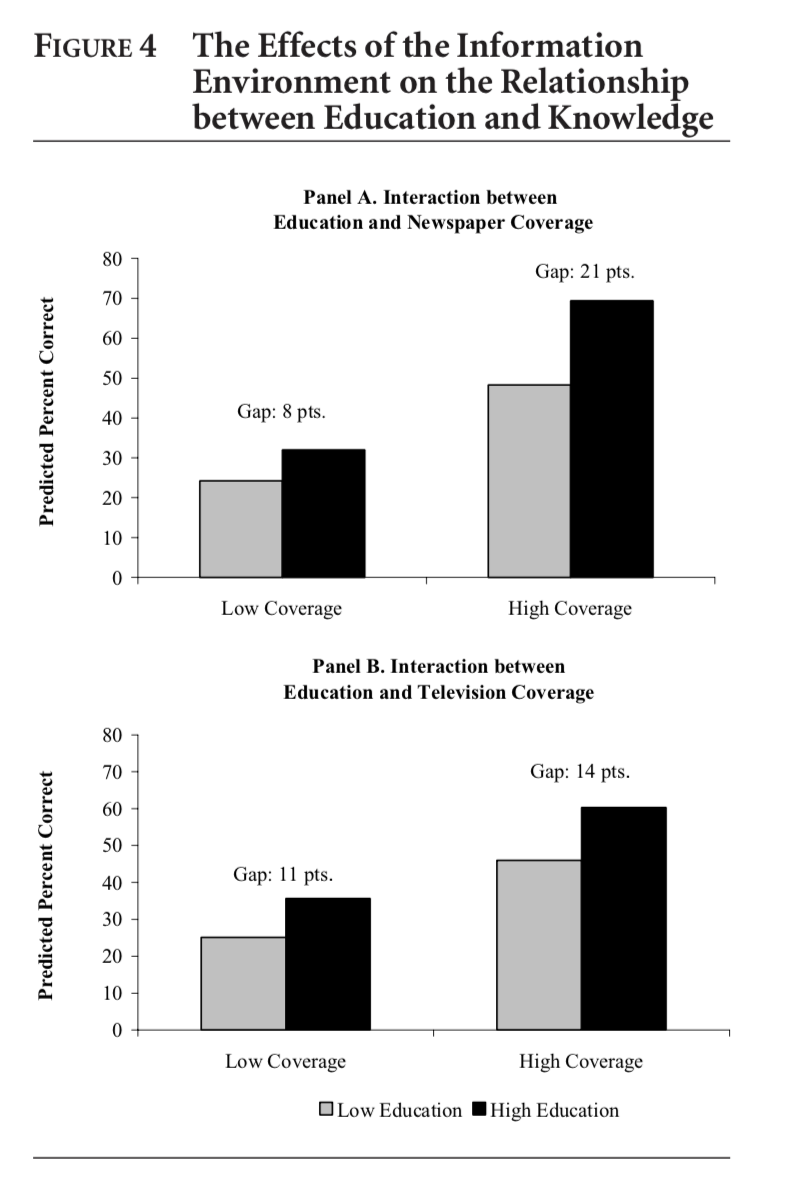

- H1a

- Text page 271, Figure 2, Figure 3, Table 1, Figure 4

- Text page 271, Figure 2, Figure 3, Table 1, Figure 4

- H1b

- Figure 2, Figure 3, Table 1, Figure 4

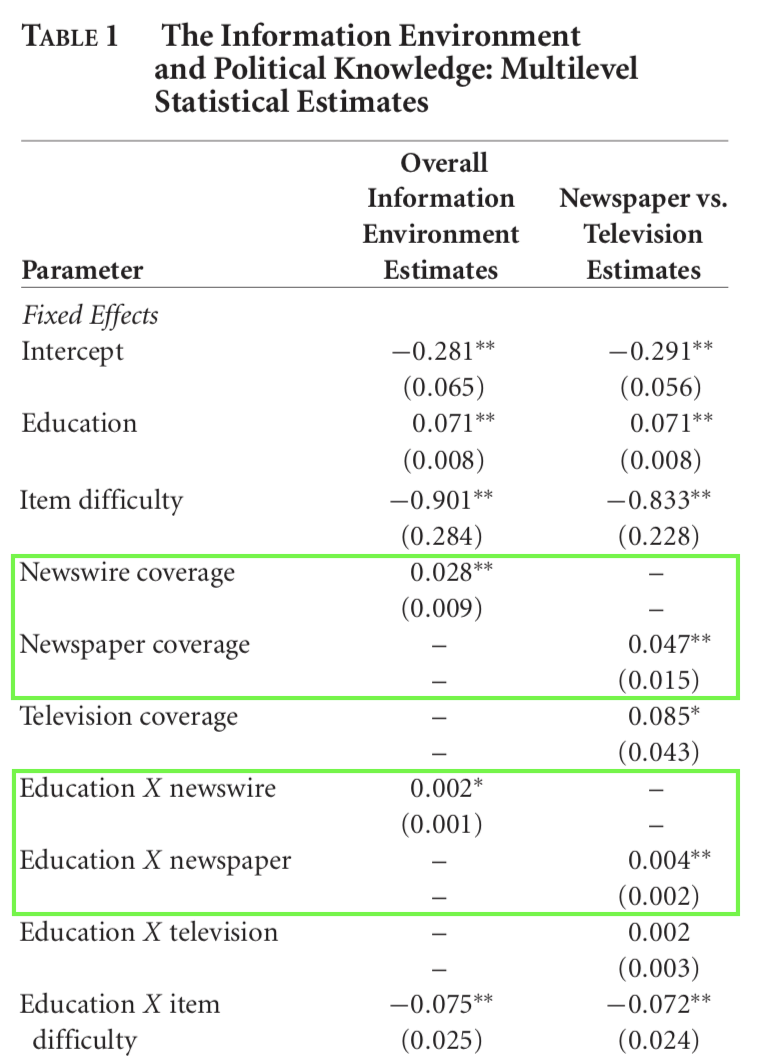

- H2a

- Table 1, Figure 4

- H2b

- Table 1, Figure 4

Increasing Media Coverage Increases Knowledge (H1a)

Knowledge Gaps Increase When There is More Coverage (H1b)

Is this Always True?


Increasing Media Coverage Increases Knowledge (H1a)

Knowledge Gaps Increase with more Media Coverage (H1b)

Newspaper Coverage Benefits the Informationally Rich (H2a)


Television Coverage Offers Wider Benefits (H2b)

Media Coverage and Knowledge Gaps

What are the conclusions

- What are some potential concerns about this study?

- What are the broader conclusions

Weaver, Prowse, and Piston (2019)

Take a few moments

- What’s the research question

- What’s the theoretical framework

- What’s the empirical design

- What are the results

- What are the conclusions

What’s the research question

Prevailing accounts of citizen competence suggest citizens have:

- Too little knowledge \(\to\) too much power

WPP invert this claim, contending marginalized groups often have:

- Too much knowledge \(\to\) Too little power

What’s the theoretical framework

WPP intervene in the political knowledge literature, which:

Finds citizens possess low levels of factual knowledge

Argues this ignorance may undermine democratic decision-making

In some cases, suggests citizens exert too much influence (Quirk & Hinchliffe 1998)

What’s the theoretical framework

Following Cramer and Toff (2017), they think of political knowledge in terms of people’s lived experiences

Civic competence depends less on factual recall and more on how people interpret and deploy political experience

What’s the theoretical framework

WPP’s work builds on theories of policy feedback

- Policy Feedback examines the way policy experiences shape citizens’ attitudes and behaviors

WPP, in particular, focus on the “second face” of government, which deals with social control

WPP argue that citizens in highly policed communities possess extensive knowledge of the state’s coercive face — and that this knowledge reflects democratic failure rather than democratic competence.

What’s the empirical design

- 233 conversations between Black residents across 13 highly policed neighborhoods


- Interpretive, inductive analysis of dialogue

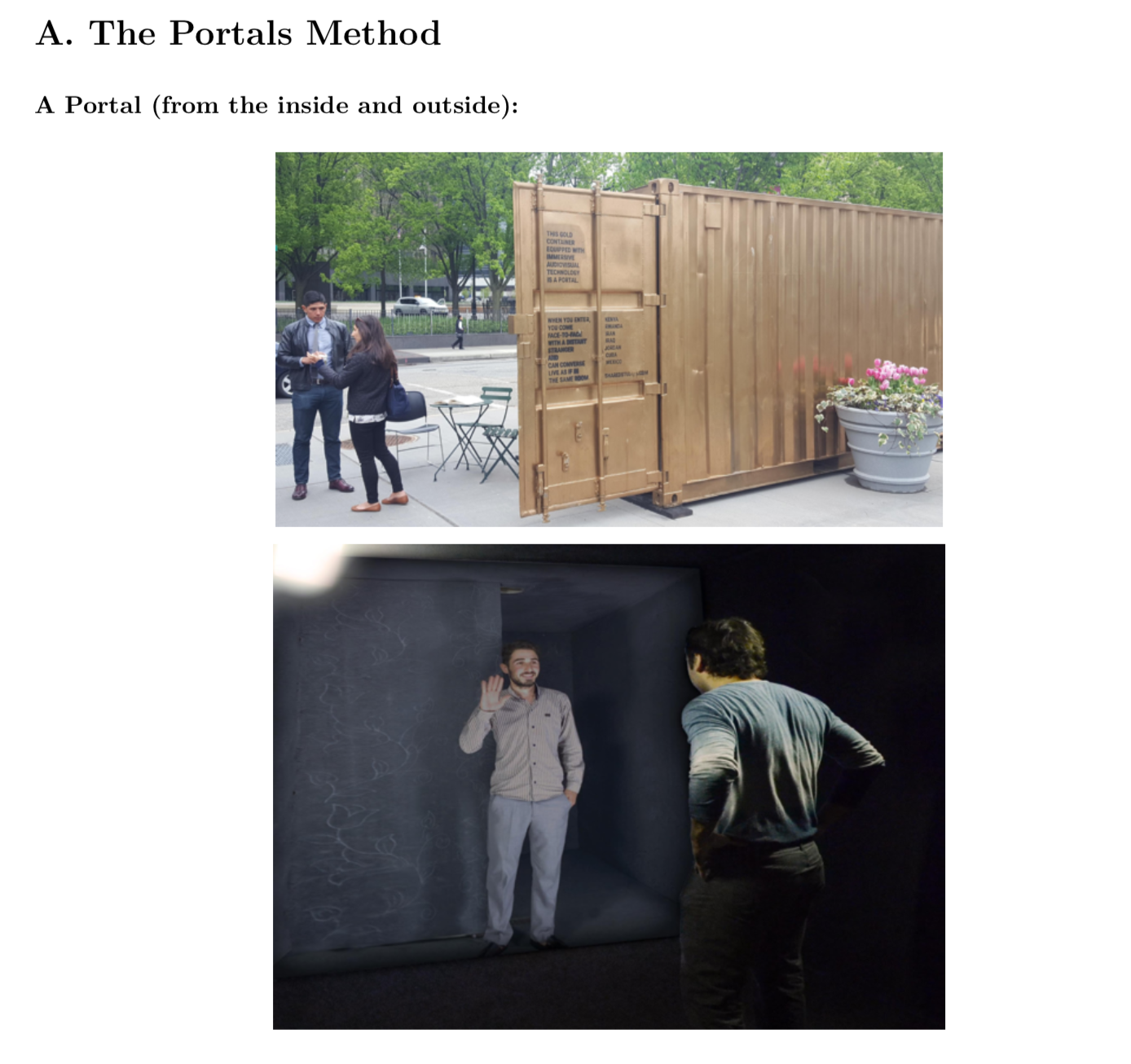

Conversations with Strangers

The Portal does two things simultaneously, providing the opportunity and space to discuss a topic and connecting them to those who can be assumed to be knowledgeable about that topic.

Yes, but how representative are these portals?

Portals participants are not a strictly random sample and we cannot say how representative they are of communities of interest.

We are after richer data that reveals not just a snapshot of opinion that is “representative,” but how people reason together, how they frame things in their own words not those of the survey researcher, and how they develop a theory of state action and power.

Second, we would be more concerned about representativeness or bias if we were testing hypotheses about the distributions of attitudes (how many) or causal relationships between variables (how related), studies based on a “sampling logic.”

Finally, existing large-N surveys are notoriously inadequate at capturing the experiences of highly policed communities.

Summary: Concerns about representativeness

Not designed to estimate population distributions

Designed to understand reasoning processes

Survey methods under-capture highly policed communities

Goal: depth, not representativeness

What are the results

Take a few moments to share your understanding of the study’s findings with your neighbor

Too Much Knowledge, Too Little Power

The crux of their argument follows from their summary of the initial portal conversation (pp. 1155-1158) which they argue reveals:

- Knowledge rooted in repeated, often involuntary encounters with police

- Extensive factual knowledge (Names of public officials, scandals)

- Knowledge of “unwritten rules”

- Multiple sources of information (personal experience, vicarious experience, media coverage)

- Official stories vs. counter-narratives (overt vs. hidden curriculum; official law vs. lived law)

What are the results

Participants exhibit dual knowledge:

What the state claims to do

What the state actually does

Knowledge is:

Deep, specific, experiential

Often acquired involuntarily

Reinforced by community and media

Knowledge functions as:

A strategy of self-preservation

A way to navigate risk

A tool for distancing from state oversight

Conclusion:

- Residents have “too much knowledge, too little power”

Broader Implications

What counts as political knowledge?

Should experiential knowledge be treated as epistemically privileged?

Does this redefine democratic competence?

What does this imply about measuring political knowledge with surveys?

When does this knowledge lead to withdrawal rather than participation?

Attendance Survey

Please complete the following survey

Misinformation

Motivating questions

- What is misinformation?

- Why do people become misinformed?

- Can we correct misinformation?

- Are reported misperceptions sincere?

What is misinformation

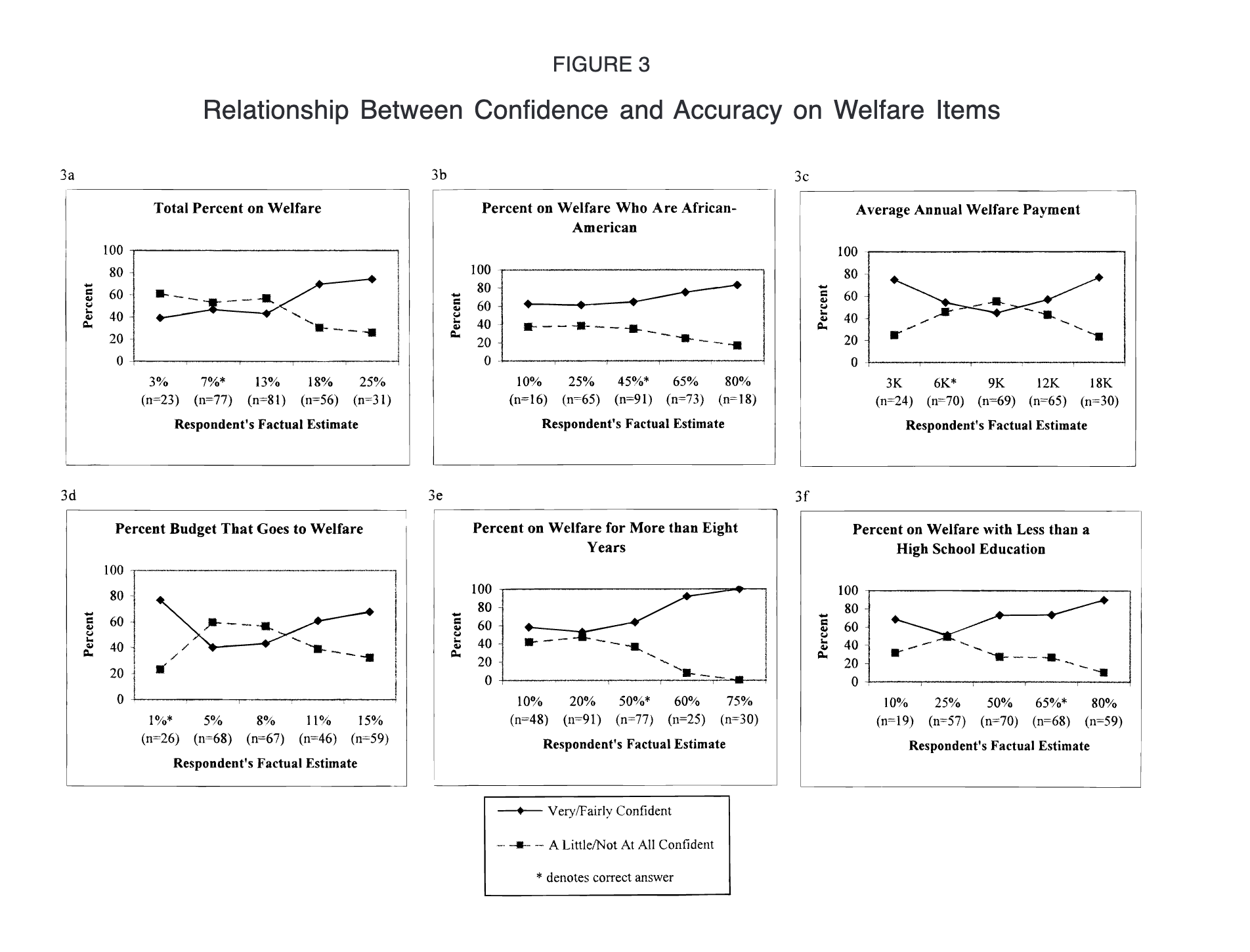

- Kuklinski et al. (2000): “People are misinformed when they confidently hold wrong beliefs”

Rumors

Lack evidentiary standards

May turn out to be true

Conspiracy beliefs

Explain events via hidden, powerful actors

Often tied to stable psychological predispositions

Misinformation

Unambiguously false

Confidently held

Interpretation:

- The people who give the most inflated, factually wrong answers are often the most confident.

Origins of misinformation?

Jerit and Zhao (2020) (pp 79-81) review some psychological explanations, emphasizing different cognitive motivations:

- Accuracy motives \(\to\) correct decisions

- Directional motives \(\to\) consistent decisions

On this view, misinformation often reflects motivated reasoning: a directional desire to maintain consistency with one’s prior beliefs.

Directional motives are often the default in politics.

Identity-linked issues activate them most strongly.

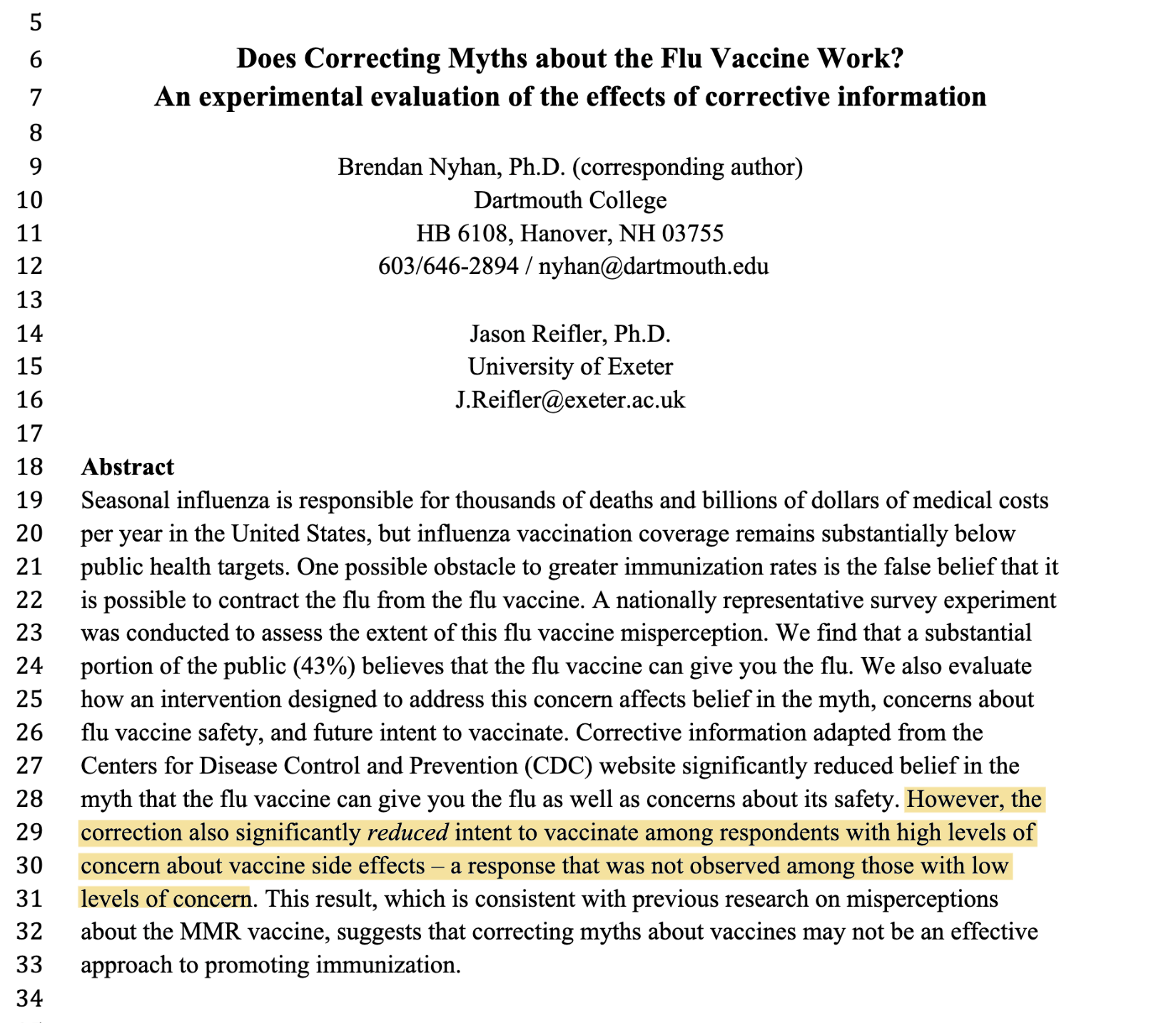

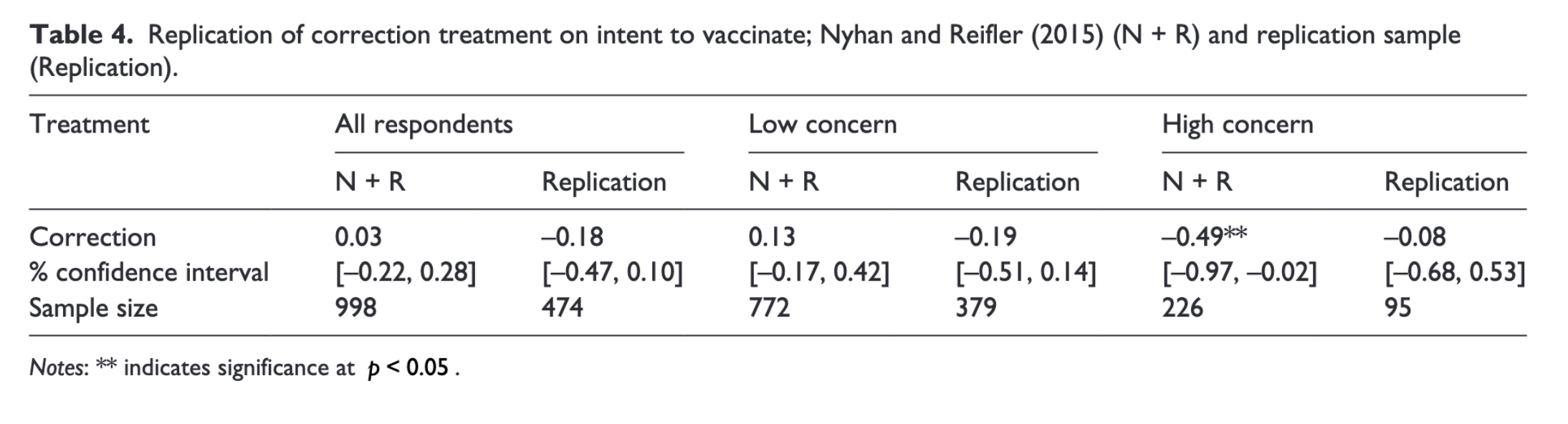

Correcting Misinformation

The literature is extensive but theoretically fragmented.

Success depends on the issue, the correction, and the individual

- Can you find concrete examples? (p. 83)

What counts as success?

- Changed beliefs?

- Changed attitudes?

- Both?

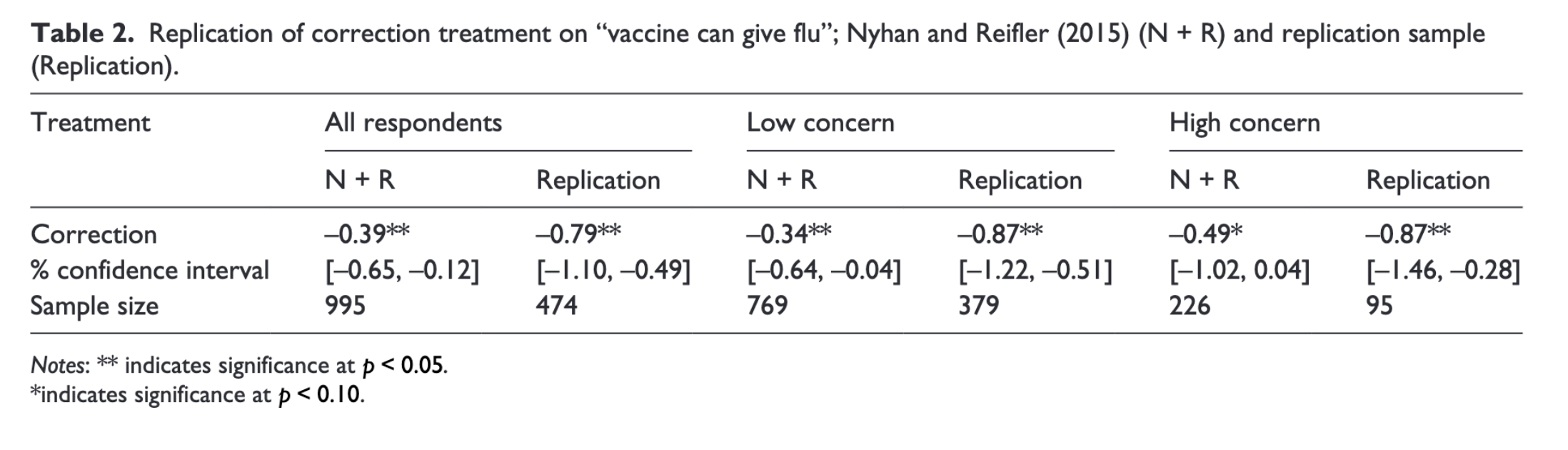

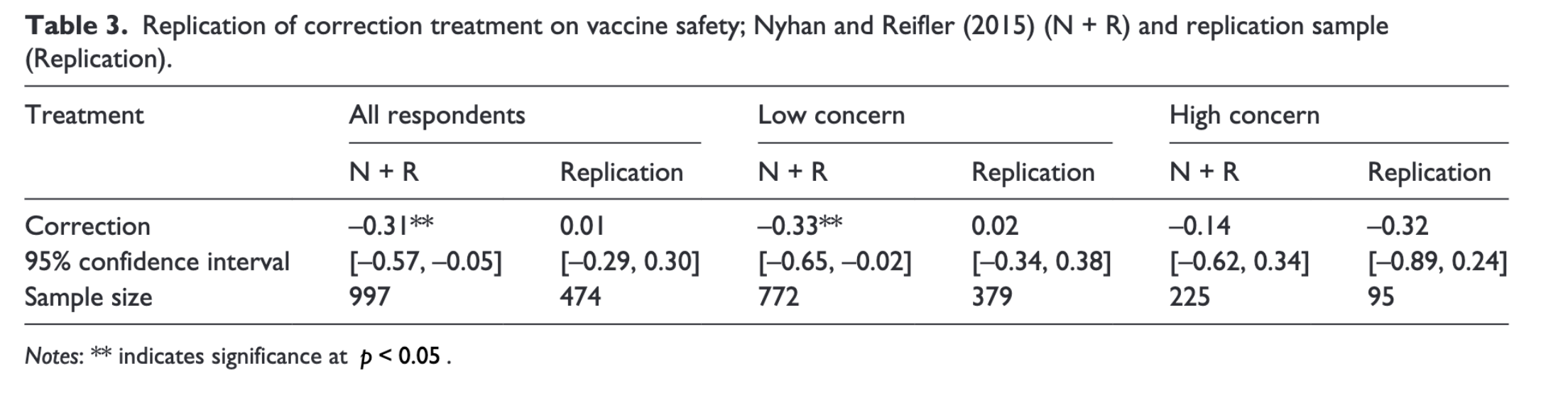

Possibility for corrections to backfire

Backfire Effects

Measuring misinformation

Conceptually, misinformation involves confidently holding false beliefs

- But many studies fail to measure confidence
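To see why confidence matters for measurement, here is a small sketch loosely following the Kuklinski et al. (2000) definition quoted earlier. The responses, the 1 to 5 confidence scale, the cutoff of 4, and the choice to treat correct-but-unsure answers as "uninformed" are all assumptions for illustration. An accuracy-only measure would score the last three respondents identically; adding confidence separates the misinformed from the merely uninformed.

```r
# Invented responses: whether the factual answer was right, plus
# self-rated confidence on an assumed 1-5 scale
resp <- data.frame(
  correct    = c(TRUE, TRUE, FALSE, FALSE, FALSE),
  confidence = c(5, 2, 5, 1, 4)
)

# Hypothetical helper; the cutoff of 4 for "confidently held" is an assumption
classify_response <- function(correct, confidence, cutoff = 4) {
  confident <- confidence >= cutoff
  ifelse(confident & correct, "informed",
         ifelse(confident & !correct, "misinformed", "uninformed"))
}

resp$status <- classify_response(resp$correct, resp$confidence)
resp$status
# "informed" "uninformed" "misinformed" "uninformed" "misinformed"
```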

Some scholars have proposed that misinformation is partly a form of expressive responding or partisan cheerleading, and that partisan gaps shrink substantially when we incentivize correct responses (Bullock et al. 2015)

- If partisan gaps disappear under incentives, are we observing misinformation — or expressive identity signaling?

What do we make of the directions for further research on p. 88?

Broader Implications:

Think in terms of larger questions of citizen competence.

Why does it matter if citizens:

- are confidently wrong?

- are strategically expressive?

- update their factual beliefs, but not their subsequent interpretations?

- only correct their beliefs when their identity is not threatened?

Next week

Readings for Political Cognition

By Monday:

- Zaller and Feldman (1992)

By Wednesday:

- Lodge and Taber (2013)
